When it comes to inaccurate data, what’s worse: a false negative, or a false positive? The question is a little unfair, because of course, nobody wants either. But it does bring to mind the fact that false negatives are often regarded as the more problematic type of inaccuracy. A false negative means that something (usually bad) has been missed. What you sought to uncover is actually out there, but you didn’t find it. As in, an X-ray that didn’t pick up a suspicious tumor. Or a fingerprint analysis that didn’t correctly identify the criminal.

False positives, on the other hand, tend to be thought of as harmless false alarms. You thought you found something nefarious, but it’s actually benign. “The doctor told me I had a concerning diagnosis, but it turns out I’m healthy.” Phew!

However, in the realm of cybersecurity, false positives can be just as problematic as false negatives. Missing evidence of a malicious threat (your false negative) is bad, no doubt. But exhausting time and resources investigating something you think is malicious but isn’t (your false positive) is bad too.

False Positives Are Prevalent

New research finds that threat hunters view false positives as one of their top challenges. In our forthcoming State of Threat Hunting Report, which surveyed over 200 threat hunters in North America and Europe, respondents said that eliminating false positives was one of the top three things that would make their jobs easier. Approximately ⅓ of respondents said that more than 20% of their threat hunting results within the last year were false positives.

Why False Positives Are Problematic

1. Alert Fatigue and Desensitization

One of the most immediate and consequential impacts of false positives is alert fatigue. When security teams are inundated with a barrage of alerts, many of which turn out to be false alarms, the natural response is desensitization. Over time, teams may become complacent or, worse, ignore alerts altogether, creating an environment where genuine threats could slip through undetected.

2. Wasted Time and Effort

False positives demand teams’ attention, investigation, and resources. Every moment spent chasing down a benign event is a moment not spent on addressing real threats. This diversion of resources can lead to inefficiencies and unnecessary strain on cybersecurity teams, hindering their ability to focus on the actual security concerns at hand.

3. Erosion of Trust in Security Tools

The repeated occurrence of false positives erodes the trust that teams place in their security tools. When threat hunters can’t rely on the accuracy of their tools, it undermines the credibility of the entire security infrastructure. This erosion of trust can have far-reaching consequences, potentially leaving organizations vulnerable to actual threats.

4. Poor Decision-Making

In worst-case scenarios, false positives can prompt leadership to make unwise decisions. If a CISO believes that the organization faces a truly credible threat, or that it has been successfully breached, they might launch an incident response motion that involves alerting the C-suite, board members, or even customers. Walking back these kinds of alerts can diminish trust in the org’s cybersecurity team and leadership.

Common Causes of False Positives

1. Overly Aggressive Signatures

Security tools that employ overly aggressive, signature-based detection methods may trigger false positives by misinterpreting normal or benign activity as malicious. Tuning these signatures to strike the right balance between sensitivity and specificity is a crucial part of reducing the likelihood of false positives.
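To make the sensitivity/specificity trade-off concrete, here is a minimal Python sketch. The event counts are invented purely for illustration, and `detection_metrics` is a hypothetical helper, not part of any particular security tool.

```python
def detection_metrics(tp, fp, tn, fn):
    """Return (sensitivity, specificity, precision) for a detection rule."""
    sensitivity = tp / (tp + fn)  # share of real threats the rule caught
    specificity = tn / (tn + fp)  # share of benign events correctly left alone
    precision = tp / (tp + fp)    # share of alerts that were real threats
    return sensitivity, specificity, precision

# Hypothetical counts: 10,000 events, 50 of them truly malicious.
aggressive = detection_metrics(tp=49, fp=995, tn=8955, fn=1)
tuned = detection_metrics(tp=45, fp=100, tn=9850, fn=5)

for name, (sens, spec, prec) in [("aggressive", aggressive), ("tuned", tuned)]:
    print(f"{name}: sensitivity={sens:.2f} specificity={spec:.2f} precision={prec:.2f}")
```

In this made-up scenario, the aggressive signature catches 49 of 50 threats, yet fewer than 5% of its alerts are real. The tuned signature misses a few more threats, but roughly 31% of its alerts are real. Where the right balance sits depends on the environment and on how costly a miss would be.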

2. Lack of Context-Aware Analysis

Many security tools lack the contextual awareness necessary to differentiate between normal and suspicious activities. Without a nuanced understanding of an organization’s specific environment, these tools may flag routine actions as potential threats.
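As a rough illustration of what adding context can look like, the sketch below checks each alert against network ranges the organization already knows to be benign. The alert format, the ranges, and the function names are all assumptions made for this example, not the behavior of any specific product.

```python
import ipaddress

# Hypothetical context: ranges this organization knows are benign.
KNOWN_BENIGN_NETWORKS = [
    ipaddress.ip_network("10.20.0.0/16"),  # assumed internal vulnerability scanners
    ipaddress.ip_network("192.0.2.0/24"),  # assumed backup service
]

def is_expected_source(source_ip):
    """Return True if the source address falls in a known-benign range."""
    addr = ipaddress.ip_address(source_ip)
    return any(addr in net for net in KNOWN_BENIGN_NETWORKS)

def triage(alerts):
    """Split alerts into those needing review and those explained by context."""
    needs_review, explained = [], []
    for alert in alerts:
        if is_expected_source(alert["source_ip"]):
            explained.append(alert)
        else:
            needs_review.append(alert)
    return needs_review, explained

alerts = [
    {"rule": "port-scan", "source_ip": "10.20.3.7"},
    {"rule": "port-scan", "source_ip": "203.0.113.50"},
]
needs_review, explained = triage(alerts)
```

Here the scan from the internal scanner range is set aside as explained, while the scan from an unknown external address still goes to a human. A real deployment would likely log the explained alerts rather than discard them, since an allowlisted range can itself be compromised.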

3. Stale, Incomplete Internet Intelligence

Effective threat hunting relies heavily on the quality and relevance of internet intelligence. Outdated or incomplete intelligence feeds can lead to misinterpretations that result in false positives. Using internet intelligence sources that are complete, accurate, and up-to-date (for example, refreshed daily rather than weekly) is paramount to ensuring accuracy and reducing false positives.

In addition to the direct sources of internet intelligence threat hunters may rely on, it’s important to consider the intel that powers their threat hunting tools. Are the tools you’re using informed by reliable data?
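One simple way to act on the freshness point is to check how recently each intelligence record was observed before relying on it. The sketch below assumes a hypothetical record format with a `last_seen` timestamp and a one-day freshness window. Both are illustrative choices, not a reference to any particular feed.

```python
from datetime import datetime, timedelta, timezone

MAX_AGE = timedelta(days=1)  # assumed freshness window; adjust to your feed

def fresh_records(records, now, max_age=MAX_AGE):
    """Keep only records observed within max_age of now."""
    return [r for r in records if now - r["last_seen"] <= max_age]

# Fixed reference time so the example is reproducible.
now = datetime(2024, 1, 10, 12, 0, tzinfo=timezone.utc)
records = [
    {"indicator": "198.51.100.7",
     "last_seen": datetime(2024, 1, 10, 3, 0, tzinfo=timezone.utc)},
    {"indicator": "198.51.100.9",
     "last_seen": datetime(2024, 1, 2, 3, 0, tzinfo=timezone.utc)},
]
usable = fresh_records(records, now)
```

Only the record observed nine hours before `now` passes the check. The eight-day-old record is dropped, because the host behind that address may have changed hands or been reconfigured since it was last seen.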

False Positives Aren’t Inevitable

Threat hunters don’t have to accept that pervasive false positives “come with the territory.” By relying on fresh, complete internet intelligence and using tools capable of context-aware analysis, you can keep false positives from perpetually plaguing your threat hunting investigations.

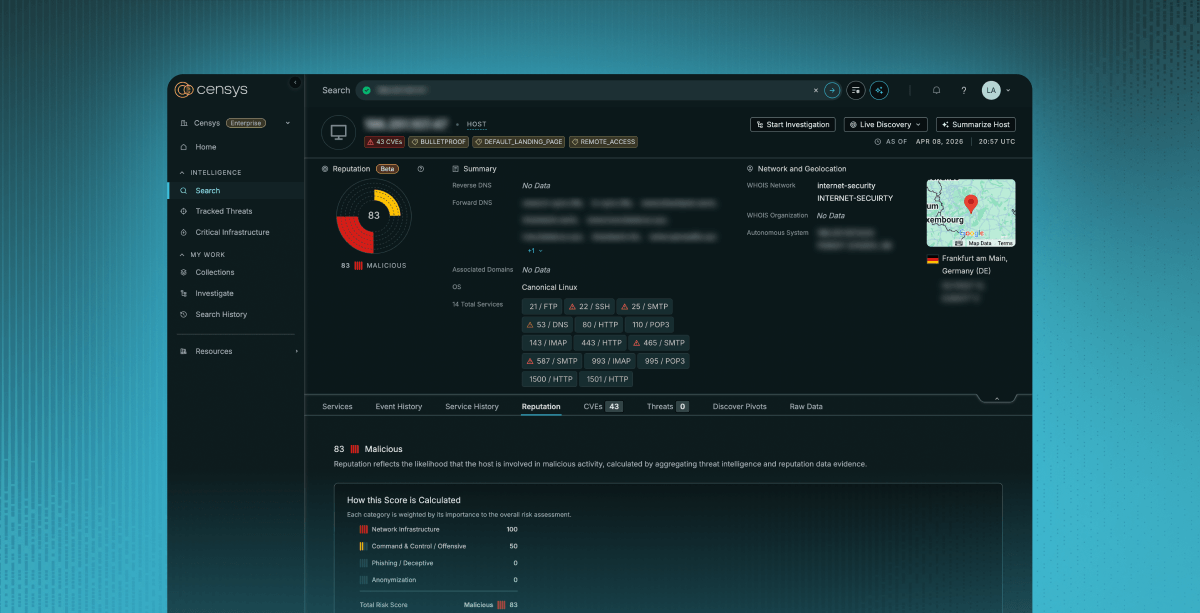

Learn more about how Censys’ proprietary, industry-leading internet intelligence helps reduce the likelihood of false positives.