TL;DR Findings

In under an hour, using Censys data, we found 7,640 potential S3 buckets: 49 completely open (world read and write), 16 with anonymous write access, 1,235 with anonymous read access, and more.

Finding S3 Targets With Censys

Amazon S3 (Amazon Simple Storage Service) provides object storage over the web and is used by countless individuals and organizations worldwide. It is easy to use, but unfortunately it is just as easy to misconfigure. On December 20th, 2022, news broke that educational publisher McGraw Hill’s S3 buckets had been exposed to the internet because of misconfiguration, leaving the personal information of over 100,000 students up for grabs by hackers. And this isn’t a rare occurrence; it happens over and over again.

While Censys does not currently have a native S3 scanner, we can still feed the data we collect into other tools. When a user creates a bucket, it is given a unique name that becomes part of a DNS hostname; for example, an S3 bucket named “censys” would be reachable at “censys.s3.amazonaws.com”. So before we can determine whether an S3 bucket is publicly accessible, we must know the name given to the bucket itself.
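As a minimal sketch of that mapping, assuming only the global virtual-hosted-style endpoint used in the example above, the two directions look like this in Python. The helper names are hypothetical, and regional endpoint variants are deliberately left out.

```python
from typing import Optional

# Global virtual-hosted-style endpoint, as in the "censys" example.
# Regional endpoints (for example <bucket>.s3.<region>.amazonaws.com) are not handled here.
GLOBAL_SUFFIX = ".s3.amazonaws.com"


def bucket_hostname(bucket: str) -> str:
    """Map a bucket name to its global virtual-hosted-style hostname."""
    return bucket + GLOBAL_SUFFIX


def bucket_from_hostname(hostname: str) -> Optional[str]:
    """Invert bucket_hostname(): return the bucket name, or None if not an S3 host."""
    # DNS names are case-insensitive; also drop a trailing root dot if present.
    host = hostname.lower().rstrip(".")
    if not host.endswith(GLOBAL_SUFFIX):
        return None
    name = host[: -len(GLOBAL_SUFFIX)]
    return name or None


print(bucket_hostname("censys"))                        # censys.s3.amazonaws.com
print(bucket_from_hostname("censys.s3.amazonaws.com"))  # censys
```

The inverse direction is the one that matters for discovery: any hostname of this shape seen in scan data reveals a candidate bucket name.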

Many security tools can help users find open S3 buckets, but one of the more challenging parts is discovering targets (the bucket names). A common approach is brute force: generating random combinations of words and requesting each candidate name from AWS. This is how many malicious attackers find the low-hanging fruit of insecure S3 buckets.
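To illustrate why brute forcing is noisy, here is a minimal Python sketch of the candidate-generation half of that approach. It only builds strings and makes no requests; the function names and word list are hypothetical, and the validity check implements a simplified subset of the S3 bucket naming rules (3 to 63 characters; lowercase letters, digits, dots, and hyphens; starting and ending with a letter or digit; no adjacent dots).

```python
import itertools
import re
from typing import Iterable, Iterator, Sequence

# Simplified subset of the S3 naming rules: 3-63 chars of [a-z0-9.-],
# first and last character a letter or digit.
BUCKET_RE = re.compile(r"^[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]$")


def is_plausible_bucket_name(name: str) -> bool:
    """Return True if name passes the simplified naming checks above."""
    return bool(BUCKET_RE.match(name)) and ".." not in name


def candidate_names(
    words: Iterable[str], separators: Sequence[str] = ("", "-", ".")
) -> Iterator[str]:
    """Yield every ordered pair of distinct words joined by each separator."""
    for first, second in itertools.permutations(list(words), 2):
        for sep in separators:
            name = f"{first}{sep}{second}".lower()
            if is_plausible_bucket_name(name):
                yield name


names = list(candidate_names(["acme", "backup", "prod"]))
print(len(names), names[:3])
```

With n words and three separators this produces up to 3 × n × (n − 1) candidates, every one of which would still have to be requested from AWS, and most of which will not exist.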

Since Censys has scanned and indexed a massive number of hosts on the internet (around 276 million hosts and 2.1 billion services at the time of writing), we can mine this data for potential S3 bucket names and feed them into the open-source tool S3Scanner to determine what kinds of access are available.

First, we wrote a SQL query that selected every indexed HTTP response body containing the string “s3” and dumped those bodies, together with metadata about the hosts that served them, into a separate database. We then wrote a Python script that iterated over each response body, parsed out S3-style URLs to extract bucket names, and wrote those names to a file.
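The original script is not reproduced here, but its parsing step can be sketched in Python as below. This is an illustration under assumptions: the regular expressions are our own approximation of “S3-like URLs” (virtual-hosted-style hostnames, path-style URLs, and `s3://` URIs), `extract_bucket_names` and `write_bucket_file` are hypothetical helper names, and the input is assumed to be an iterable of response-body strings already pulled from the query results.

```python
import re
from typing import Iterable, Set

# Candidate bucket name: 3-63 chars of [a-z0-9.-], starting/ending with a letter or digit.
_NAME = r"([a-z0-9][a-z0-9.-]{1,61}[a-z0-9])"
# S3 endpoint: s3.amazonaws.com, s3-<region>.amazonaws.com, s3.<region>.amazonaws.com, etc.
_ENDPOINT = r"s3[.-](?:[a-z0-9-]+\.){0,2}amazonaws\.com"

PATTERNS = [
    # Virtual-hosted style: <bucket>.s3[...].amazonaws.com
    re.compile(_NAME + r"\." + _ENDPOINT),
    # Path style: s3[...].amazonaws.com/<bucket>. The lookbehind skips endpoints
    # that are part of a virtual-hosted hostname, so the object key isn't captured.
    re.compile(r"(?<![a-z0-9.-])" + _ENDPOINT + r"/" + _NAME),
    # S3 URI scheme: s3://<bucket>
    re.compile(r"s3://" + _NAME),
]


def extract_bucket_names(body: str) -> Set[str]:
    """Return the set of candidate bucket names found in one HTTP body."""
    text = body.lower()  # hostnames are case-insensitive
    names: Set[str] = set()
    for pattern in PATTERNS:
        names.update(m.group(1) for m in pattern.finditer(text))
    return names


def write_bucket_file(bodies: Iterable[str], path: str) -> int:
    """Deduplicate names across all bodies, write one per line, return the count."""
    all_names: Set[str] = set()
    for body in bodies:
        all_names |= extract_bucket_names(body)
    with open(path, "w", encoding="utf-8") as fh:
        for name in sorted(all_names):
            fh.write(name + "\n")
    return len(all_names)
```

The lookbehind in the path-style pattern keeps a URL such as `assets.s3.amazonaws.com/logo.png` from being misread as a bucket named `logo.png`. Some false positives will still get through, so the scanning step should be expected to filter out names that turn out not to exist.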

Once this script was finished running, we used the output file containing just the bucket names as input to the S3Scanner tool:

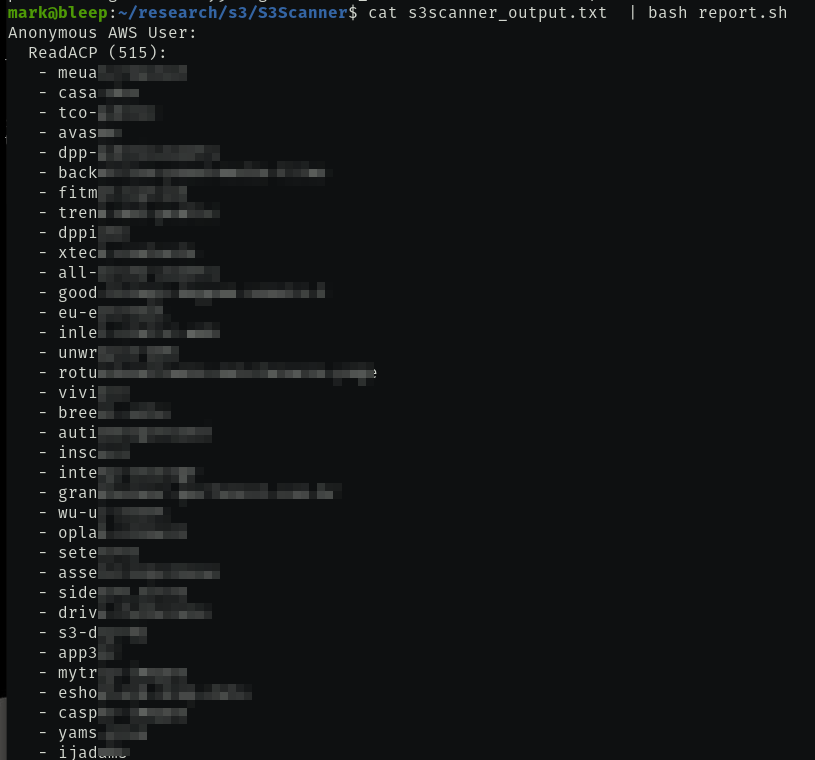

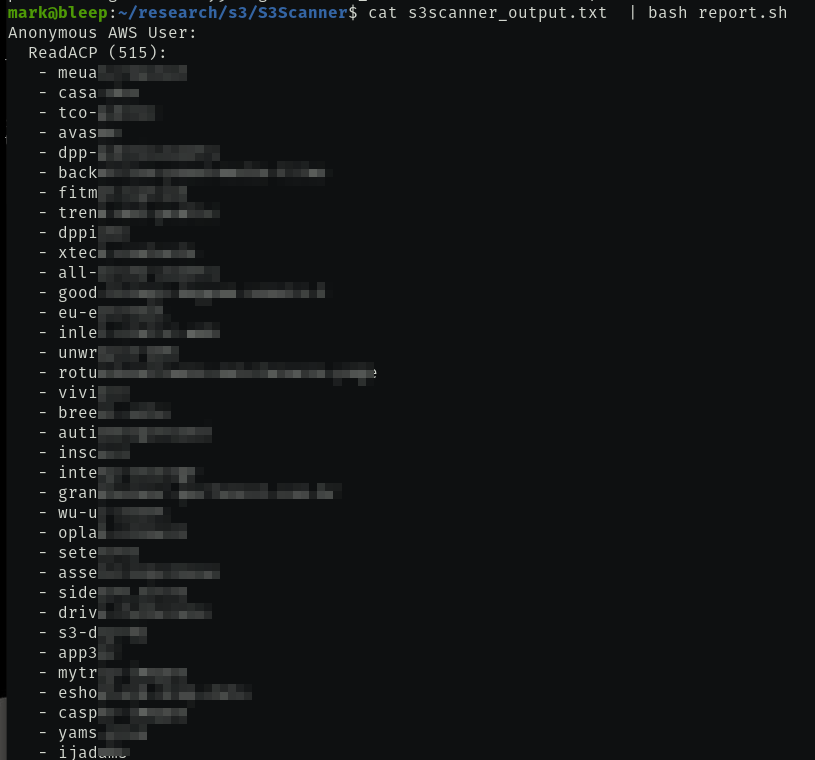

Finally, to generate a report from S3Scanner’s output, we wrote a small Bash script that processes the data and produces an aggregated view of the results.
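A rough Python equivalent of that aggregation step is sketched below; it is not the Bash script used for this post. S3Scanner’s output format differs between versions, so the sketch assumes the results have already been normalized into a hypothetical tab-separated form: one bucket per line, followed by a comma-separated list of the permissions granted to anonymous users (`READ`, `WRITE`, `READ_ACP`, `WRITE_ACP`).

```python
from collections import Counter
from typing import Dict, Iterable, Set

# Anonymous-user permissions; holding all four is equivalent to full control.
ALL_PERMS = {"READ", "WRITE", "READ_ACP", "WRITE_ACP"}
# Hypothetical severity order used to place each bucket in exactly one category.
SEVERITY = ["WRITE_ACP", "WRITE", "READ_ACP", "READ"]


def parse_results(lines: Iterable[str]) -> Dict[str, Set[str]]:
    """Parse hypothetical 'bucket<TAB>PERM,PERM' lines into bucket -> permissions."""
    results: Dict[str, Set[str]] = {}
    for line in lines:
        line = line.rstrip("\r\n")
        if not line.strip():
            continue  # skip blank lines
        bucket, _, perms = line.partition("\t")
        results[bucket.strip()] = {p.strip() for p in perms.split(",") if p.strip()}
    return results


def categorize(perms: Set[str]) -> str:
    """Return the single most severe category a bucket falls into."""
    if ALL_PERMS <= perms:
        return "FULL_CONTROL"
    for perm in SEVERITY:
        if perm in perms:
            return perm
    return "PRIVATE"


def summarize(results: Dict[str, Set[str]]) -> Counter:
    """Count buckets per category; each bucket is counted exactly once."""
    return Counter(categorize(perms) for perms in results.values())


sample = [
    "open-bucket\tREAD,WRITE,READ_ACP,WRITE_ACP",
    "upload-drop\tWRITE",
    "public-assets\tREAD",
    "private-logs\t",
]
for category, count in summarize(parse_results(sample)).most_common():
    print(f"{category:<13} {count}")
```

Counting each bucket once under its most severe category keeps the totals from overlapping. This is one possible convention, and the ordering in `SEVERITY` is a judgment call rather than anything S3Scanner defines.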

Findings

Without resorting to random name generation, we identified 7,640 potential S3 targets in a short time. Running S3Scanner against these names produced the following results:

- 49 S3 buckets allowed anonymous users complete control over the bucket (Read/Write/ACP Read/ACP Write)

- 16 S3 buckets allowed anonymous users to write to the bucket

- 18 S3 buckets allowed anonymous users to write to the Access Control Policy (ACP)

- 515 S3 buckets allowed anonymous users to read the ACP

- 1,235 S3 buckets were world-readable

What can be done?

Many great articles on the internet discuss other ways to analyze and improve the security of S3:

- Amazon’s S3 Best Practices

- SC Magazine’s article on Nine ways to secure AWS S3

- Trend Micro’s Best Practices for Secure S3

- NetApp’s article on How to Find Open Buckets and Keep Them Safe

References

- Censys Search Query

- McGraw Hill’s S3 buckets Exposed Article

- S3Scanner

- Censys Python API

- Finding And Exploiting S3 Amazon Buckets For Bug Bounties

- GrayHatWarfare (A search engine for open buckets)