Executive Summary

- Google and Cloudflare recently released reports of a new denial-of-service attack dubbed “Rapid Reset,” which abuses HTTP/2’s stream cancellation feature.

- This technique lets attackers repeat a “request, cancel” pattern at scale to produce extremely high-volume denial-of-service attacks. Servers running HTTP/2 that proxy traffic to backend servers are particularly susceptible.

- Over 555 million hosts on the Internet currently appear to support HTTP/2 and may therefore be vulnerable to this attack.

- While no vendor fixes were available at the time of writing, administrators with HTTP/2-enabled servers that proxy requests to a backend server can temporarily disable HTTP/2 on the frontend until vendors release fixes.

Looking Closer at the Attack Mechanics

On October 10, 2023, Google and Cloudflare released reports describing abuse of a specific feature of the HTTP/2 protocol (tracked as CVE-2023-44487) that increases load and can cause a denial of service on servers that cannot keep up with many concurrent requests.

Their reports describe how an attacker can circumvent the limit an HTTP/2 server places on the number of concurrent requests (streams) per client connection, which the server advertises via the SETTINGS_MAX_CONCURRENT_STREAMS setting. The attacker cancels each request with an RST_STREAM frame immediately after sending it. A canceled stream no longer counts toward the limit, so the server’s count of active streams drops right back down after every cancellation. As a result, the attacker can send an effectively unlimited stream of new requests without ever hitting the limit.
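To make the accounting concrete, the following Python sketch models a single connection’s stream counter. It is a simplified illustration, not code from any real server: the names `ConnectionState`, `greedy_client`, and `rapid_reset_client` are hypothetical, frames are reduced to method calls, and the limit of 100 simply mirrors the stream count in Google’s example. A client that never cancels is capped at 100 in-flight requests, while a client that resets each stream right after opening it gets every request accepted.

```python
# Simplified model of HTTP/2 per-connection stream accounting.
# All names are hypothetical; real servers track far more state per stream.


class ConnectionState:
    """Counts active streams on one connection against a concurrency limit."""

    def __init__(self, max_concurrent_streams: int = 100) -> None:
        self.max_concurrent_streams = max_concurrent_streams
        self.active = 0    # streams currently counted against the limit
        self.accepted = 0  # requests the server started working on
        self.refused = 0   # requests rejected because the limit was reached

    def on_headers(self) -> bool:
        """Client opens a new stream with a request (a HEADERS frame)."""
        if self.active >= self.max_concurrent_streams:
            # A real server would reset this stream (e.g. with REFUSED_STREAM).
            self.refused += 1
            return False
        self.active += 1
        self.accepted += 1  # the server begins processing the request here
        return True

    def on_rst_stream(self) -> None:
        """Client cancels a stream (an RST_STREAM frame); it stops counting at once."""
        if self.active > 0:
            self.active -= 1


def greedy_client(conn: ConnectionState, n: int) -> None:
    """Sends n requests without cancelling any of them (responses not modeled)."""
    for _ in range(n):
        conn.on_headers()


def rapid_reset_client(conn: ConnectionState, n: int) -> None:
    """Sends n requests, cancelling each one immediately after opening it."""
    for _ in range(n):
        if conn.on_headers():
            conn.on_rst_stream()


if __name__ == "__main__":
    normal = ConnectionState()
    greedy_client(normal, 1000)
    print("without resets:", normal.accepted, "accepted,", normal.refused, "refused")

    attack = ConnectionState()
    rapid_reset_client(attack, 1000)
    print("with resets:", attack.accepted, "accepted,", attack.refused, "refused")
```

Running it reports 100 accepted and 900 refused without resets, versus 1000 accepted and 0 refused with resets, even though the resetting client never has more than one stream open at a time.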

A well-optimized web server where the HTTP/2 service interfaces directly with the client and no external data is retrieved by business logic (that is, a server that does not forward or proxy requests to another server) will see some increase in utilization, but likely nothing it cannot handle. The problem becomes more apparent when the HTTP/2 server proxies each request over a network connection (for example, an Nginx server proxying connections to an API service running on Apache).

In this scenario, the proxy has to buffer the request data and send it to a backend service, which must then process that data and send a response back to the HTTP/2 server. While the proxy waits on those responses, it holds memory and other resources for each in-flight request that have yet to be released.

In short, a client sends a valid HTTP/2 request; the HTTP/2 server parses it, forwards it to a backend server, and waits for a reply. The client, however, cancels the request immediately, so the HTTP/2 server must also cancel the request to the backend, but by then the data and resources have already been allocated and used. And because each cancellation frees up a slot under the concurrent-stream limit, nothing stops an effectively unlimited number of new requests from coming in.
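The proxy side of the problem can be sketched with Python’s `asyncio`. This is a toy model under loud assumptions: `proxy_stream` and `backend_handler` are hypothetical stand-ins, `asyncio.sleep` substitutes for real backend work, and cancelling a task stands in for the proxy reacting to the client’s RST_STREAM. It shows the backend starting work on every request even though none of them completes and the client receives no responses.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class BackendStats:
    started: int = 0    # requests the backend began processing
    completed: int = 0  # requests the backend finished


async def backend_handler(stats: BackendStats, work_seconds: float) -> str:
    """Hypothetical backend: resources are committed as soon as work starts."""
    stats.started += 1
    await asyncio.sleep(work_seconds)  # stand-in for real backend work
    stats.completed += 1
    return "response"


async def proxy_stream(stats: BackendStats, work_seconds: float) -> str:
    """Hypothetical proxy: request already parsed, forwarded upstream right away."""
    return await backend_handler(stats, work_seconds)


async def rapid_reset(num_requests: int, work_seconds: float = 0.05):
    stats = BackendStats()
    responses = 0
    for _ in range(num_requests):
        task = asyncio.create_task(proxy_stream(stats, work_seconds))
        await asyncio.sleep(0)  # one scheduler turn: the proxy forwards the request
        task.cancel()           # client's RST_STREAM arrives; proxy cancels upstream
        try:
            await task
            responses += 1
        except asyncio.CancelledError:
            pass                # the client never sees a response
    return stats, responses


if __name__ == "__main__":
    stats, responses = asyncio.run(rapid_reset(1000))
    print(f"backend started {stats.started}, completed {stats.completed}, "
          f"client responses {responses}")
```

In this model the backend’s work is abandoned as soon as the task is cancelled. A real backend reached over a separate connection may keep processing a request after the proxy has given up on it, which would make the wasted work larger, not smaller.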

Google’s blog post explains how HTTP/2 multiplexing alone already multiplies the load a single connection can generate:

“…the client can open 100 streams and send a request on each of them in a single round trip; the proxy will read and process each stream serially, but the requests to the backend servers can again be parallelized. The client can then open new streams as it receives responses to the previous ones. This gives an effective throughput for a single connection of 100 requests per round trip, with similar round trip timing constants to HTTP/1.1 requests. This will typically lead to almost 100 times higher utilization of each connection.”
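The arithmetic behind this quote can be checked with a few lines of Python. The 50 ms round-trip time, the 10 Mbit/s client upload rate, and the 20-byte compressed request header block are illustrative assumptions, not measured values; the 9-byte frame header and the 4-byte RST_STREAM payload come from the HTTP/2 framing rules. The last figure is only a rough client-side bound for Rapid Reset: a client that never waits for responses is limited by how fast it can send frames rather than by the round-trip time.

```python
# Back-of-the-envelope throughput for a single connection.
# RTT, upload rate, and header-block size are illustrative assumptions.

FRAME_HEADER_BYTES = 9  # every HTTP/2 frame starts with a 9-byte header
RST_PAYLOAD_BYTES = 4   # RST_STREAM carries a 4-byte error code


def requests_per_second(requests_per_round_trip: int, rtt_seconds: float) -> float:
    """Throughput when the client waits one round trip per batch of requests."""
    return requests_per_round_trip / rtt_seconds


def rapid_reset_rps(upload_bytes_per_second: float, header_block_bytes: int = 20) -> float:
    """Rough bound when the client never waits: each request costs one
    HEADERS frame (no padding or priority fields) plus one RST_STREAM frame."""
    bytes_per_request = (FRAME_HEADER_BYTES + header_block_bytes) + (
        FRAME_HEADER_BYTES + RST_PAYLOAD_BYTES
    )
    return upload_bytes_per_second / bytes_per_request


RTT_SECONDS = 0.05                   # assumed 50 ms round trip
UPLOAD_BYTES_PER_SECOND = 1_250_000  # assumed 10 Mbit/s client upload

http1 = requests_per_second(1, RTT_SECONDS)    # one request in flight
http2 = requests_per_second(100, RTT_SECONDS)  # 100 concurrent streams
rapid = rapid_reset_rps(UPLOAD_BYTES_PER_SECOND)

print(f"HTTP/1.1:    {http1:,.0f} requests/s")
print(f"HTTP/2:      {http2:,.0f} requests/s")
print(f"Rapid Reset: {rapid:,.0f} requests/s (upper bound)")
```

Under these assumptions the script prints 20, 2,000, and 29,762 requests per second, respectively. The exact numbers matter less than the shape: HTTP/2 multiplies per-connection throughput by the stream limit, and resetting streams removes the wait for responses altogether.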

The attack, aptly named HTTP/2 “Rapid Reset” because clients reset each request they send, can in principle affect any HTTP/2 implementation: it exploits a weakness in the protocol itself rather than in a specific vendor or product. Any product that currently supports HTTP/2 is therefore potentially susceptible.

Put simply, this attack places heavy pressure on the path between frontend servers and the backend servers they communicate with, as in heavily proxied environments. This is why most reporting on this attack is coming from service providers that deal heavily in load balancing and HTTP proxying, such as Cloudflare and Google.

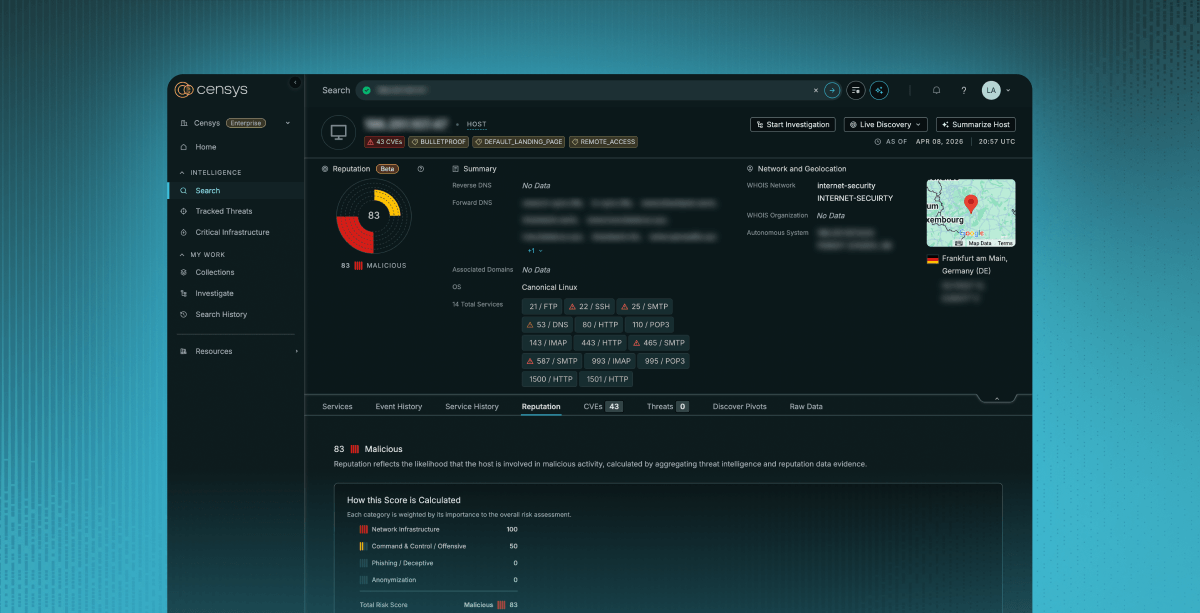

What Censys Sees

Censys can determine whether an HTTP service can accept and process incoming HTTP/2 requests, but has no extra information about the underlying implementation beyond what the server itself reports (for example, a Server header identifying it as Apache or Nginx). There are no concrete patches or workarounds at the time of writing. We expect the fix to come not as a change to the protocol but as workarounds in individual HTTP/2 implementations. Either way, those implementations will be modified, and host owners will need to update their servers.

Because HTTP/2 has no built-in version or implementation identifier, Censys will still only be able to tell whether a service supports HTTP/2, even after fixes or workarounds are deployed.
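One common way to check whether a TLS endpoint will speak HTTP/2 is to offer `h2` during ALPN negotiation and see whether the server selects it. The Python sketch below does this with the standard `ssl` module; it is not necessarily how Censys performs its scans, and the function names are hypothetical. It only covers HTTP/2 over TLS (cleartext `h2c` upgrades are not detected), and a positive result says nothing about whether the server has been patched.

```python
import socket
import ssl
from typing import Optional


def negotiated_alpn(host: str, port: int = 443, timeout: float = 5.0) -> Optional[str]:
    """Offer h2 and http/1.1 via ALPN and return the protocol the server picks."""
    context = ssl.create_default_context()  # also verifies the server certificate
    context.set_alpn_protocols(["h2", "http/1.1"])
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            # None means the server did not negotiate any ALPN protocol.
            return tls.selected_alpn_protocol()


def supports_h2(host: str, port: int = 443, timeout: float = 5.0) -> bool:
    """True if the server selects HTTP/2 during the TLS handshake."""
    return negotiated_alpn(host, port, timeout) == "h2"
```

For example, `supports_h2("example.com")` (a placeholder host) returns `True` only if the TLS handshake selects `h2`; connection and certificate errors propagate as exceptions rather than being reported as "no HTTP/2".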

As of October 10, 2023, Censys observed 556.3M hosts running 719.8M HTTP/2-enabled services. Note that this count includes HTTP services that are able to upgrade to HTTP/2, whether or not clients are currently using HTTP/2 on them.

Top 10 Autonomous Systems

| ASN | Autonomous System | Host Count | Service Count |
|---|---|---|---|
| 16509 | AMAZON-02 | 73.4M | 85.4M |
| 46606 | UNIFIEDLAYER-AS-1 | 29.2M | 57.5M |
| 63949 | AKAMAI-LINODE-AP Akamai Connected Cloud | 25.8M | 45.7M |
| 13335 | CLOUDFLARENET | 39.8M | 40.3M |
| 53831 | SQUARESPACE | 33.7M | 33.7M |
| 14618 | AMAZON-AES | 23.6M | 26.1M |
| 24940 | HETZNER-AS | 14.2M | 19.6M |
| 19871 | NETWORK-SOLUTIONS-HOSTING | 9.1M | 17.8M |
| 16276 | OVH | 10.7M | 15.9M |
| 47846 | SEDO-AS | 13.7M | 13.7M |

Top 10 Countries

| Country | Host Count | Service Count |
|---|---|---|
| United States | 295.5M | 391.1M |
| Germany | 53.2M | 60.9M |
| China | 19.6M | 29.5M |
| France | 13.7M | 19.7M |
| Netherlands | 13.6M | 18.8M |
| Canada | 16.9M | 18.5M |
| United Kingdom | 10.7M | 13.9M |
| Russia | 12.7M | 13.5M |
| Singapore | 7.3M | 9.3M |
| Japan | 8.3M | 9.0M |

To find hosts and services which run HTTP/2, we can use the following queries:

Censys Search

Censys EM Platform

- web_entity.instances.http.supports_http2: "true"

What Can Be Done?

- Assess the extent of your HTTP/2 server exposure and reduce your attack surface. If you have an HTTP/2-enabled server that proxies requests to a backend server, one stopgap is to temporarily disable HTTP/2 on the frontend until vendors release fixes.

- Countermeasures that the affected cloud providers have reported as effective include a combination of:

  - Using existing DDoS protection methods and services.

  - Applying vendor software patches that may make your HTTP/2-enabled web servers more resilient.
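As an illustration of the kind of server-side hardening a patch can add, the sketch below tracks how many streams a single connection cancels within a sliding time window and flags the connection once it exceeds a threshold, at which point a server could send GOAWAY and close it. The class name, the default of 100 resets per second, and the injectable clock are hypothetical choices for this example; real vendor fixes use their own heuristics, so the patches your server vendor ships remain the primary fix.

```python
import time
from collections import deque
from typing import Callable, Deque


class ResetRateLimiter:
    """Hypothetical per-connection guard against excessive stream resets."""

    def __init__(
        self,
        max_resets: int = 100,
        window_seconds: float = 1.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.max_resets = max_resets
        self.window_seconds = window_seconds
        self.clock = clock
        self._resets: Deque[float] = deque()  # timestamps of recent resets

    def record_reset(self) -> bool:
        """Record one client RST_STREAM; return True if the connection
        has exceeded the threshold and should be closed (e.g. via GOAWAY)."""
        now = self.clock()
        self._resets.append(now)
        # Drop resets that have fallen out of the sliding window.
        while self._resets and now - self._resets[0] > self.window_seconds:
            self._resets.popleft()
        return len(self._resets) > self.max_resets


if __name__ == "__main__":
    limiter = ResetRateLimiter(max_resets=100, window_seconds=1.0)
    flagged = [limiter.record_reset() for _ in range(150)]
    print("first flagged at reset #", flagged.index(True) + 1)
```

A sliding window keeps legitimate clients, which cancel streams only occasionally (for example when a user navigates away), well under the threshold, while a Rapid Reset client that cancels nearly every stream trips it within a fraction of a second.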