I spent the last few years of my career writing kernel-level detections for an EDR product. These rules ran across hundreds of thousands of devices.

I fell in love with the Research <-> Detection Engineering pipeline. But the detections that ship inside security products are not the same detections your organization must write.

Vendor-shipped detections tend to have a few problems:

- Obfuscated – Very few vendors, save for outliers like Elastic, are transparent about their coverage. How many rules are under the hood? How voluminous is the threat intelligence powering those detections? Does my EDR vendor buy premium intelligence “for me”, or are they just lucky to snag the IOCs from reports? Should CrowdStrike transparently advertise all they cover and where their gaps are, anyway? Probably not.

- Too narrow – These products cannot produce a billion disruptive FPs, full stop. The YARA signatures running on every MacBook are very narrow. Vendors have to ship tight detections if they’re going to take automatic action, like quarantining files or appending to a firewall blocklist.

- Too broad – Hey, you just said they were too narrow! Well, when it comes to informational/low sev alerts, these products will hit your SIEM billing ceiling. It’s up to you to triage and tune.

TL;DR: Cyber product vendors have to cover broadly, but not too aggressively, and slightly opaquely. You have to cover everything, everywhere, all at once. These incentives and needs are not perfectly aligned.

Considering these factors alone, it seems inevitable that detection engineering would balloon as a SecOps function within every organization.

The Day-Zero Normal CISO field brief by Rob Fuller suggests that, post-AI, it’s “critical” that Detection Engineering move left of EDR.

Censys can help. Start with ground-truth Internet infrastructure intelligence. Write detections in our platform and consume the results anywhere, or take the data back to your own workshop and incorporate it into existing practices.

What do we mean by detection engineering?

Detection engineering is the practice of turning threat intelligence, organizational risk, and available telemetry into durable logic that helps your team find malicious or suspicious activity.

In an enterprise or government setting, that means building detections for your environment and adversaries, not some abstract “average customer”.

Your critical systems, exposed services, users, vendors, crown jewels, adversary model, and tolerance for noise are unique.

The goal: convert what you know about threats and yourself into alerts, hunts, automations, and response actions that improve your ability to defend beyond the guardrails you buy.

Where to engineer these detections anyway?

Historic answer: wherever security teams can turn telemetry, threat intelligence, and business context into logic!

That always meant SIEM, SIEM, SIEM. Write a correlation rule, match on logs, generate an alert, and route it to triage.

That still matters, but modern detection engineering is much broader. Teams now build detections in SIEMs, SOAR playbooks, TIPs, EDR/XDR platforms, NDR tools, cloud security products, identity threat detection systems, vulnerability and exposure management workflows, and every flavor of custom pipeline.

Some detections are classic rules. Some are scheduled hunts. Some are canary tokens. Some are automated response conditions. Some are just well-structured queries that run every hour and create tickets when the world changes.

The important shift is that detection engineering is moving closer to the point of attacker contact. Waiting for endpoint execution is often too late.

EDR still matters, but it is no longer the only center of gravity. A strong program detects newly exposed services, strange DNS resolution, risky cloud behavior, suspicious code changes, and attacker-controlled Internet infrastructure before those signals collapse into a malware execution event on a laptop.

One rule might live in Splunk or Sentinel. Another might be a SOAR automation that enriches an IP before escalating. A YARA rule on an endpoint scanner. A Python job that inspects newly observed destination IPs from proxy logs. A Censys Collection that tracks adversary infrastructure and feeds detections into the rest of the stack. On and on. The DE canvas has expanded.

The job is not to worship any one control plane. The job is to put the right detection logic in the place where it has the best chance of firing early, producing useful context, and driving the right action.
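To make one of those examples concrete, here is a minimal sketch of the "Python job that inspects newly observed destination IPs from proxy logs". The function name, the input shapes, and the idea of keeping a set of previously seen IPs are all assumptions for illustration, not a reference to any particular product.

```python
from __future__ import annotations

import ipaddress


def newly_observed_ips(seen_before: set[str], todays_destinations: list[str]) -> list[str]:
    """Return public destination IPs not seen before, in first-seen order.

    `seen_before` and `todays_destinations` are hypothetical inputs: a set of
    IPs from earlier runs and the raw destination column from today's logs.
    """
    fresh: list[str] = []
    for raw in todays_destinations:
        try:
            ip = ipaddress.ip_address(raw.strip())
        except ValueError:
            continue  # skip hostnames and malformed values
        key = str(ip)
        # is_global filters out RFC 1918, loopback, and other non-public ranges
        if ip.is_global and key not in seen_before and key not in fresh:
            fresh.append(key)
    return fresh
```

The output is the list you would hand to an enrichment step before anything becomes an alert.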

How can Censys Internet intelligence help?

In plain English, “Internet intelligence” means turning an external IOC into a full infrastructure profile.

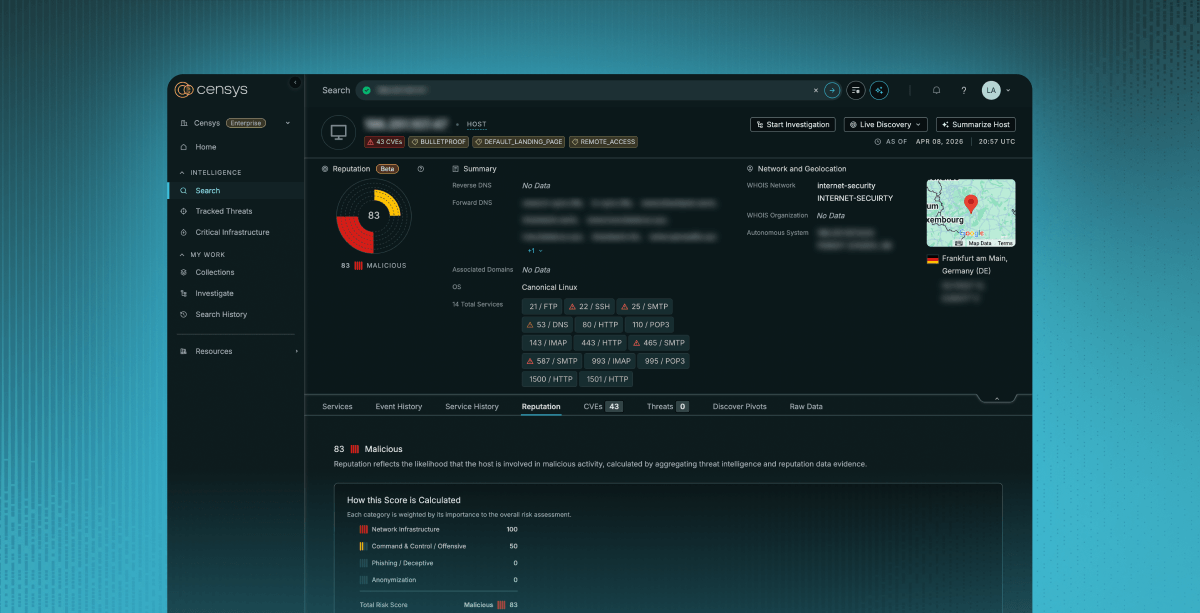

Censys starts with a global Internet Map: raw observations of hosts, services, ports, protocols, certificates, DNS, software, banners, hosting, and historical change. Then we layer on threat and vulnerability intelligence from Censys ARC, down to a numeric risk score.

Whether an analyst or AI copilot is crunching this raw data, they can answer:

- Can I turn {random alert indicator} into reusable detection logic?

An IP, domain, certificate, hostname, URL, ASN, or service may be more than a one-time IOC. Censys helps determine whether it points to a broader infrastructure pattern worth detecting again.

- What infrastructure traits make this suspicious?

Instead of matching only on a domain or IP, detection engineers can look for exposed services, certs, banners, software, hosting patterns, remote access tools, phishing kits, C2 panels, known hacktools, or even just high risk scores.

- Can I move up the Pyramid of Pain and write a higher-fidelity rule from this pattern?

Bye, brittle indicators. Hello, strong signals! Think shared certificates, repeated fingerprints, uncommon protocol combinations, threat-labeled services, or domain-to-host relationships confirmed through active DNS resolution. These sit higher on the Pyramid of Pain because they describe attacker infrastructure, tooling, and tradecraft, not just the disposable addresses they use today.

- Does this rule need tuning?

Detection engineers are constantly tuning noise. Maybe you need to distinguish a parked/sinkholed domain from one that currently resolves to live infrastructure. Or maybe you need to make some adjustments once the threat actor has moved on from that repeated fingerprint you discovered.

- Can I detect the infrastructure before it appears in my environment?

This is “moving left.” Detection engineering does not have to wait for EDR, proxy, DNS, or IAM logs to show contact with malicious infrastructure. Censys can identify exposed attacker infrastructure, risky services, suspicious certificates, dangling DNS, or newly observed domain-to-host relationships on the public Internet. Those can become detections, watchlists, blocklists, hunts, or enrichment logic before your telemetry sees the first hit.

Internet intelligence turns external infrastructure from “random alert field” into detection logic your team can reuse.

How can I leverage Censys data?

Censys helps detection engineers with three principal “features”.

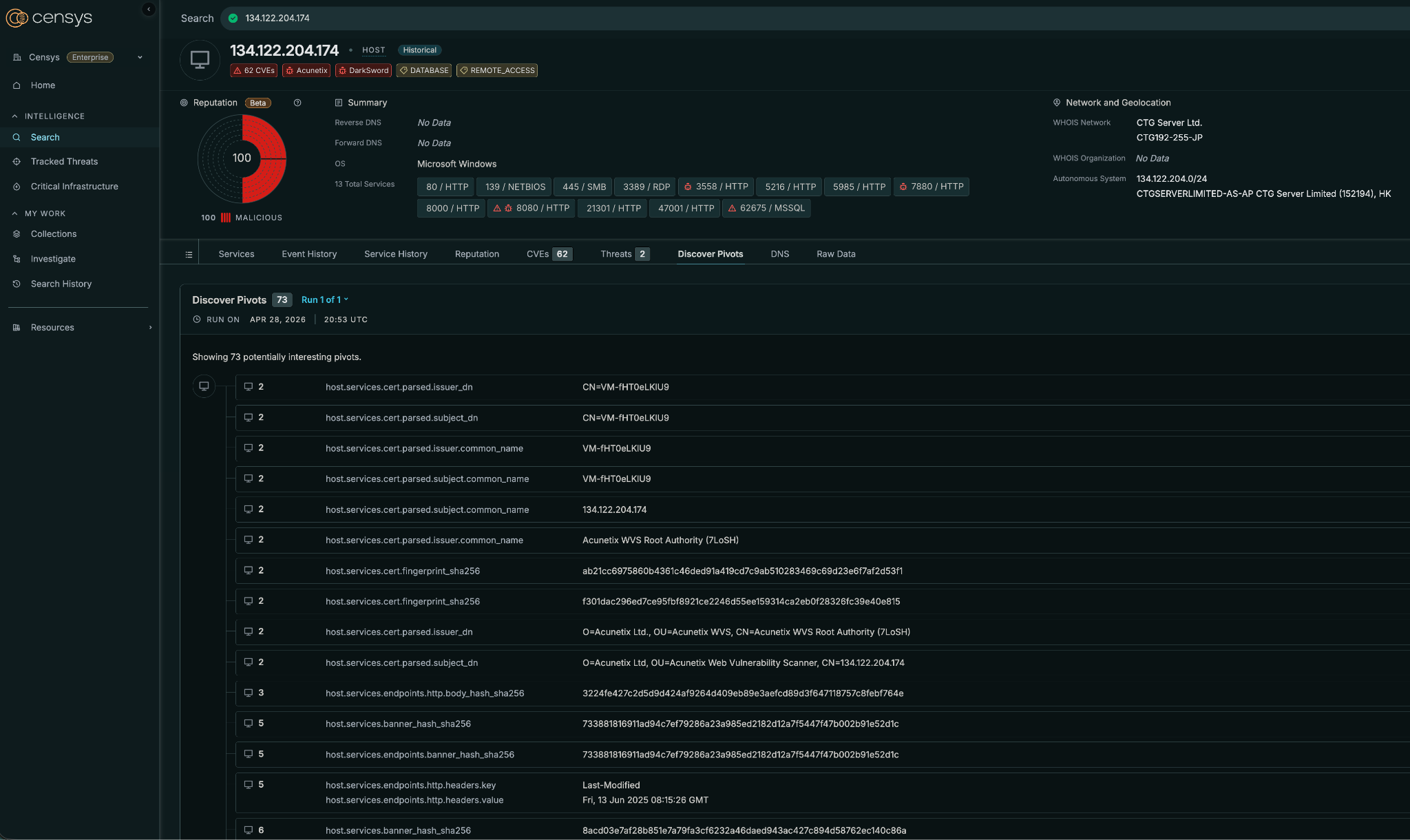

- Pivot from one observable to related infrastructure.

- Rescan hosts to confirm what is live right now.

- Monitor infrastructure over time with Collections.

Then – operationalize detections wherever your team already works. That may mean using the Censys Platform directly, enriching alerts through an integration, calling the API from a SOAR playbook, using an SDK in a custom pipeline, connecting an MCP-enabled assistant, or exporting data out of Censys entirely.

The Rundown:

Pivots

Pivots help turn a single alert indicator into a broader infrastructure pattern. Start with an IP in an EDR alert, a domain from a proxy log, or a service fingerprint, then pivot into related infrastructure traits. This is how brittle IOCs become reusable detection logic. Adversaries recycle quickly, but not always intelligently. One common cert name may hide 200+ phishing IPs for your blocklists.
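As a rough illustration of the pivot idea, the sketch below groups already-exported host records by a shared trait such as a certificate name. The record shape (dicts with an "ip" key plus trait keys like "cert_name") is a made-up example, not the Censys export format.

```python
from __future__ import annotations

from collections import defaultdict


def cluster_by_trait(records: list[dict], trait: str, min_size: int = 2) -> dict[str, list[str]]:
    """Group host IPs by a shared trait value and keep clusters of min_size or more.

    `records` is a hypothetical list of dicts, each with an "ip" key and
    optional trait keys (for example "cert_name").
    """
    clusters: defaultdict[str, set[str]] = defaultdict(set)
    for record in records:
        value = record.get(trait)
        if value:
            clusters[value].add(record["ip"])
    return {value: sorted(ips) for value, ips in clusters.items() if len(ips) >= min_size}
```

A cluster with many IPs behind one trait value is a candidate for a pattern-based rule rather than a list of single indicators.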

Live Rescan

Detection engineering depends on confidence. Live Rescan lets teams confirm whether a service, port, banner, certificate, or exposure is still present before writing a rule around it. A signal that was true yesterday may not be true today, and a detection based on stale infrastructure can create noise.

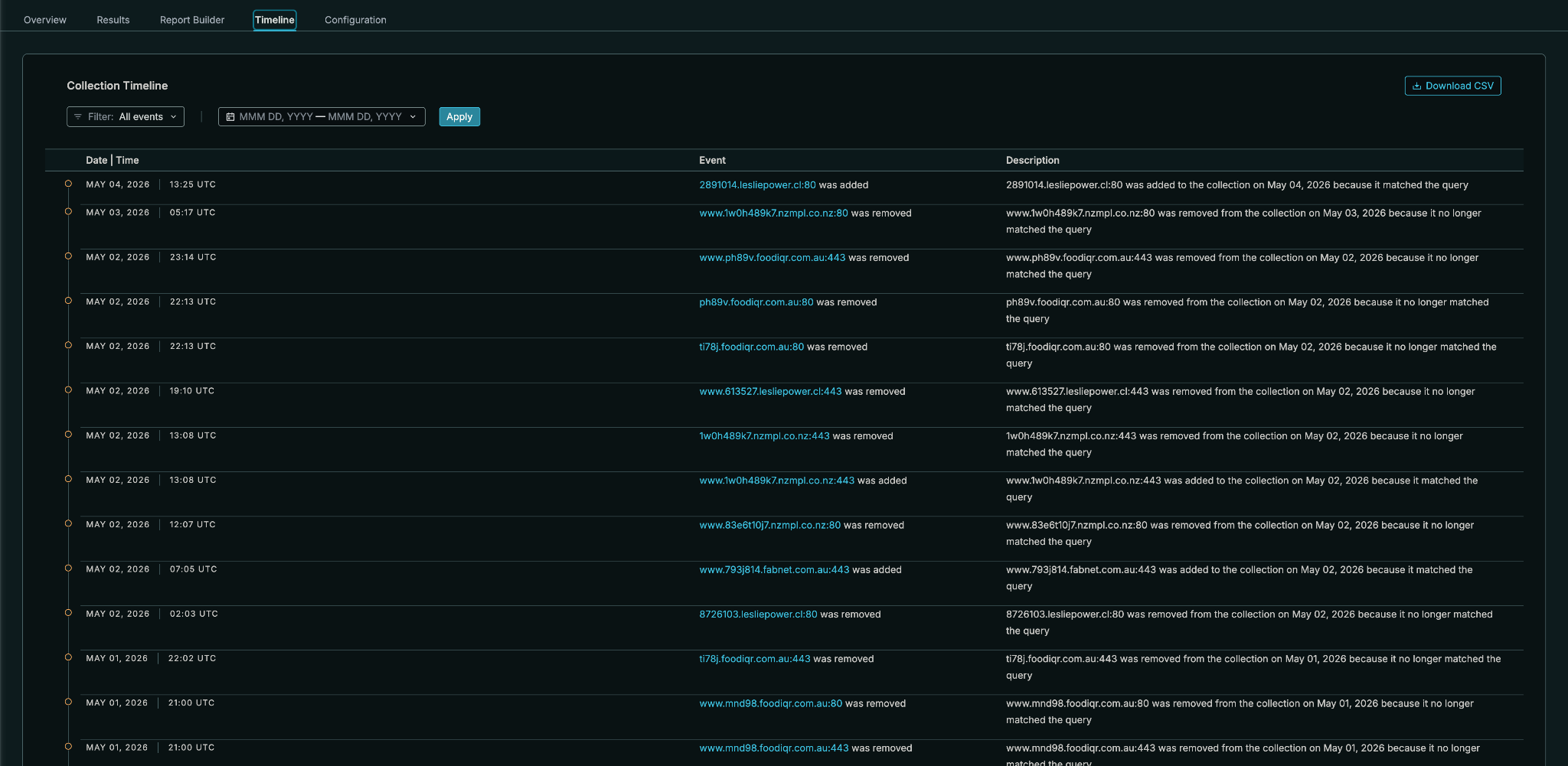

Collections

Collections let teams track infrastructure patterns over time. Instead of treating every query as a one-off hunt, detection engineers can monitor a set of infrastructure conditions and turn changes into recurring workflows. Use them for tuning, or even just a series of mini-feeds.
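If you export a tracked set on a schedule, turning "changes" into a workflow can be as simple as diffing two snapshots. This is a minimal sketch under that assumption; the function name and the plain-set input are hypothetical, and nothing here calls Censys.

```python
from __future__ import annotations


def diff_snapshots(previous: set[str], current: set[str]) -> dict[str, list[str]]:
    """Compare two exported snapshots of a tracked set (domains, IPs, etc.)."""
    return {
        "added": sorted(current - previous),    # candidates for watchlists or alerts
        "removed": sorted(previous - current),  # candidates for tuning or retirement
    }
```

The "added" side feeds detections; the "removed" side is what keeps a rule from going stale.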

Threat, vulnerability, and Internet infrastructure shortcuts

Censys isn’t all raw data. Reputation labels give detection engineers context they can use directly in rules and triage logic.

Is this host running known offensive tooling? Is it exposing remote access? Is it associated with phishing, C2, suspicious files, vulnerable software, bulletproof hosting, or risky infrastructure? Is a domain currently resolving to live infrastructure? Has the host recently changed services or certificates? These are the kinds of details that help teams write higher-fidelity detections and reduce noisy ones.

Basically: Censys is not asking detection engineers to abandon their stack. It gives them Internet intelligence they can investigate, monitor, automate, export, and operationalize wherever detection engineering already happens.

Real-world examples: let’s write some rules together

Hey, look, Censys ARC’s Andrew Northern has made some new findings about an Adversary-in-the-Middle (AiTM) phishing cluster, dubbed OLUOMO! That guy rocks. Turn his research into your shield.

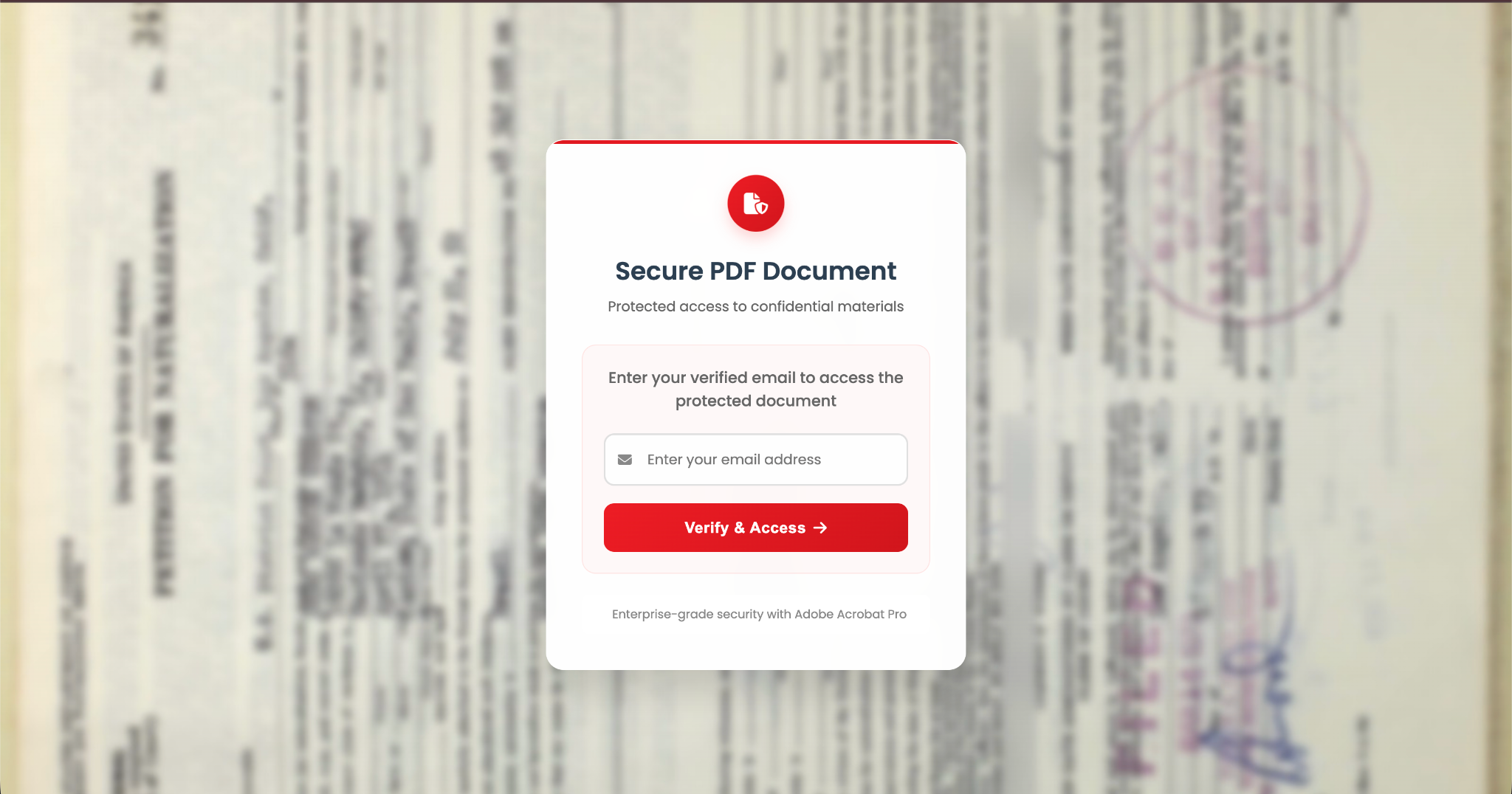

Pictured: The Certificate of Naturalization lure in Adobe phishing context

How to swerve a sub-par detection

The easiest phishing detection to write is usually the worst one.

A detection engineer starts with the reported phishing domain. A user received a “secure document” lure, entered their email, and was redirected into what appeared to be a Microsoft login flow. The first rule is obvious:

Alert when url_domain = "726h53.foodiqr[.]com"

That may catch the exact domain the first victim touched, but it misses the real lesson from OLUOMO. Andrew’s research shows a two-stage AiTM phishing chain: first-stage lures hosted on compromised legitimate websites, and second-stage Microsoft OAuth proxy infrastructure running on Azure Web Apps. The kit used a fake secure document portal, a U.S. naturalization-form background image, a service worker, and an attacker-controlled lookalike credential-routing domain designed to resemble Microsoft infrastructure.

The domain is not the detection. The domain is the seed.

A better workflow starts by opening that domain in Censys and asking: what parts of this infrastructure are reusable?

Let’s do a better job!

For OLUOMO, the answer is not one single observable. It is a kit pattern. The first-stage lure had distinctive HTML artifacts like the title Secure Document Access | Identity Verification, CSS variables, and document-portal copy. The page stored the victim email in localStorage under userIdentity and redirected to a hardcoded second-stage /c path. The second-stage proxy registered /service_worker_Mz8XO2ny1Pg5.js, used the odd redirect_urI parameter with a capital I, proxied Microsoft OAuth content through Azure Web Apps, and routed credential flow through portal.microsoftonline.com.orgid[.]com.

So the detection evolves.

Instead of this:

Alert when url_domain = "726h53.foodiqr[.]com"

Write this:

Alert when a domain, URL, or web property matches the OLUOMO kit pattern:

- html_title = "Secure Document Access | Identity Verification"

- body contains distinctive secure-document CSS variables

- body references "Enterprise-grade security with Adobe Acrobat Pro"

- body uses localStorage key "userIdentity"

- redirect path includes "/c"

- URL parameter resembles "redirect_urI"

- service worker path resembles "/service_worker_Mz8XO2ny1Pg5.js"

- credential routing resembles "*.microsoftonline.com.<attacker-domain>"

- domain resolves to Azure Web App-backed AiTM infrastructure

That is the move from IOC matching to detection engineering.
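The URL-level parts of that pattern can be sketched in a few lines of Python. The artifact values come from the kit description above; the function name, trait labels, and the exact matching choices (for example, treating "/c" as a path prefix) are assumptions, so treat this as a starting point rather than a finished rule.

```python
from __future__ import annotations

from urllib.parse import parse_qsl, urlsplit

SERVICE_WORKER_PATH = "/service_worker_Mz8XO2ny1Pg5.js"
ODD_PARAM = "redirect_urI"  # capital "I" where a lowercase "i" is expected


def url_kit_traits(url: str) -> set[str]:
    """Return the OLUOMO-like traits visible in a single (refanged) URL."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    traits: set[str] = set()
    if parts.path == SERVICE_WORKER_PATH:
        traits.add("service_worker")
    # parse_qsl keeps parameter names case-sensitive, which is the point here
    if any(name == ODD_PARAM for name, _ in parse_qsl(parts.query, keep_blank_values=True)):
        traits.add("redirect_urI")
    # "*.microsoftonline.com.<attacker-domain>": the Microsoft name appears as
    # labels inside the hostname but is not the real parent domain
    if ".microsoftonline.com." in f".{host}." and not host.endswith("microsoftonline.com"):
        traits.add("microsoft_lookalike")
    if parts.path == "/c" or parts.path.startswith("/c/"):
        traits.add("c_path")
    return traits
```

No single trait is conclusive on its own. A short "/c" path in particular is common on benign sites, so it only means something alongside the others.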

Translate to a Censys query: discover the exposed kit

Andrew’s writeup shows the Censys-native version of this idea. The initial discovery query combined body content with the HTML title and returned 65 web properties at the time of collection. Broader pivots on CSS variables, animation names, and JavaScript identifiers expanded discovery to 999 unique web properties, though the validated set was narrowed to 88 true positives across 11 parent domains through rendered screenshot clustering.

Rule: OLUOMO-like secure document portal

Search for web properties where:

web.endpoints.http.html_title = "Secure Document Access | Identity Verification"

And body contains two or more:

"--accent-dark: #D1151C;"

"--accent-light: #FF3340;"

"--accent-red: #ED1C24"

"Enterprise-grade security with Adobe Acrobat Pro"

"userIdentity"

"Verify & Access"

This rule is not trying to catch one domain. It is trying to catch a reusable lure template.

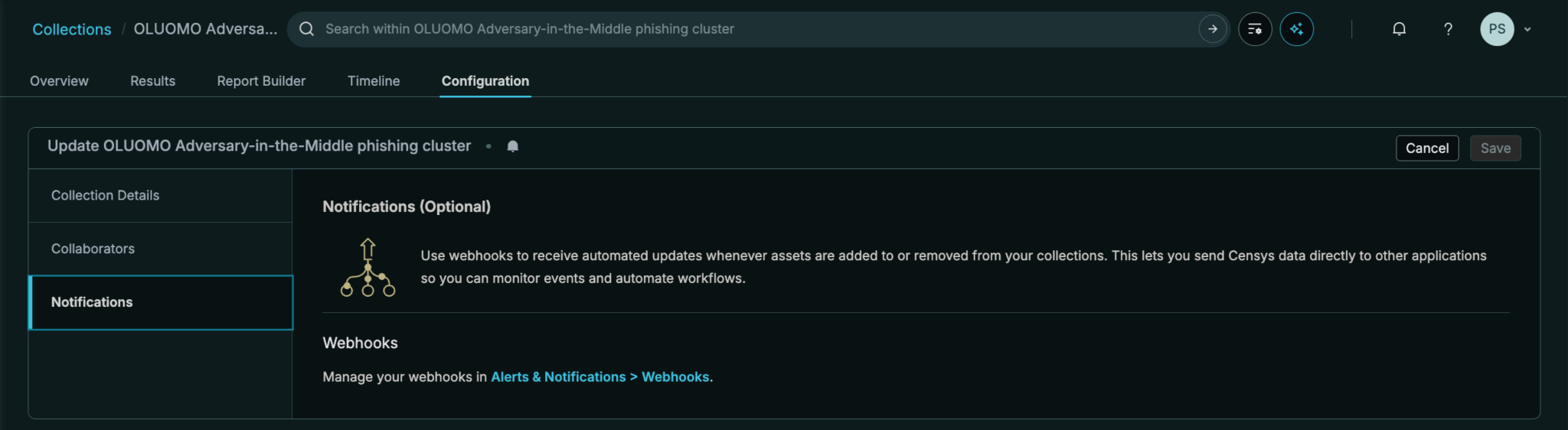
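If you pull page HTML back into your own workshop, the same "title plus two or more body strings" logic is easy to re-check locally. This is a sketch in plain Python, not Censys query syntax; the function name and the regex-based title extraction are assumptions.

```python
import re
from html import unescape

LURE_TITLE = "Secure Document Access | Identity Verification"
BODY_ARTIFACTS = (
    "--accent-dark: #D1151C;",
    "--accent-light: #FF3340;",
    "--accent-red: #ED1C24",
    "Enterprise-grade security with Adobe Acrobat Pro",
    "userIdentity",
    "Verify & Access",
)
_TITLE_RE = re.compile(r"<title[^>]*>(.*?)</title>", re.IGNORECASE | re.DOTALL)


def matches_lure_template(page: str, min_body_hits: int = 2) -> bool:
    """True when the title matches exactly and enough body artifacts appear."""
    text = unescape(page)  # so "Verify &amp; Access" still matches
    match = _TITLE_RE.search(text)
    title = match.group(1).strip() if match else ""
    hits = sum(1 for artifact in BODY_ARTIFACTS if artifact in text)
    return title == LURE_TITLE and hits >= min_body_hits
```

Raising `min_body_hits` trades recall for precision, which is the same tuning decision you would make on the query itself.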

Translate to a Censys Collection: monitor the campaign pattern

Collection: OLUOMO-like AiTM phishing infrastructure

Track web properties where:

title/body matches the secure document lure

OR service worker path matches the known OLUOMO worker

OR body contains the OLUOMO redirect/localStorage artifacts

OR active DNS maps suspicious subdomains to known second-stage infrastructure

OR domain resolves through Azure Web App infrastructure and matches the kit pattern

Monitor for:

new first-stage compromised domains

new second-stage redirect domains

new Azure Web App backends

new lookalike Microsoft credential-routing domains

new Imgur/postimg-hosted lure variants

rapid subdomain churn

This is where Censys becomes more than a search box. The team is no longer asking, “Have we seen this domain?” They are asking, “Has this phishing kit appeared anywhere else on the Internet?”

Continuously push the results to the rest of your stack via integration or webhook.

Translate to SIEM: alert when internal telemetry touches the tracked set

Rule: Internal user touched OLUOMO-like infrastructure

When:

proxy, DNS, email security, EDR, or firewall telemetry contains:

destination_domain IN censys_collection("oluomo-like-aitm")

OR resolved_ip IN censys_collection("oluomo-like-aitm")

OR url contains "/c" AND domain IN suspicious_aitm_redirect_domains

And one or more:

user submitted credentials shortly after navigation

Microsoft login or OAuth flow observed after initial document portal visit

destination is a newly observed domain for the organization

destination resolves to Azure Web App-backed infrastructure

domain resembles a compromised legitimate business website

Then:

Severity = High

Attach Censys host/domain profile

Open identity investigation

Check Entra sign-ins and OAuth grants

Invalidate sessions if confirmed

This rule connects Internet context to internal telemetry. Censys identifies the phishing infrastructure pattern. The SIEM determines whether anyone in your organization touched it.
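Your SIEM will have its own rule language, but the shape of the logic is worth seeing in one place. The sketch below assumes the tracked set has already been exported into plain Python sets; the `Event` fields and the `evaluate` function are hypothetical names, and only three of the corroborating signals are modeled.

```python
from dataclasses import dataclass
from typing import Optional, Set


@dataclass
class Event:
    """Hypothetical normalized telemetry record (proxy, DNS, email, EDR, firewall)."""
    destination_domain: str = ""
    resolved_ip: str = ""
    url_path: str = ""
    credentials_submitted: bool = False
    oauth_flow_after_portal: bool = False
    newly_observed_domain: bool = False


def evaluate(event: Event, tracked_domains: Set[str], tracked_ips: Set[str],
             redirect_domains: Set[str]) -> Optional[str]:
    """Return "High" when the event touches tracked infrastructure and is corroborated."""
    touched = (
        event.destination_domain in tracked_domains
        or event.resolved_ip in tracked_ips
        # mirrors the loose 'url contains "/c"' condition, gated by the domain list
        or ("/c" in event.url_path and event.destination_domain in redirect_domains)
    )
    corroborated = any((
        event.credentials_submitted,
        event.oauth_flow_after_portal,
        event.newly_observed_domain,
    ))
    return "High" if touched and corroborated else None
```

Requiring both halves is what keeps this from being a plain blocklist match.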

Shall we write a SOAR enrichment rule, too?

Rule: Escalate suspicious secure-document portals with Censys context

When:

An alert contains a URL, hostname, domain, or resolved IP

Look up in Censys:

current DNS resolution

historical DNS resolution

hosting provider and ASN

live services

page title

body artifacts

certificates

related domains

Censys threat labels

Collection membership

Escalate if:

page title matches a known phishing lure

OR body contains multiple OLUOMO-like kit artifacts

OR domain redirects to a second-stage AiTM proxy

OR domain resolves to known campaign infrastructure

OR host shares service-worker, title, body, certificate, or DNS traits with tracked infrastructure

This is useful when the first alert comes from somewhere else: email security, proxy logs, browser telemetry, identity alerts, or EDR. Censys becomes the decision layer that tells the workflow whether the external infrastructure looks like a known phishing kit or just another random web page.
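Inside a playbook, the "Escalate if" step boils down to a function that takes the looked-up profile and returns the reasons it should escalate. The sketch below assumes the lookup has already happened and been flattened into a dict; every key name is hypothetical, and the threshold of two body artifacts is an assumed reading of "multiple".

```python
from __future__ import annotations


def escalation_reasons(profile: dict, tracked: dict) -> list[str]:
    """Return the escalation conditions that a looked-up profile satisfies.

    `profile` is a hypothetical flattened enrichment result; `tracked` holds
    sets of known lure titles, campaign IPs, and other tracked traits.
    """
    reasons: list[str] = []
    if profile.get("page_title") in tracked.get("lure_titles", set()):
        reasons.append("page title matches a known phishing lure")
    if profile.get("body_artifact_hits", 0) >= 2:  # "multiple" is assumed to mean 2+
        reasons.append("body contains multiple kit artifacts")
    if profile.get("redirects_to_aitm_proxy", False):
        reasons.append("redirects to a second-stage AiTM proxy")
    if set(profile.get("resolved_ips", [])) & tracked.get("campaign_ips", set()):
        reasons.append("resolves to known campaign infrastructure")
    shared = [
        trait for trait in ("service_worker_paths", "cert_fingerprints")
        if set(profile.get(trait, [])) & tracked.get(trait, set())
    ]
    if shared:
        reasons.append("shares traits with tracked infrastructure: " + ", ".join(shared))
    return reasons
```

An empty list means "do not escalate"; a non-empty one doubles as the analyst-facing explanation attached to the ticket.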

TIP/watchlist rule: convert the research into usable intelligence

Feed: OLUOMO-like infrastructure watchlist

Include:

first-stage lure domains

second-stage AiTM proxy domains

Azure Web App hostnames

resolved IPs

credential-routing domains

service worker paths

lure page titles

distinctive body strings

Imgur/postimg lure assets

first seen / last seen timestamps

reason for inclusion

confidence level

This lets a detection engineer hand the output to the rest of the stack: SIEM correlation, SOAR enrichment, proxy review, email security tuning, identity investigation, and incident response scoping.
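One way to keep that feed honest is to give every entry the same shape, so "reason for inclusion" and "confidence level" are never optional. The sketch below is one possible layout; the class name, field names, and three-level confidence scale are assumptions, not a TIP standard.

```python
import json
from dataclasses import asdict, dataclass
from typing import Iterable

CONFIDENCE_LEVELS = ("low", "medium", "high")  # assumed scale


@dataclass(frozen=True)
class WatchlistEntry:
    value: str        # the domain, IP, path, title, or body string itself
    kind: str         # e.g. "first_stage_domain", "service_worker_path"
    first_seen: str   # ISO 8601 timestamp
    last_seen: str    # ISO 8601 timestamp
    reason: str       # why this entry is on the list
    confidence: str   # one of CONFIDENCE_LEVELS

    def __post_init__(self) -> None:
        if self.confidence not in CONFIDENCE_LEVELS:
            raise ValueError(f"unknown confidence level: {self.confidence!r}")


def to_jsonl(entries: Iterable[WatchlistEntry]) -> str:
    """Serialize entries as JSON Lines, one entry per line."""
    return "\n".join(json.dumps(asdict(entry), sort_keys=True) for entry in entries)
```

JSON Lines is used here only because most SIEM and SOAR tools can ingest it; swap in whatever format your stack prefers.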

The best rule, and goodbyes

The best detection is not: Block this one phishing domain.

It’s: Alert when internal telemetry touches infrastructure that matches the externally observable traits of this AiTM phishing kit.

For OLUOMO, that means the sum of its parts: the secure-document lure, the HTML/body artifacts, the redirect structure, the service worker, the Azure Web App-backed proxy layer, the suspicious Microsoft lookalike credential-routing domain, and the domain-to-infrastructure relationships that Censys can observe over time.

Detection engineering is ultimately about ownership. Vendors will continue to ship useful detections inside EDR, NDR, SIEM, cloud, and identity products, but no vendor can fully understand your environment, your adversaries, your exposures, or your tolerance for noise.

Your team has to build the logic that protects your organization specifically. With the right Internet intelligence, a disposable IOC becomes something far more useful: a detection your team can trust.

It’s all about trust.