In this first episode of Censys ARC Flash, Principal Security Researcher Emily Austin and Senior Security Researcher Himaja Motheram break down real-world cyber threats observed across the Internet. From Iran-linked activity targeting industrial control systems to the growing role of AI in modern attacks, this session highlights what’s actually visible, and what it means for defenders.

Emily:

Welcome to ARC Flash, the biweekly webcast from the Censys research team. I’m Emily, a researcher here at Censys.

Himaja:

And I’m Himaja, also a researcher on the team at Censys. These will be webcasts coming out from our team every two weeks where you’ll hear from folks on the ARC research team about a wide variety of topics, about what we’re seeing in Censys Internet-wide scanning data, particularly as it relates to real world events and what that data actually tells us about the broader security landscape.

Emily:

And Censys is at its core an Internet intelligence platform. We have the most comprehensive in-depth Internet map data, and that data is what grounds everything that we’re going to be talking about on this show. So you can look forward to hearing about our original research, news about recent breaches, things that we’re able to track through Censys data, and honestly, just some of the weird, unusual, wild things that we find as we’re scouring the Internet.

Himaja:

Our goal with this show is really to just bring a teaspoon of rationality and groundedness to the chaos and the headlines that you’re seeing every day in security and make this not just about commentary and hot takes, although we will have plenty of those, but to also root things in the data that we’re seeing in the Censys Internet map and what it tells us and what it doesn’t tell us. And that’s the lens that we’re going to apply to everything.

Emily:

And before we dive in, as a heads up, everything that we’re gonna talk about today will be linked in the show notes so you can dig into anything that we cover.

Himaja:

So for our first episode, it’ll be a little bit of a longer segment talking about two things. First, we’re going to dive into a recap of some of the original research we’ve done surrounding the Iran conflict going back to last summer and what we see in Internet scanning data there. And then for the second segment, we’re going to talk about everybody’s favorite topic, which is AI, but from a very specific lens of what it looks like in our data set. So starting with Iran.

We want to start this segment with some context because we’ve actually been tracking this Iran related Internet activity for close to a year now. And it helps to understand that background before we get into the most recent

Emily:

So this particular thread around Iranian threat activity goes back to June of 2025. Obviously, there is a long and storied history of Iranian threat activity, but this particular piece will kind of start, will bookend in June of 2025, which is when the US conducted airstrikes on Iranian nuclear sites. And we started to see the Internet response almost immediately. We saw and measured an Internet blackout in the region. You could see connectivity actually dropping in our data in real time, which was really interesting and one of the first times we’ve really been able to do that. And then around that time, the US Department of Homeland Security issued an advisory warning of a heightened threat environment in the United States, particularly as it concerned critical infrastructure. And by the end of June, CISA and partners had actually released a separate alert specifically around critical infrastructure to remain vigilant around targeted activity from Iranian actors.

Himaja:

Right, so I know that that’s when we on the research team started asking the question, okay, if Iranian actors are going to target US critical infrastructure, what does that attack surface actually look like? So can you talk a little bit about that? What was actually the scope of what they have historically gone after? What specific devices did we look at?

Emily:

Yeah, so for this particular research last summer and then again this year when we kind of did a refreshed look at exposures, we looked at four different types of technology that we know we have good visibility into and can kind of speak authoritatively about. And so these are all in the industrial control systems sector. The first I’ll talk about is Unitronics HMIs and PLCs. So Unitronics, you might recognize this name from attacks on water facilities in the US in 2023.

But they’re an Israeli manufacturer of PLCs and HMIs, and their devices are used across a variety of industries. But again, we kind of see attacks around the water and wastewater sector. They also were kind of infamous for shipping with default credentials of 1111, which is obviously not a great thing, particularly when you’re sitting on the open Internet, right? The next is an interface called Orpak SiteOmat.

Himaja:

Classic.

Emily:

And this is again, an Israeli company that provides a lot of things related to oil and gas, but this particular component is fuel station automation and fleet management. And it’s a web interface. It also ships with a default username and password, which you can find in those docs. The third technology that we looked at was Red Lion. Red Lion is a US-based company that specializes in a lot of things, but… HMIs, meters, controllers, again, all used in this automated or industrial environment. And they’re used across a lot of different sectors, which is kind of interesting. So water and wastewater, oil and gas, you kind of see them across. And Red Lion Crimson is actually the configuration software for their controllers. And this is interesting too, because it’s got this drag and drop interface that makes it really easy to program these systems. And the final component that we looked at was the Tridium Niagara Framework.

And so this is another US company. The Niagara framework is heavily, it’s a building automation framework. And it’s used to control, for building administrators to control things like lighting and HVAC and alarm systems or security systems. And so these were things that we knew we had good visibility into. And after looking at them last summer, we started thinking, well, okay, where are these now? Like, what do we see when we look at these now?

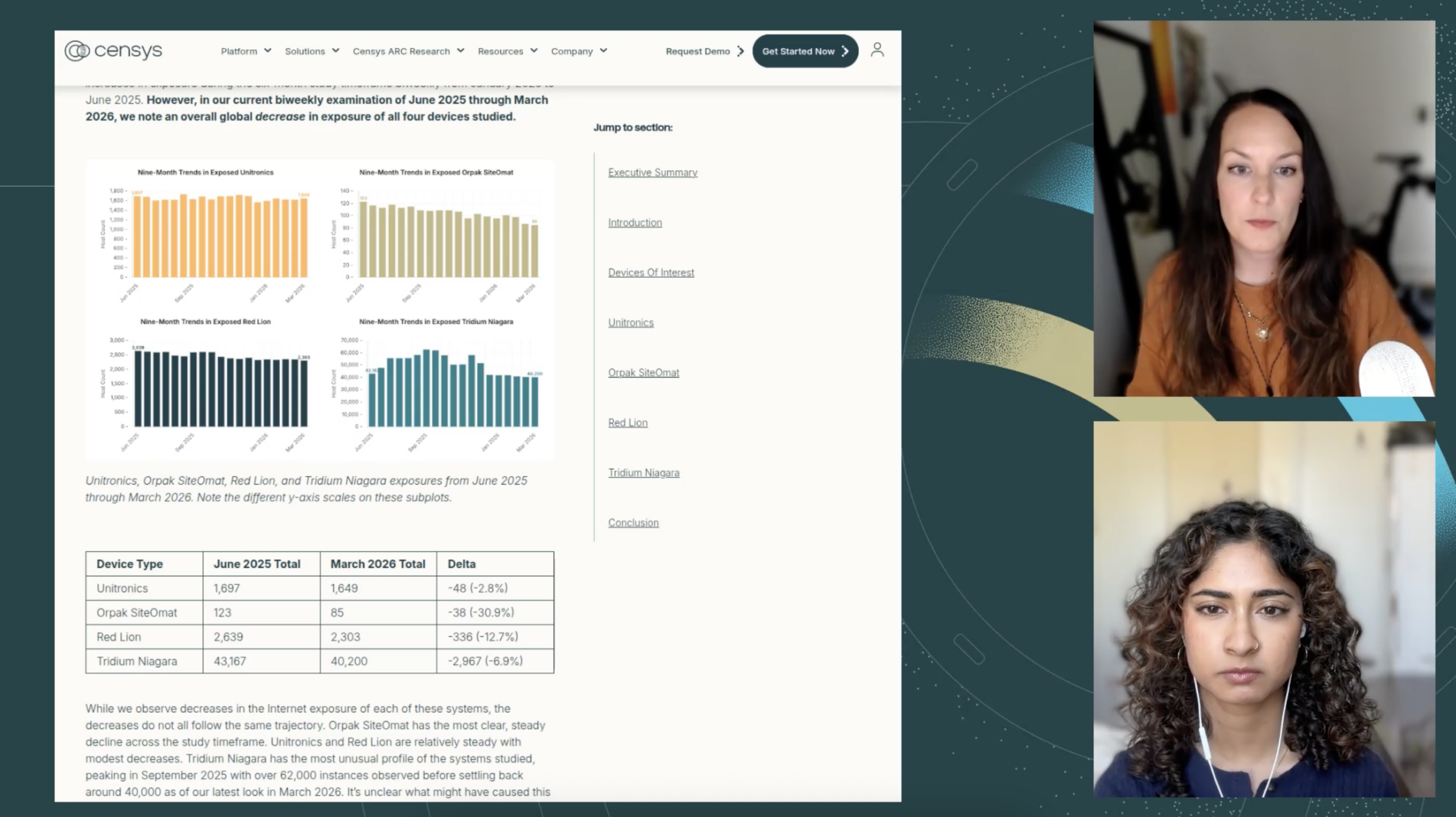

I think the good news, if you want to call it that, I will take any opportunity I can to say good news in the security world, is that all of these systems actually decreased in the time since we last looked at them to March of 2026 is when we last looked at this. And some of them quite substantially, some of them a little more subtly, but I think the big picture is largely, there was a decline, whether subtle or not, in the exposure of these particular devices.

Himaja:

Yeah, that type of decrease was actually really interesting to me because we do see these sorts of fluctuations of like a thousand or so devices up and down on the Internet quite a bit in our data set, but something very slow and steadily declining like that over a year really points to some sort of intentional either taking them offline or some other visibility change rather than some random noise. Is that safe to say?

Emily:

Yeah, I think so. I think it’s hard to make causal statements with our visibility. Like we just see that it’s on or it’s off, right? Like it’s online or it’s not. And we can go back and look at timelines. And if there’s a dramatic drop, we could say, well, maybe there was, and there’s also some sort of kinetic activity or we see something later that’s come out around that time. We go, okay, well, maybe this is a little bit of explanation. But then, yeah, when you see that sort of gradual decrease in these systems online, it does kind of point you more toward this notion of like, well, maybe people are just taking them offline because they know that’s the right thing to do to protect these systems.

Himaja:

We can hope, right? That would be great.

Emily:

Yeah, no kidding.

Himaja:

So the other thing that I was curious about with this one is I saw that with Tridium Niagara, the scale was a bit different from the other ones, where we still have over 40,000 Niagara systems exposed globally. And Niagara is the framework that building administrators use to control things like lighting or HVAC or door access and stuff like that. But something that I’ve heard people say is that for a building management system, the threat model is different. What’s the worst you could do from exposing a building administration system? But there is a threat from those, isn’t there?

Emily:

For sure. And like, I don’t want to conflate this with, you know, a very serious, you’re, you know, cutting the power off to an entire city kind of situation. But we know that in the last, you know, six months or a year, threat actors have actually taken advantage of some of these systems, these building controller systems that we kind of tend to think of as like, this is not really that bad. And have gone in and, you know, been able to pull footage from their security cameras, lock doors, turn off lights. And then if you think about this in the context of like, maybe a medical facility or facility where, maybe that doesn’t have generators or something like that, that could get kind of questionable. But yeah, I mean, it’s really sort of about flexing that, you all we found these things and we’ve done this attack. And granted, it’s not, again, like particularly, I hate to use the word sophisticated in this context, but it’s something that they’re able to do and sort of flex on a little bit and sort of kind of have that momentum of like, we’re going after these entities in this, you know, rival country or rival region and we’re taking their buildings or facilities down.

Himaja:

Yes, and I also think that people underestimate the sort of recon aspect of these devices where, yes, a lot of it is like braggadocio — great word — or things like, hey, like over claiming, but there is value still in simply establishing persistence in a device or establishing monitoring over a device without immediately causing disruption. We see this in a lot of the critical infrastructure sector where threat actors will just kind of map things out and do recon and gather data that are leaked by these interfaces. And that’s enough for them to kind of map out a plan and put themselves in a position to cause disruption in the future if they wanted to, even if not immediately.

Emily:

Yeah, absolutely. And when we think, too, about — not to get too heavily into this — but network segmentation sometimes not being a thing, that opens all kinds of interesting doors literally and figuratively in situations like this.

Himaja:

Yeah, absolutely. So then that was in March that we took a look at that data. But then I know that in early April, there was a joint advisory from the FBI, CISA, a bunch of other three-letter agencies that talked about how Internet-facing Rockwell Automation and Allen-Bradley PLCs, which are devices that run a huge proportion of US water, energy, and manufacturing infrastructure, were also being actively exploited by Iranian affiliated actors. And I know that we immediately went and looked at Censys data for this as well. So what did we find?

Emily:

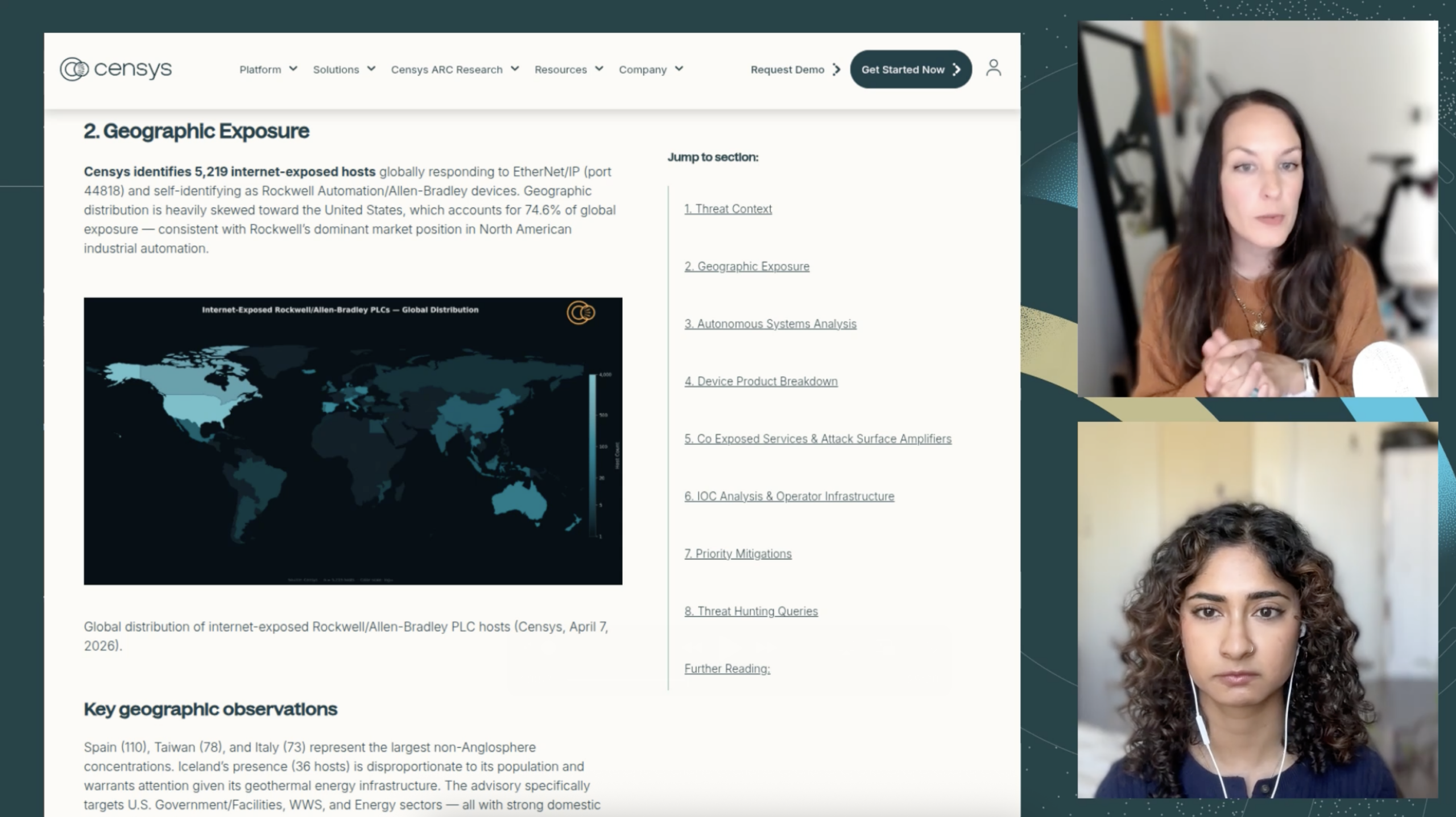

Yeah, so this was kind of a perfect situation for us to go and figure out sort of what is the attack surface here. And what we found at the time was that we saw just over 5,200 Internet-exposed hosts that appear to be Rockwell or Allen-Bradley devices. So the United States is a huge proportion of that, about 75% of them. And that’s a lot of critical infrastructure just kind of sitting on the Internet. And here you can see, you know, really skewed towards North America, a little bit Australia as well. But yeah, primarily the US is where we see quite a lot of this.

Himaja:

So almost 4,000 hosts just in the US. Who’s hosting those? Which networks are we finding those in?

Emily:

So if you’ve heard us talk about critical infrastructure or industrial control systems at any point in the past, you’ve probably heard us talk about this and won’t be a surprise to you that the majority of these hosts, actually globally, about 50% of them globally, but particularly in the US, are found on Verizon’s CELLCO-PART network. AT&T Mobility is another kind of top network there. And these are interesting because these are mobile networks.

And we tend to see this again in industrial control systems. And this sort of indicates that these are like field deployed devices. They’re not necessarily somewhere they can have like a wired Internet connection. So we do tend to see lots of ICS devices on these types of networks. So they’re in, you know, pump stations, substations, water facilities. And that’s interesting not only because there’s such a high concentration of them on these networks, but it also makes determining who owns them really, really challenging because these hosts all just kind of point back to Verizon, to CELLCO-PART. So that makes notifying an owner really challenging. We’ve dealt with this multiple times in the past and it’s tough. So we don’t really know who owns these, but we know that they are sitting out there. We know that they are on the Internet.

Himaja:

Yeah, I’ve run into this a lot in my own research seeing that especially for devices that are located in rural regions or places without a lot of connectivity, a lot of times the only options are to host on either these big ISPs or these maybe more regional niche ISPs that don’t have the resources to really respond to requests like this where it’s like, can you help us get this critical infrastructure footprint offline? Or just off the public Internet, right?

So I know when we looked at this story in particular with Rockwell, we noticed that it wasn’t just the PLCs themselves that were exposed, right? What else did we find running on those hosts?

Emily:

Right, so we don’t just see these Rockwell devices just kind of sitting on their own on the Internet. They also often have these other services on them, which is not unusual, but it does make the attack surface a little different. So not surprisingly, we do see a web interface over HTTP. VNC is the one where I start to kind of like, that doesn’t feel great. That’s a remote desktop.

VNC sometimes is really easy to get into and it’s just sitting there. So that’s sometimes not the most ideal situation. And then we also see several other types of services that tend to be co-located on hosts with these Rockwell, Allen-Bradley devices. So SSH and FTP. SNMP is another one that’s a little bit interesting and really shouldn’t be out there. Modbus, Telnet, Red Lion Crimson, like all of these things ideally probably wouldn’t be.
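As a rough illustration of the point above, here is a minimal Python sketch of flagging the services called out in this segment when they turn up on the same host as a PLC. Everything in it is an assumption made for illustration: the `RISKY_SERVICES` table, the short reasons attached to each entry, and the sample host are all hypothetical and are not taken from Censys data or tooling.

```python
# Hypothetical triage table: services named in the discussion above, each with
# an illustrative (not authoritative) reason it is worth a second look.
RISKY_SERVICES = {
    "VNC": "remote desktop, sometimes easy to get into",
    "TELNET": "cleartext remote shell",
    "FTP": "cleartext file transfer",
    "SNMP": "device management protocol",
    "MODBUS": "industrial protocol with no built-in authentication",
}


def flag_risky_services(services):
    """Return {service: reason} for each service name found in RISKY_SERVICES.

    Matching is case-insensitive; unknown services are ignored.
    """
    found = {}
    for name in services:
        key = name.upper()
        if key in RISKY_SERVICES:
            found[key] = RISKY_SERVICES[key]
    return found


# Made-up list of services on one host, not a real scan result.
example_host = ["HTTP", "vnc", "SSH", "Modbus"]
for service, reason in sorted(flag_risky_services(example_host).items()):
    print(f"{service}: {reason}")
```

A table like this is only a starting point for triage; which services matter depends on the environment being reviewed.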

Himaja:

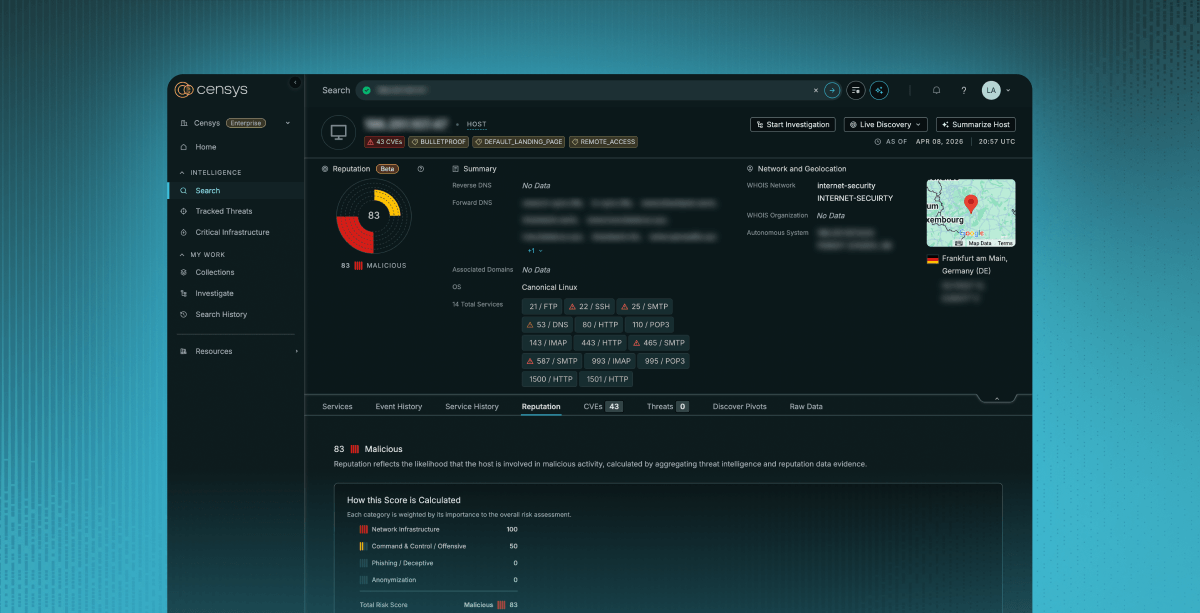

So one of the things I love about our research capability is that we can kind of help both sides of this equation. We can look at the scope of vulnerability and the footprint of what is actually exposed. And then we can also take IOCs about the actual threat actor and adversary infrastructure and then map that out as well and see the whole picture. So I know we did that for this because in the advisory from CISA and others, they provided eight indicator IPs for the infrastructure. And I know that we actually went in and dug in, found patterns with them. So can you talk about that?

Emily:

Yes. So what I think is really interesting, like we just talked about this exposure piece, but I think what a lot of folks missed about this advisory and like, I didn’t really see this talked about a lot when this came out was, you know, CISA published those initial eight IP addresses as IOCs. And so we took those and we were like, let’s go see what else we can find that looks potentially related, right? And sometimes that yields… sometimes there’s nothing. Sometimes that’s the exhaustive list and we just don’t really see anything else. But in this case, we actually found four additional IP addresses: same autonomous system, same Windows image, same RDP port, similar certificate, same timeframe of uptime. They appear to be related operator infrastructure. And so we included those in our report as well. So if you’re a defender, you probably want to roll those four IP addresses as well into any kind of detection or blocking mechanisms that you have, because they do appear to be closely related to the indicators that CISA published.
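The pivot described above, starting from known indicators and looking for other hosts that share the same traits, can be sketched roughly in Python as follows. This is not the method used in the report. The `Host` record, its field names, and all sample values are hypothetical, the addresses come from documentation ranges, and the AS numbers are private-use values. Only three of the shared traits (autonomous system, OS image, RDP port) are compared; the certificate similarity and uptime window mentioned above are left out because they would need fuzzy matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Host:
    """Hypothetical host record; field names are illustrative only."""
    ip: str
    asn: int
    os_image: str
    rdp_port: int


def fingerprint(host):
    """Traits compared when pivoting: AS number, OS image, RDP port."""
    return (host.asn, host.os_image, host.rdp_port)


def find_related(seeds, candidates):
    """Return candidates that match a seed's fingerprint but are not seeds."""
    seed_ips = {h.ip for h in seeds}
    seed_prints = {fingerprint(h) for h in seeds}
    return [
        h for h in candidates
        if h.ip not in seed_ips and fingerprint(h) in seed_prints
    ]


# Placeholder data only: documentation IP ranges and private-use AS numbers.
seeds = [Host("192.0.2.10", 64512, "windows-image-a", 3389)]
candidates = [
    Host("192.0.2.10", 64512, "windows-image-a", 3389),    # the seed itself
    Host("192.0.2.77", 64512, "windows-image-a", 3389),    # same fingerprint
    Host("198.51.100.5", 64513, "windows-image-a", 3389),  # different AS
]
for host in find_related(seeds, candidates):
    print(host.ip)
```

Exact matching like this will produce false positives on common configurations, so any hit would still need manual review before being treated as related infrastructure.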

Himaja:

Yes. So if I’m an OT defender, if I’m somebody who works in ICS security and I’m wondering how can I take an actionable step to protect my org besides, you know, monitoring logs and blocking the IPs that we know to be malicious, what else can I do with this data? What should be my priority right now?

Emily:

So well, I mean, this is even separate from the data. But this is the boring hygiene thing that we talk about anytime we talk about ICS, which is like these things shouldn’t be on the public Internet. Like 9.999 times out of 10 should not be on the Internet. So that’s a first step, like disconnect them if they don’t need to be there. And they probably don’t. The second thing, in particular for these devices, is that there is a physical mitigation in this case, so for controllers that have a physical switch, CISA recommends that you put it in the run position, which will prevent any sort of remote overrides from happening. So that’s another step you can take. We’d also recommend using that expanded IOC list. So take those eight that CISA gave you and then take the additional ones that you’ll find in our advisory. Again, we’ll publish those in the show notes. Take those and add those to your block list.
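For the last step, combining the advisory’s indicators with the additional ones into a single block list, a minimal Python sketch might look like the following. The function name `build_blocklist` is hypothetical and the addresses are placeholders from documentation ranges, not the real indicators; those are in the linked advisory and situation report.

```python
import ipaddress


def build_blocklist(*sources):
    """Merge several lists of IP strings into one sorted, de-duplicated list.

    Each entry is parsed with the standard-library ipaddress module, so a
    malformed entry raises ValueError instead of silently reaching a firewall.
    """
    merged = set()
    for source in sources:
        for raw in source:
            merged.add(ipaddress.ip_address(raw.strip()))
    # Sort by (version, numeric value) so IPv4 and IPv6 entries can coexist.
    return sorted(merged, key=lambda addr: (addr.version, int(addr)))


# Placeholder values only; substitute the published indicators.
advisory_ips = ["192.0.2.2", "192.0.2.1"]
additional_ips = [" 192.0.2.2", "198.51.100.9"]

for addr in build_blocklist(advisory_ips, additional_ips):
    print(addr)
```

Parsing each entry before use is a deliberate choice here: it catches copy and paste errors early and makes the duplicate check independent of stray whitespace.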

Himaja:

It’s crazy that we still have to tell people to take their stuff off the Internet.

Emily:

Also, no default passwords. Maybe that doesn’t apply specifically in this case, but like in some of the things that we’ve looked at previously, default passwords are just — for everything ICS or not — like probably shouldn’t be doing that.

Himaja:

Just add a percent symbol to the end of admin. Please, for me? Just do “admin%.” “admin11%” — fine. That’s all you have to do. Security!

Emily:

That’s it. That’s the solution.

(Just to be clear, we’re totally kidding. This is not real advice.)

And if you just want a quick and dirty way to see what your own Rockwell or Unitronics or other PLC exposure looks like from the outside, you can use the query that we provided in the situation report that will be in the show notes and just append your IP blocks or your ASN and you can use that to figure out right away whether you have something to worry about.
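The same scoping idea can be applied offline to any exported list of exposed hosts. The Python sketch below does not use or reproduce the query from the situation report; it assumes you already have a list of exposed IP addresses and a list of your own CIDR blocks. The function name and all sample values are hypothetical.

```python
import ipaddress


def hosts_in_my_networks(exposed_ips, my_cidrs):
    """Return the exposed IPs that fall inside any of the given CIDR blocks."""
    networks = [ipaddress.ip_network(cidr) for cidr in my_cidrs]
    mine = []
    for raw in exposed_ips:
        addr = ipaddress.ip_address(raw)
        # Membership is False when the address and network versions differ.
        if any(addr in net for net in networks):
            mine.append(raw)
    return mine


# Placeholder data from documentation address ranges.
exposed = ["203.0.113.15", "198.51.100.20", "192.0.2.200"]
my_blocks = ["203.0.113.0/24", "192.0.2.128/25"]

for ip in hosts_in_my_networks(exposed, my_blocks):
    print(ip)
```

An empty result only means nothing in the supplied list matched; devices on carrier networks, as discussed earlier, would not show up under an organization’s own address space.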

Emily:

Okay, so let’s talk about AI and security, but let’s do it a little differently than what you’ve probably been hearing over the last few weeks because the coverage has been plentiful and very dramatic with a product named Mythos. How could it not be dramatic, I guess? But we want to try to bring a little bit of groundedness and sort of calm to this conversation. So let’s just start with talking about Mythos because I think that’s the thing everybody is really talking about in some context or another.

Himaja:

Yes. So unless you’ve been living under a rock, you will have been subject to the discourse about Mythos, which is the new frontier model from Anthropic, Claude Mythos. They claim that it is so capable at finding vulnerabilities in software that it is too dangerous to release publicly. And so they’ve released it as a preview and announced something called Project Glasswing, which is a coalition of all of these big tech software companies where they’re allowed to use the model for defensive security work and sort of get a head start on what is foretold to be a vulnerability exploit apocalypse where everybody is doomed and nothing will be fine ever again in security and we all should all just lie down. I am in support of the idea that we should all just lie down, but I want to actually talk about some of the real world events that we’ve seen around this, some of the evidence that we’ve seen, and sort of decrease the FUD around Mythos and actually talk about what does exploit intelligence and what do cyber attacks about AI look like in the real world.

So I think the biggest news story we’ve heard about Mythos in recent weeks is that Mozilla reported that they used the Mythos preview as part of Project Glasswing to scan the Firefox browser, and they found 271 bugs using the tool and patched all of them before the release. But what I want to really highlight here is that Mozilla’s CTO himself said something like, so far we’ve found no category or complexity of vulnerability that humans can’t find that this model can’t. Right? So I just want to emphasize that this isn’t magical. It’s just an acceleration of what already would have happened.

Emily:

I think something that’s really interesting, his comment about this model isn’t finding things that humans also couldn’t, I think is very telling because there’s a lot of hand wringing over it and it’s sort of like, hold on, maybe it’s not quite as serious as everyone is thinking. There’s this whole notion that it’s sort of marketing hype and that very well could be true, but I think hearing from someone whose team has been hands on with it, I think adds to that as well as like, hold on, let’s kind of take a measured approach and let’s not panic just yet. Let’s be prepared, let’s be realistic because I do think one thing that’s going to be a little bit concerning is, you know, is your bug triage team ready for the onslaught of vulnerabilities, the tickets that are going to get opened for your security team, frankly, to fix. That feels like a really big risk to me. You know, you’re going to unleash one of these models on your code and now you’re going to have all of these bug tickets and it’s like, okay, now we’ve got like this huge backlog. That feels kind of like a big deal. But in terms of finding bespoke, like all of these things, I think that’s just a little bit… it feels a little overstated to me. I don’t know.

So if that’s not really how we’re seeing threat actors use these capabilities, what are we seeing? Like, can we paint a picture of how this is actually, how AI is actually being weaponized? Like, there’s been so much to do about it’s making phishing easier, it’s making all these attacks easier, but like, what are we actually, what can we actually see and say about how it’s being utilized in attacks now?

So along these lines, the Vercel breach has been in the news this last week or so. And there were some rumblings that this was potentially an AI assisted attack. Can you talk more about that?

Himaja:

Yes, so for context, Vercel suffered a breach in the past week where the threat actor actually compromised a Vercel employee’s third-party AI agent tool. The tool is called context.ai and they took that one compromised account and used it to pivot into the employee’s Google Workspace account and from there go into the internal Vercel infrastructure and decrypt environment variables and steal credentials, et cetera, et cetera. And some folks on the Internet were speculating that the attack might have been AI accelerated based on how quickly it was carried out and also claims that the attacker seemed to demonstrate a very in-depth understanding of Vercel and its internal infrastructure.

So I won’t dispute that maybe AI was used to do this attack. There’s no real evidence to the contrary or to support it. We don’t know that much yet. But I want to look at the facts again here. So the initial access was through a third-party AI tool with OAuth access to a production environment. Granted, the people who were affected by this attack were people who had environment variables that weren’t marked as sensitive. So the things that were stolen were things that already weren’t being protected properly, as a note. But also, this is not an exotic zero day. The attack surface here was stealing credentials through a third-party supply chain piece of software. And so I think that, again, is another supporting story for this idea that the same attacks are being done, the same techniques are being done, maybe at a quicker velocity. But what you do as a defender, I don’t think that story changes a huge amount.

The takeaway is that the scary AI Mythos is not going to find categories of bugs that humans can’t. It’s not going to change the attack surface in ways that are completely unimaginable to you. It’s just going to exacerbate these problems that we’ve been talking about for years and years and years. And it’s part of this kind of new supply chain surface, but the methods are exactly the same as we’ve been seeing forever.

Emily:

Yeah, I think that’s kind of the big piece here is like, it’s just sort of expedited in a lot of cases. We’re not seeing anything particularly new or novel, at least that I’m aware of. But what we’re just seeing is kind of a proliferation of it and faster. And that’s something that, yeah, we can’t discount that. But I think we also need to sort of separate a little bit of the hype and the fear mongering and the FUD around it and sort of go like, well, hold on, wait a minute. What are we actually seeing?

And again, not to discount the fact that there’s a lot more people getting into the game potentially, because it’s really easy to vibe code an SSH brute forcer now. Like it’s still an SSH brute forcer. It’s supply chain. It’s third party. It’s having your creds stolen. And it’s the same types of things, but just with a little bit of assistance.

Himaja:

Yes, and I think the supply chain part of the story is the story that will change the most, right? Because you have this new layer of AI tools that we see in Censys all the time. We see over 900 hosts that are exposing the .claude directory that are leaking it internally on the open Internet. And we’re seeing tons of things like RAGs and LLM front-ends for role-playing with a character and ML workflow automation tools and all of these huge, like there’s this whole new category of software that we’re just seeing being really misconfigured.

But I think the danger that comes with it is for some reason, the trust model with these agents is so different. Like it seems like people, users are so much more comfortable just handing over their entire… like handing root to an AI agent or handing over access to their files to an AI agent that they would think twice about doing for any other category of software. And I think that’s the thing to worry about is that the supply chain risk is kind of advancing at an exacerbated rate, but with this distorted trust structure.

Emily:

Okay, so that’s actually something that has been wild to me as we’ve seen this and even in my own work, right? I’m noticing like, giving permissions to apps is not something that, I think, if you’re security minded/privacy minded, you’re like, no, no, no, don’t need this. But I’m seeing people just blindly, not blindly, but just like openly hand things over to Claude Code or to these other, you know, interfaces where it’s like here, yeah, again, have root on my machine. Like what happened to this, like, least privilege model that we used to operate under? What happened to us? It’s suddenly really changed how we interact with different technology. And like, we’re just sort of giving the keys to the kingdom to these tools and hoping that, you know, hoping for the best. And not to say that they can’t do really cool things when given those keys, but wow, now they have the keys.

Himaja:

Now they have the keys and they’re not being monitored in the same way as other things are. And I think it’s totally a psychology thing, right? We always say the next layer of the OSI stack is the user and it’s really coming into play here. Especially with things that are marketed as like productivity tools or like automation tools. I see this a lot more where people think, you’re helping me be better at my job. You’re helping me be more productive. Here, have everything, have my life story.

Emily:

Have my inbox, have my Google workspace, have everything, and I’m sort of like, wait, really? I still feel a little weird about this. I’m not anti-AI, but I’m sort of like, are we really doing that? Okay, I guess, sure. But yeah.

Himaja:

Yeah, it’s something we’re going to continue to see play out, but I think the takeaway here is that it’s not going to be an apocalyptic doomsday reality.

Emily:

Not yet.

Himaja:

Not yet. When it can do my laundry, maybe then I should be scared.

But the conversations that we’ve been having for the past decade are still relevant. And I think that to memeify this, we have exploits at home.

Himaja:

So TLDR, we have these god-tier AI models that are claimed to be able to find zero days in pretty much everything. And then on the other side, we have nation-state contractors continuing to exploit critical infrastructure with no zero days in sight, no zero days needed. And so I think that the bottom line is that the attack surface for the Internet will continue to expand into places we are unfamiliar with and places we haven’t hardened yet. And we’re going to keep seeing that play out, especially with the new AI tooling. But while that happens, we are monitoring it in Censys, the data to see it all exists. But the question is whether you know what you have in your environment.

All the research referenced in this episode is linked below. Much of it is also available on the Censys blog.

Research and References

Iran / ICS

- ARC Situation Report: Iran-Affiliated APT Targeting of Rockwell/Allen-Bradley PLCs

- ICS & Iran, Part 2: Revisiting Exposure of Previously Targeted Devices

- Joint CISA Advisory: Iranian-Affiliated Cyber Actors Exploit Programmable Logic Controllers Across US Critical Infrastructure

AI

- Vercel April 2026 Security Incident

- Hackers Weaponize Claude Code in Mexican Government Cyberattack

- Mozilla Mythos Project (AI for Firefox bug discovery)
